Technologies come and go, but LCD technology has so far seen off all comers. Can microLED, finally, take its crown?

LG's LSAB009 microLED display. Image: LG.

A long time ago, there was something called a plasma screen, and it was most wonderful. At the time, TFT-LCD displays were only really suitable for cash registers, Game Boys and those peculiarly washed-out and grainy seatback displays that still exist in the very oldest airliners. By comparison, plasma looked good from anywhere in the room, had rich and dense blacks, and had absolutely no blur, lag or strange colour artefacts on moving images. In fact, most of them were so fast that they actually strobed several times per frame, just to make up brightness, which could make them a bit of a nightmare for people looking to include them in set designs.

The problem was that plasma screens were hard to make, dim, and tended to wear out quickly, especially if we tried to solve the dimness. They were also amazingly heavy and used a lot of power. Before those problems were solved, the LCD people had improved their technology to the point where LCD displays were already better than plasma for most purposes. Plasma display panels, which had shown so much promise, and which had a technological lineage going all the way back to the bright orange laptop displays of the 1980s, were more or less obsolete. They were out of manufacture globally by the mid-2010s.

This is what a plasma display looks like up really, really close. Somewhat like a TFT-LCD, only with more black area.

So, can anyone think of another technology that seeks to replace LCD, has been hard to manufacture, struggles for high brightness and can wear out quickly, especially if driven hard?

It might seem a little premature to start counting OLED out, given the fact that the technology is currently dominating the world of high-end displays in both the consumer and professional spheres. There really, honestly, isn't a substitute for the inky blacks, the huge gamut, and the blinding speed of an OLED panel. But then there wasn't any substitute for some of the things plasma could do, either, and it, eventually, was replaced.

OLED has always been a difficult technology. Remember the little glass panel Sony showed at several NABs in a row as it struggled to perfect the process? It became the XEL-1 TV, launched at the end of 2008 to reviews blunted by the swingeing $2500 price tag, low resolution and dubious colorimetry; it was subjectively pretty, but went out of production eighteen months later.

And Sony is still not the world's leading manufacturer of OLED display panels; it didn’t launch an OLED Bravia until 2017 and we might assume it's buying the 55” panels for its large broadcast monitor from LG. Sony has backed away from what has been called “top output” OLED, the really bright, thousand-plus-nit, high-output stuff as used in the seminal BVM-X300, and it doesn't seem likely that consumers would be willing to pay what it would cost for LG to chase after the same numbers, even if there were an obvious engineering route to making that happen. Many panels are forced to use white-emitting subpixels to achieve higher output, compromising colour performance.

OLED, not the only game in town.

OLED is hard.

It's easy, at this point, to start talking about dual-layer LCDs. Manufacturers don't like the term “dual layer LCD,” possibly because they've spent a lot of money with a branding agency to come up with something that sounds more splendid, but it describes the fundamental approach taken by panels like that used in the BVM-HX310.

It works pretty well. Sony has repeatedly shown the X300 and the HX310 side by side. They are not identical, but the differences are so microscopic as to defeat even the most golden-eyed colourist outside the context of a side-by-side comparison.

BVM-HX310. Bright enough to take photos by.

Enter MicroLEDs

But there is another pretender. MicroLED takes conventional LEDs, roughly the same tech as is emblazoned up and down the Las Vegas strip, and makes them small. Very small: the best video walls, including options from Sony and Samsung, look like a very coarse plasma screen up close.

At some point, further miniaturisation turns it into a microLED display. They’re being proposed for things like cellphones, with rumours (though rumours only) of Apple taking an interest. If they can get that small, they can certainly get small enough for a decent 4K desktop display. OLEDs, despite the similarity in the name, do not use quite the same physics as conventional LEDs, and microLEDs should have all the advantages of OLED and some other things besides.

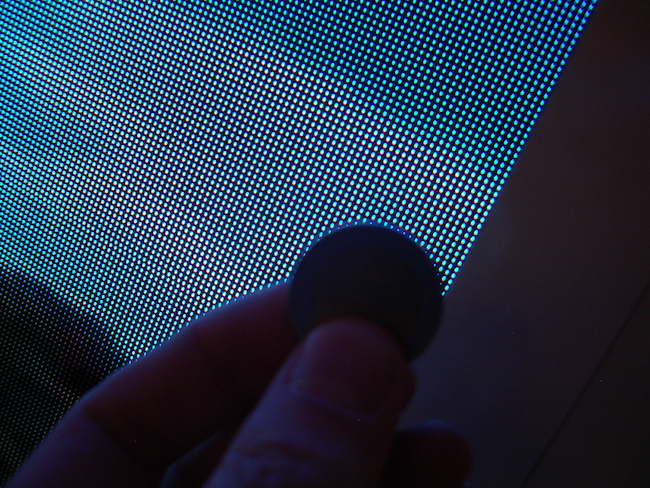

Sony's CLEDIS display. That's a British penny in direct contact with the display - small, but not cellphone-worthy. Things are improving, though.

We're some way from being able to tell which way this is going to go. If microLED becomes first established and then established and cheap, it could displace LCD in the way we all sort of hoped OLED would. It could easily displace OLED, which is still an expensive thoroughbred and a tricky beast. And of course, the incumbent LCD technology, with companies like Samsung pushing some big, impressive TVs, is unlikely to go down without a fight.

For most of the period where there have been electronic moving pictures, we've had exactly one technology that was capable of displaying them. Overlooking projection, we've now had at least four (CRT, plasma, TFT-LCD and OLED, not including things like nano-spindt field emission displays, which popped up at NAB 2008 but otherwise barely made it out of the lab). The fact that there are now three technologies in serious contention, all of which are capable of greatness, is a reason for celebration if ever there was one.

