One of these will help you power the Tone Mapping you need.

Pushing enough information per pixel around a screen to fill an HDR colour space takes the processing power of a GPU, and AMD's gaming heritage helps its GPUs do just that.

No new video technology makes people sit up and notice more than HDR. Not everyone, even under optimal conditions, can see the difference between HD and 4K, but even those with defective eyesight can immediately notice that HDR is better than “standard” dynamic range, no matter what the underlying resolution.

This is probably why HDR is being adopted so quickly. The really good news is that HDR is in some ways easier to embrace than images with more pixels. Essentially, instead of getting more pixels, you get better ones.

They’re better because an HDR pixel can express a wider range of shades, including deeper blacks and brighter whites. Much of this is new to video and filmmakers, but the concept has been around for a while in computer games.

For some time now, computer games have been able to work in an HDR mode, where bright light sources and reflections are depicted without overexposure. Until recently, this has only been partially effective, because to reproduce HDR accurately your display has to be able to match the range between light and dark in your content, and mostly this hasn’t been the case.

So to achieve the appearance of HDR (on a non-HDR monitor) there has to be a clever technical compromise, called Tone Mapping. Here’s how it works.

Raw video from a camera with a modern sensor might have a dynamic range of 12 stops or more. Standard dynamic range monitors (formerly known as “monitors”) can only reproduce around 7 stops. In order to show HDR material on an SDR monitor, you have to “tone map” your content. Imagine your HDR content has a range of one to a hundred levels of brightness and your monitor has only 40 levels. Level 40 on the monitor will be nowhere near as bright as level 100 in your HDR material, and level 1 on the monitor will be nowhere near as dark as level 1 in your HDR material. Tone mapping takes each level on the HDR scale and places it proportionately on the SDR scale, without chopping off any of the levels at the top and bottom of the scale.
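To make the idea concrete, here is a minimal Python sketch of the proportional placement described above, using the article's own illustrative figures of 100 HDR levels and 40 monitor levels. The function names are hypothetical, and the assumption that the monitor's levels run from 1 to 40 is ours. Real tone mappers generally use non-linear curves rather than a straight line, so treat this as an illustration of the principle and not as any vendor's actual algorithm.

```python
def tone_map_proportional(level, hdr_range=(1.0, 100.0), sdr_range=(1.0, 40.0)):
    """Place an HDR level at the same relative position on the SDR scale."""
    hdr_lo, hdr_hi = hdr_range
    sdr_lo, sdr_hi = sdr_range
    if not hdr_lo <= level <= hdr_hi:
        raise ValueError("level is outside the HDR range")
    # How far along the HDR scale this level sits, from 0.0 to 1.0.
    fraction = (level - hdr_lo) / (hdr_hi - hdr_lo)
    return sdr_lo + fraction * (sdr_hi - sdr_lo)


def clip_to_display(level, sdr_range=(1.0, 40.0)):
    """The naive alternative: chop off anything the display cannot show."""
    sdr_lo, sdr_hi = sdr_range
    return min(max(level, sdr_lo), sdr_hi)


if __name__ == "__main__":
    # Compare the two approaches for a few HDR levels.
    for level in (1, 25, 50, 80, 100):
        mapped = round(tone_map_proportional(level), 2)
        print(level, mapped, clip_to_display(level))
```

With clipping, HDR levels 80 and 100 both land on monitor level 40, so highlight detail is lost. With the proportional mapping they stay distinct, at the cost of squeezing every level closer to its neighbours.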

The effect of this can look rather artificial, but it has the advantage that, for example, a sky on a cloudy day doesn’t look uniformly white, and there is at least some detail in darker areas. Still, it doesn’t look very natural on displays that aren’t capable of showing the dynamic range required by HDR. That’s why HDR is so exciting today. Monitors and TVs that are genuinely able to depict HDR are in the shops. It’s happened faster than many of us expected.

AMD has deep roots in gaming technology and has designed its products to be fully HDR capable. This know-how, which extends back several years, is now right at the core of modern post production workflows.

AMD GPUs have 32-bit floating point internal processing and, where needed, can provide up to 64 bits per pixel - enough for any HDR colour space. Colour space conversions are inevitable with HDR post production and preparation, so it’s essential to have enough precision to avoid visible damage to the material. Now that HDR displays are available, AMD is at the forefront of the essential process of interfacing GPU output with HDR monitors. The GPU company has a tone mapper that takes pristine HDR material and outputs it to HDR monitors in the best possible quality.
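Why precision matters during conversions can be shown with a small Python sketch. It is not a model of AMD's pipeline: a simple halve-then-double gain stands in for a colour space conversion and its inverse, and all function names are hypothetical. The sketch counts how many of the 256 input levels survive the round trip when the intermediate result is stored as an 8-bit integer, compared with keeping it in floating point.

```python
def to_code(value, bits=8):
    """Quantise a 0.0-1.0 value to an integer code, clamped to the valid range."""
    max_code = (1 << bits) - 1
    code = int(value * max_code + 0.5)
    return min(max(code, 0), max_code)


def round_trip_8bit(code):
    """Halve the signal, store it as 8 bits, then double it again."""
    halved = to_code((code / 255) * 0.5)
    return to_code((halved / 255) * 2.0)


def round_trip_float(code):
    """Same operations, but the intermediate value stays in floating point."""
    halved = (code / 255) * 0.5
    return to_code(halved * 2.0)


def surviving_levels(round_trip):
    """Count the distinct output codes across all 256 input codes."""
    return len({round_trip(code) for code in range(256)})


if __name__ == "__main__":
    print("8-bit intermediate:", surviving_levels(round_trip_8bit))
    print("float intermediate:", surviving_levels(round_trip_float))
```

In this toy case the 8-bit intermediate merges neighbouring levels, which is the kind of loss that shows up as banding, while the floating point path returns every input level intact.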

Another AMD advantage: AMD technology is based around open standards. Software designers don’t have to restrict themselves to working on proprietary, single-vendor platforms. Right now, HDR is at a stage in its evolution where it arguably has either too many standards (there are several competing ones), or too few of them (there’s no single standard that everyone is converging on). So it’s essential for GPU platforms to be adaptable and flexible. AMD’s openness means that more software will be available to run on its GPUs, and that users have more options as they navigate the choppy waters of early HDR adoption.

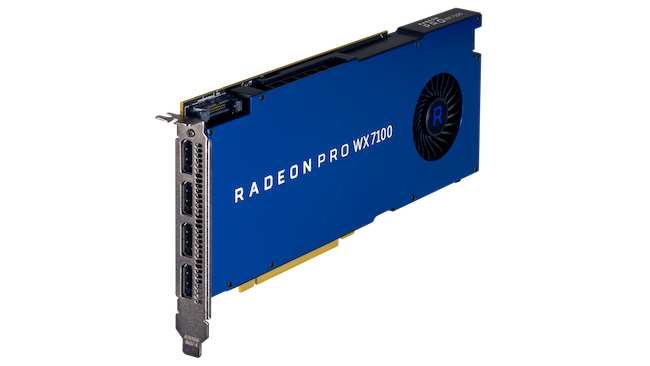

It’s clear that HDR is at the forefront of the next generation of content production, and, almost inevitably, a GPU is at the epicentre of a modern post production workflow. If you’re planning to work with HDR material soon, check out AMD’s Radeon Pro products here.

AMD Radeon Pro-powered machines like this Dell Precision T7910 tower will help you realise the HDR future
