In the days of 7-stop cameras it was easy to measure dynamic range. But what about now?

A lot is made of dynamic range in modern cameras. But can it really, truly be measured easily and accurately?

Of all the numbers chased by camera people, dynamic range is probably the most sensible. Now that we can have more or less any resolution we'd like, the call for better pixels rather than more pixels is fairly well established. Really, though, we've been demanding better pixels ever since someone concluded that much of the reason 1990s video didn't look like film was that it had so much less dynamic range. At the time of the big changeover many video cameras threw away horrendous amounts of dynamic range with deliberate colour processing, but the practical result was the same. Early in the transition from film to video, dynamic range became a factor worth caring about.

Classic video

Dynamic range can be fairly difficult to measure. That wasn't the case back in the bad old days of seven-stop video, when the brightest stop visible to the camera was represented by only 128 times more actual photons than the blackest stop. Making a chart to measure that is fairly easy: you can almost achieve it by printing a really good black ink onto a piece of white paper at various densities. The problem is that modern cameras are promoted with dynamic range around the fifteen-stop level and, regardless of the plausibility of these claims, that means the brightest patch needs 32,768 times more photons coming out of it than the very darkest one.
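The arithmetic behind those figures is simply that each stop is a doubling of light. As a minimal sketch (the function names here are made up for illustration, not taken from any measurement tool), the conversion between stops and contrast ratio looks like this:

```python
import math


def contrast_ratio(stops: float) -> float:
    """Ratio between brightest and darkest level for a given number of stops.

    Each stop doubles the light, so the ratio is 2 raised to the stop count.
    """
    return 2.0 ** stops


def stops_from_ratio(ratio: float) -> float:
    """Inverse of contrast_ratio: how many stops a given ratio spans."""
    return math.log2(ratio)


if __name__ == "__main__":
    # The two cases discussed in the text: old video and modern claims.
    for stops in (7, 15):
        print(f"{stops} stops -> {contrast_ratio(stops):,.0f}:1")
```

Run as a script, this prints the 128:1 and 32,768:1 figures quoted above. The same helper makes it easy to see how much harder every extra claimed stop makes life for the chart designer.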

That's difficult, especially if it's taken into account that the chart needs to have a bit more range than the camera so that it is possible to see where the camera starts to clip. It's difficult to make a sufficiently deep black, and a sufficiently bright white. We can help conventional printing processes achieve better contrast by using a gloss finish, which is why photographic (and inkjet) prints of holiday snaps are traditionally printed on a high-gloss paper. It's maybe a bit more difficult to look at, because of the reflections, but if the gloss surface is angled so that the reflections can't be seen, then most of the light that's bouncing off the surface isn't going into our eyes, and the blacks look pretty black. Use a matte surface, and all the light that bounces off the surface is being diffused into the eyes of anyone who looks at it from any angle, increasing the minimum brightness of the surface.

Cavity black

Test charts often need a “cavity black”, which is actually a hole cut in the chart with a box behind it, the box being lined with black velvet. This makes it very unlikely that any photon which goes inside will bounce around and come back out again, so the blackest-looking chip can achieve a lower black level than even the blackest ink on the glossiest chart. Modern technology may come to the rescue here: the development of extremely light-absorbing surface coatings such as the carbon nanotube arrays trademarked Vantablack may make it possible for test charts to achieve better blacks even than a cavity black.

So there may be an answer to blacker blacks, at least as a reference. Even then, creating a linear ramp of brighter and brighter chips isn't something that's helped much by an ever-denser black reference. We could start thinking about patterned ultra-black coatings to create partial transparency, although that risks moiré patterns unless special measures are taken. Either way, we're probably talking about a transparent chart rather than a reflective one, because otherwise it's very difficult to ensure that there's enough light coming out of the brightest white patches.

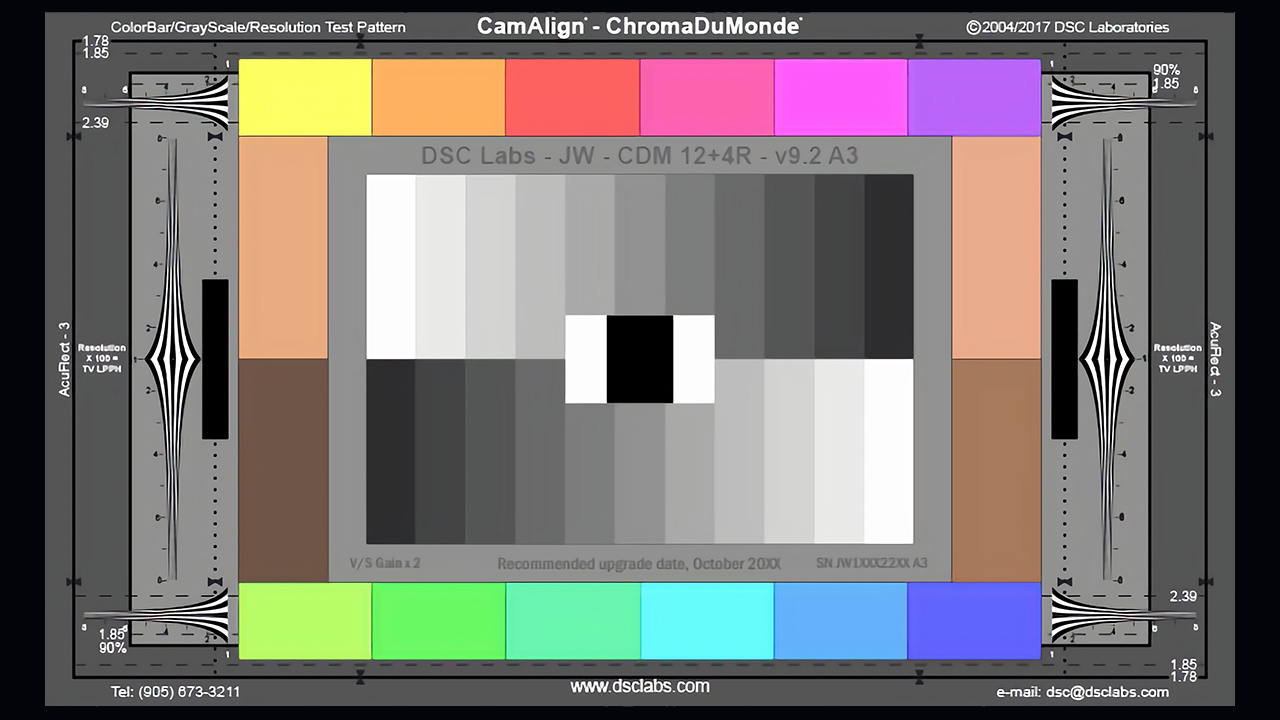

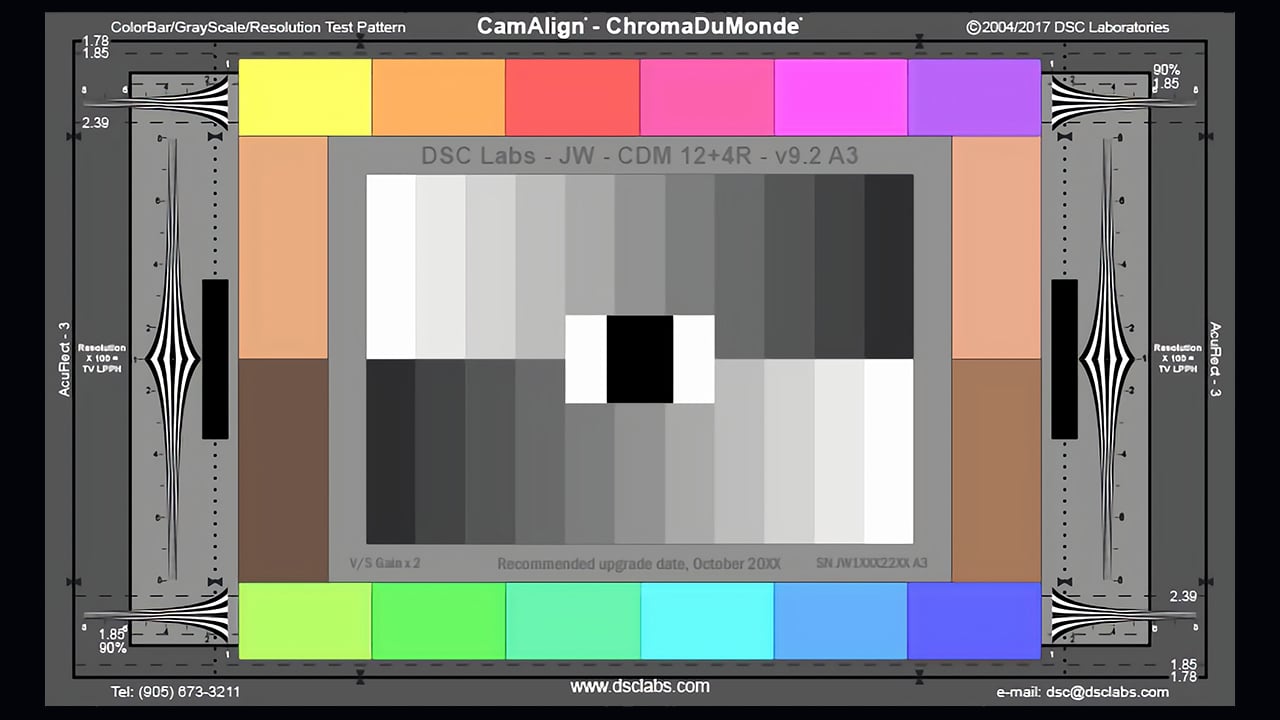

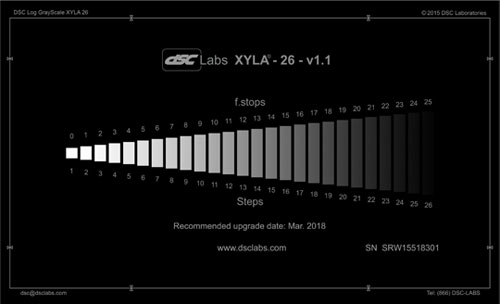

The DSC Labs Xyla chart

So, we've got a chart made out of (basically) very accurate ND filters, and it is placed in front of a light box powerful enough that the brightest patch just barely clips the camera. To determine the dynamic range of the camera, all we need to do is count patches down to the darkest one that can be distinguished from background noise, right?

Extreme ranges

Er, no. On modern cameras, the variation in brightness between the brightest and darkest patches is so extreme that, even with very high contrast lenses, the lens flare (properly called the glow or veiling) caused by the brightest patches will be enough to obscure the darkest. From here on, this is essentially describing the features of DSC Labs Xyla charts, which have been designed to overcome most of the issues we're discussing. What all this means, though, is that there's a question over what is actually being measured with this technique.
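To get a feel for how badly veiling can eat into the numbers, here's a rough sketch under a deliberately crude assumption: the lens adds a uniform veil of light to every patch, equal to some small fraction of the brightest patch's luminance. Real flare is neither uniform nor that simple, and both `measured_stops` and `flare_fraction` are hypothetical names invented for this illustration.

```python
import math


def measured_stops(chart_stops: float, flare_fraction: float) -> float:
    """Stops between brightest and darkest patch as seen through the lens.

    Assumption (a simplification, not a lens model): veiling adds the same
    amount of light to every patch, given as a fraction of the brightest
    patch's luminance.
    """
    brightest = 1.0                           # normalised brightest patch
    darkest = brightest / 2.0 ** chart_stops  # darkest patch on the chart
    veil = flare_fraction * brightest         # uniform light added by flare
    return math.log2((brightest + veil) / (darkest + veil))


if __name__ == "__main__":
    # A fifteen-stop chart viewed with no veil, then with small veils.
    for flare in (0.0, 0.0001, 0.001, 0.01):
        stops = measured_stops(15, flare)
        print(f"veil {flare:.2%} of peak -> about {stops:.1f} stops at the sensor")
```

Under this toy model, even a veil of a tenth of a percent of the peak brightness is many times brighter than the darkest patch of a fifteen-stop chart, so the range reaching the sensor collapses to something much smaller than the chart provides. That's the problem the chart designers are trying to work around.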

First, the Xyla charts make the brighter stops smaller than the darker stops, with the idea that they'll produce less obvious veiling. Other charts have provided a sliding mask, so that the camera (or more to the point, the lens) is only exposed to one, or a few, patches at once, so the range of brightness at any one time isn't big enough to cause flare problems. The thing is, this poses questions about what is actually being measured. By masking off the brighter patches, the issue of lens flare spoiling our results is removed, but we're then setting things up with the specific intent of measuring the dynamic range solely of the sensor, as opposed to the entire camera system.

But cameras have lenses, which raises questions over how useful all that dynamic range is, when we can't ever have the darkest black the sensor can see alongside the brightest white. We can never see those things side by side because lens flare will always lift that black. What we're interested in, in reality, is the change of light during a scene, where we can shoot into a dark shadow one moment then move over to look at something much brighter, without changing camera settings. Still, it's an interesting issue, and one that reinforces just how hard it can be to measure modern cameras.

Don't let's even get started on colour.
