Nikon has developed a new 1-inch sensor capable of up to 1000fps in 4K. Is this finally the holy grail we have been waiting for: an ultra-high-speed, ultra-high-definition camera that doesn't cost the same as a house?

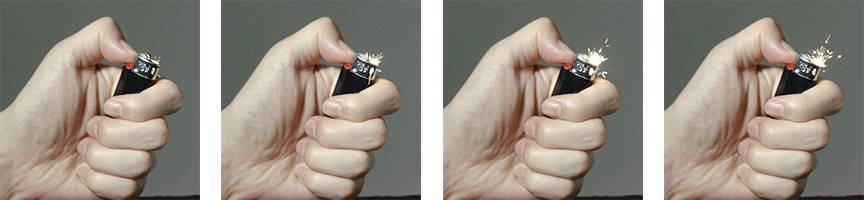

When we talked recently about the lack of an affordable thousand-frame-per-second 4K camera, there didn’t seem to be any realistic prospect of an immediate answer to our prayers. Now, that piece seems almost prophetic, because Nikon has announced a one-inch sensor capable of just that. At this stage, the device we’re discussing appears to exist in at least prototype form, with frames shot by the sensor shown as part of the presentation.

The information emerged at the International Solid-State Circuits Conference in San Francisco back on February 15th, and represents an interesting advance for Nikon. Rival Canon has always been a massively capable manufacturer of sensors, whereas many of Nikon’s digital cameras have used Sony sensors, with some contributions from Toshiba. Nikon has certainly done a lot of design work, but the massively complex and expensive manufacturing process has always been sent out. Canon, conversely, owns the infrastructure required to fabricate sensors in-house, giving the company a significant leg-up in terms of competitive technology.

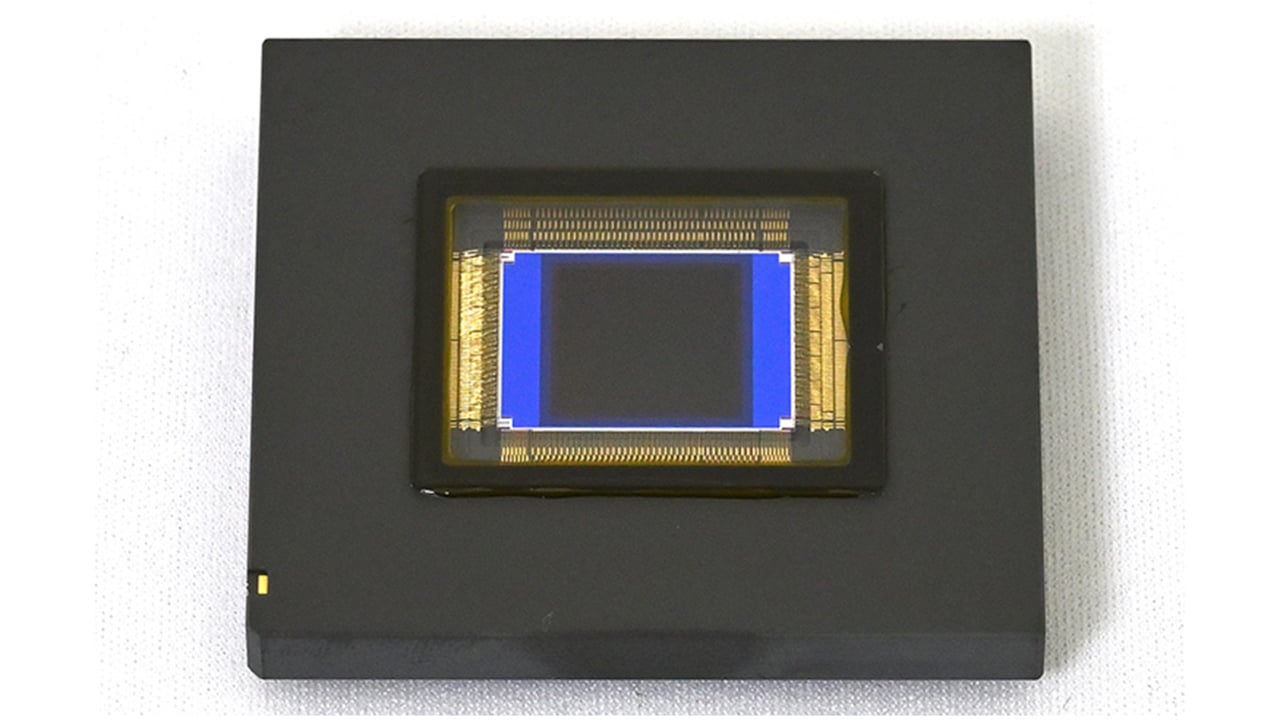

Image: Nikon.

We’re at risk of over-interpreting our evidence here, but there’s also potentially some politics at play. Back in the virus-free days of August 2019, sonyalpharumors.com published the view of someone fairly senior at semiconductor foundry TowerJazz (now Tower Semiconductor), who opined that Nikon might do well to look elsewhere, given that Sony’s growing interest in stills cameras might influence its willingness to sell competitive sensors. This needs to be taken in the context that Tower is of course a Sony competitor in terms of sensor manufacturing, but the thought is a reasonable one.

The new sensor design

The new design involves, as we might expect, a back-illuminated layout, which has become common since Sony introduced it to the consumer world in 2009. The idea here is to allow light to strike the surface of the device that doesn’t have any (opaque) metal wiring on it, which would usually be considered the back of a normal semiconductor. More sensitivity is always welcome, but it’s even more crucial for super high speed cameras; at 1000fps no exposure can last longer than a millisecond, so this is not likely to be an endeavour compatible with low-light shooting.
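
To put a rough number on that light penalty, here is a minimal sketch comparing the longest possible exposure per frame at 1000fps with a conventional 24fps setup. The 180-degree shutter angle and the 24fps reference are our own assumptions for illustration, not figures from Nikon.

```python
import math

def exposure_time(fps, shutter_angle_deg=180.0):
    """Exposure per frame in seconds for a given frame rate and shutter angle."""
    return (shutter_angle_deg / 360.0) / fps

def stops_lost(fps_fast, fps_ref=24.0, shutter_angle_deg=180.0):
    """Stops of light lost going from fps_ref to fps_fast at the same shutter angle."""
    ratio = (exposure_time(fps_ref, shutter_angle_deg)
             / exposure_time(fps_fast, shutter_angle_deg))
    return math.log2(ratio)

# Assumed comparison: 1000fps against 24fps, both with a 180-degree shutter.
print(f"{exposure_time(1000) * 1e6:.0f} microseconds per frame at 1000fps")
print(f"{stops_lost(1000):.2f} stops less light than at 24fps")
```

Under those assumptions the high speed mode gives up a little over five stops, which is why any sensitivity gained from back illumination matters so much here.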

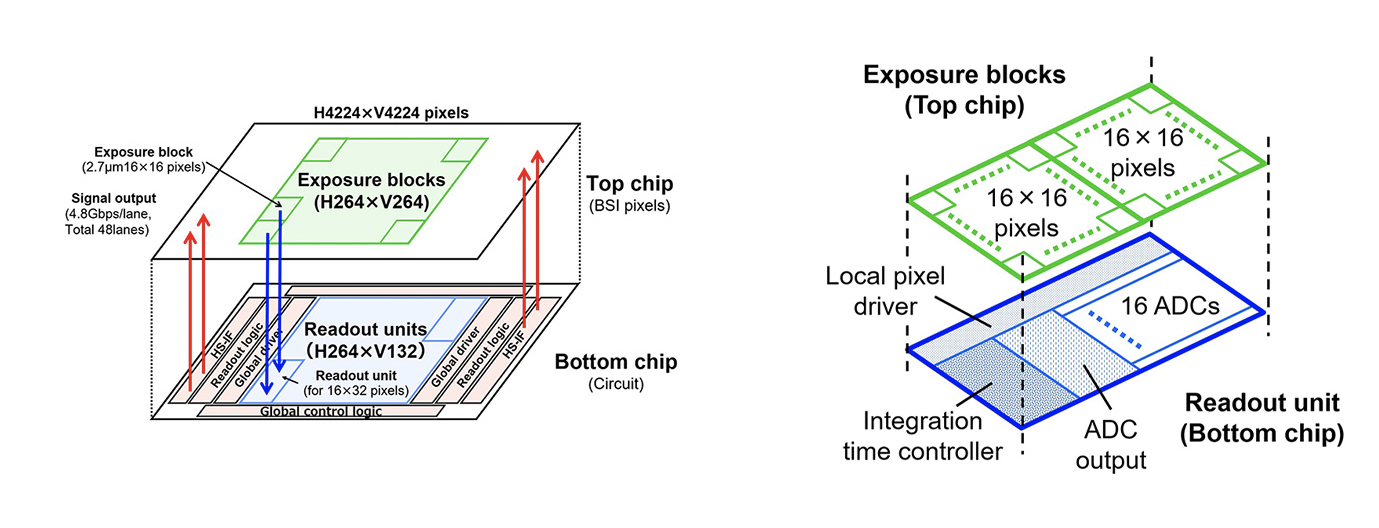

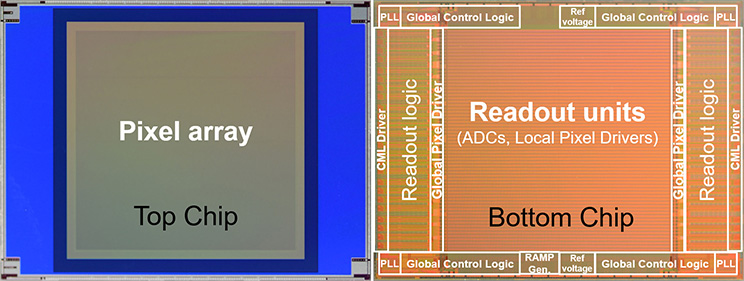

The sensor's layering system. Image: Nikon.

What’s more interesting, maybe, is the way the layers of the sensor are connected together. Manufacturers have long been pushing for a way to put the light-sensitive photodiodes and the associated signal processing electronics on separate layers. There are two reasons to do that: first, to keep as much area clear for photodiodes as possible so they can be as large as possible, and second, to allow the photodiodes and processing circuitry to be made separately, using processes specifically designed to get the best results for each, then combined. Current designs usually have to compromise on the performance of the photodiodes to make the circuitry work. It’s not clear that Nikon’s design actually manages to implement that second goal, but it’s possibly a step in that direction.

The chip itself has an array of photosites 4K square, and since it’s a one-inch sensor, that puts them on a 2.7 micrometre pitch. By way of comparison, that’s larger than the pitch of an Ursa Mini Pro 12K, though considerably smaller than that of the 4.6K. Nikon claim that the sensor has a signal to noise ratio of 110dB when shooting at 1000 frames per second. If that’s calculated in the same way we’d calculate dynamic range in stops, then the company has managed to pack some serious alien technology in there; 110dB is mathematically equal to over 36 stops if it’s read as a power ratio at roughly 3dB per stop, and still more than 18 stops under the 6dB-per-stop convention more usual for sensor signals. Either figure, to put it mildly, defies reasonable expectation, so we’ll work on the basis that there are some assumptions in there that don’t quite match the way we usually evaluate cameras and wait to see what the practical reality looks like.
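
For anyone who wants to check the arithmetic, the sketch below works through both numbers. It assumes "4K" means 4096 photosites per side and that a one-inch-type sensor has a diagonal of about 15.86mm (the diagonal of the common 13.2 by 8.8mm format); neither figure comes from Nikon's presentation. The decibel conversion is shown under both conventions, since we don't know which one the 110dB figure uses.

```python
import math

ONE_INCH_DIAGONAL_MM = 15.86  # assumption: diagonal of a 13.2 x 8.8mm one-inch-type sensor
PIXELS_PER_SIDE = 4096        # assumption: "4K square" taken as 4096 x 4096

def square_pitch_um(diagonal_mm, pixels_per_side):
    """Photosite pitch in micrometres for a square array with the given diagonal."""
    side_mm = diagonal_mm / math.sqrt(2)
    return side_mm * 1000.0 / pixels_per_side

def db_to_stops(db, db_per_decade):
    """Convert decibels to stops (doublings).

    db_per_decade is 20 for a signal (amplitude) ratio, 10 for a power ratio.
    """
    ratio = 10 ** (db / db_per_decade)
    return math.log2(ratio)

print(f"pitch: {square_pitch_um(ONE_INCH_DIAGONAL_MM, PIXELS_PER_SIDE):.2f} micrometres")
print(f"110dB as a signal ratio: {db_to_stops(110, 20):.1f} stops")
print(f"110dB as a power ratio:  {db_to_stops(110, 10):.1f} stops")
```

The pitch lands close to the 2.7 micrometres quoted, and the two decibel conventions differ by exactly a factor of two in stops, so it matters a great deal which one a manufacturer has in mind.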

Conclusions

It may be that some of these numbers arise from the ability of the sensor to adjust the readout time over small, 16-by-16 blocks of pixels. How useful that is in terms of conventional photography remains to be seen; without careful postprocessing it would naturally end up creating a sort of tiled appearance to the image, and even with that postprocessing there’s the question of how we know how long an exposure to use for each frame before we actually start that exposure. There are some demonstration images of the extra-high dynamic range behaviour, but they’re very small and don’t show much detail.
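
To illustrate why that postprocessing is needed, here is a toy sketch. It assumes the per-block adjustment amounts to a different exposure time for each block, and that undoing it is as simple as dividing each pixel by its block's exposure time. That is our simplification, not Nikon's published method, and it ignores saturation and noise entirely. The function name and demo values are hypothetical.

```python
BLOCK = 16  # block size mentioned in the presentation; the rest is illustrative

def normalise(raw, block_exposures, block=BLOCK):
    """Divide each pixel by its block's exposure time to reach a common linear scale.

    raw: 2D list of sensor values, one inner list per row.
    block_exposures: 2D list holding one exposure time in seconds per block.
    """
    out = []
    for y, row in enumerate(raw):
        out.append([value / block_exposures[y // block][x // block]
                    for x, value in enumerate(row)])
    return out

# Tiny demo using 2x2 blocks: an evenly lit scene captured with two different
# exposure times looks tiled in the raw data but flat once normalised.
scene = 1000.0                  # hypothetical light level, in units per second
exposures = [[0.001, 0.0005]]   # the left block exposes twice as long as the right
raw = [[scene * exposures[0][x // 2] for x in range(4)] for _ in range(2)]
flat = normalise(raw, exposures, block=2)

print(raw[0])
print(flat[0])
```

The raw row shows a visible step at the block boundary, while the normalised row is uniform. What this does not address is the harder question above: choosing each block's exposure time before the exposure starts.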

We also don’t know what these sensors will cost. There are already sensors which will do what this will do in every Vision Research Phantom Flex4K - at a price. And equally, there’s more to a Phantom Flex4K than its sensor; there’s also a very large amount of very fast flash storage, among other things. It’s always difficult to predict where something like this might go. It’s a lab prototype, an early example. If the advancement in technology allows it to be affordable, though, it could end up solving exactly the problem we were complaining about before, which would be great.
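
As a rough indication of why the storage matters as much as the sensor, this sketch estimates the uncompressed data rate. The 4096 by 4096 resolution and the 12 bits per photosite are assumptions on our part; Nikon's actual readout format may differ.

```python
def raw_data_rate_gb_per_s(width, height, fps, bits_per_pixel):
    """Uncompressed sensor data rate in gigabytes (10^9 bytes) per second."""
    bytes_per_frame = width * height * bits_per_pixel / 8
    return bytes_per_frame * fps / 1e9

# Assumed figures: 4096 x 4096 photosites, 1000fps, 12 bits per photosite.
rate = raw_data_rate_gb_per_s(4096, 4096, 1000, 12)
print(f"{rate:.2f} GB/s uncompressed")
print(f"{rate * 10:.0f} GB for ten seconds of recording")
```

Even if the real numbers are somewhat lower, a camera built around a sensor like this needs memory that can swallow tens of gigabytes per second, and that is not cheap either.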
