FilmConvert recently ran an experiment with its CineMatch software, attempting to match footage from an iPhone 12 to that of an ARRI Alexa. The colour match is surprisingly close, though there’s much more at play here.

The idea of putting a digitally-photographed image through a magical process that makes it look, well, let’s just say it – more like film, is nothing new. The very word “filmlook” properly refers to a proprietary process that existed to make video look like film in the days when “video” meant Digital Betacam and one could still shoot standard definition and expect to be taken seriously. The technology has improved quite a bit since then, not least in its ability to analyse and automatically match two pictures in a way that even the most golden-eyed colourist might have to sit and think about for a while.

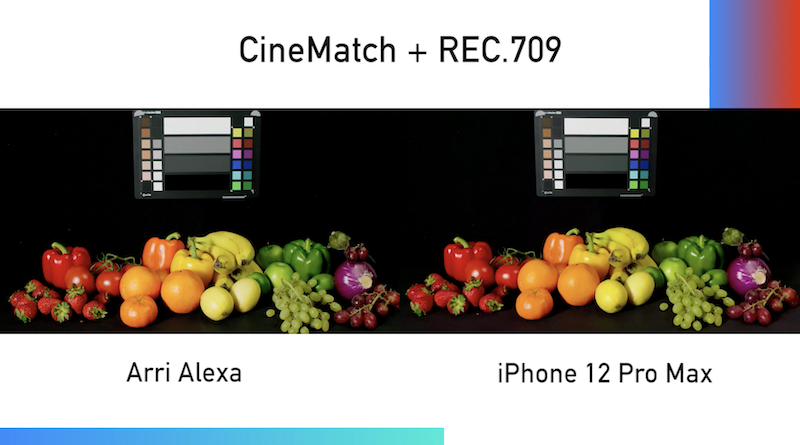

FilmConvert’s product of the same name is one of the more developed options in that field, and now the company is also offering CineMatch, which does exactly what it sounds like – it matches one camera to another. The existence of such a thing does, of course, provoke all sorts of Machiavellian ideas about matching inexpensive cellphones to really expensive digital cinema cameras, something the company demonstrates in a video recently uploaded to its YouTube channel, which puts the Alexa side by side with the iPhone 12.

Before we dive into the results of this exercise in advanced overambition, it’s as well to reinforce a few unfortunate realities about converting less-wonderful footage into more-wonderful footage. The most basic approach is the LUT pack, of which there are probably thousands available, each produced by someone shooting some footage, grading it for a certain look, and exporting the grading decisions involved as a .cube file. That’s fine as far as it goes, although the result depends very heavily on how the original footage was shot and on whether the person concerned took the trouble to ensure the LUT works well on a variety of material (the same concerns apply, of course, to making show LUTs for monitoring).

Or, to put it another way, there’s not much point in taking a grading decision made for, say, Memoirs of a Geisha’s famous dance scene, applying it to material of the ballet academy’s infant section performing in a school gym, and expecting the result to look like Dion Beebe’s Oscar-winning work. To be less flippant, LUTs can be extremely sensitive to how the material was shot.
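To see why, it helps to remember that a LUT is nothing more than a fixed table from input values to output values. What follows is a minimal Python sketch under heavy assumptions: it uses a one-dimensional table with invented numbers (real .cube files usually hold a 3D lattice of RGB values), and `LOOK_LUT`, `apply_lut` and `expose` are hypothetical names for illustration, not part of any real tool.

```python
# A toy 1D "look" LUT: output values sampled at evenly spaced inputs 0..1.
# The numbers are invented for illustration, not taken from any real LUT pack.
LOOK_LUT = [0.0, 0.10, 0.30, 0.60, 0.85, 1.0]


def apply_lut(value, lut=LOOK_LUT):
    """Map a normalised pixel value (0..1) through the LUT, interpolating linearly."""
    value = min(max(value, 0.0), 1.0)          # the table has no entries outside 0..1
    position = value * (len(lut) - 1)          # fractional index into the table
    index = min(int(position), len(lut) - 2)   # lower neighbour, kept in range
    fraction = position - index
    return lut[index] + fraction * (lut[index + 1] - lut[index])


def expose(scene_values, stops):
    """Brighten or darken linear scene values by a number of stops."""
    gain = 2.0 ** stops
    return [v * gain for v in scene_values]


scene = [0.05, 0.1, 0.2, 0.4]

# The exposure the (imaginary) LUT author graded for.
as_graded = [apply_lut(v) for v in expose(scene, 0)]

# The same scene shot one stop hotter, pushed through the same table.
one_stop_hot = [apply_lut(v) for v in expose(scene, 1)]

print(as_graded)
print(one_stop_hot)
```

The table has no idea what the scene was; it only sees numbers. Shoot the same scene a stop hotter and every value lands on a different part of the curve, so the "look" shifts in a way that is not simply a stop brighter.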

A screenshot from the comparison with CineMatch and Rec. 709 output.

Much more than just a LUT

Tools like FilmConvert and CineMatch have much more ability to adjust around the characteristics of the supplied material than a simple LUT might, and the company’s video shows its software doing a creditable job of matching the two cameras. The material is fairly undemanding, under mainly diffuse illumination, and the most obvious caveats to the process are exactly what we’d expect. Specular highlights are, predictably, the iPhone’s Achilles heel, and naturally there’s not much even the cleverest software can do where things are clipped, though AI may yet have something to say about that. Some observers have commented that the iPhone footage seems anything up to a stop hot, and that a more conservative exposure might have helped the software do an even better job, but it’s pretty reasonable nonetheless.
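The point about clipping is worth a tiny illustration. This is a simplified Python sketch, not a model of any real sensor or of what CineMatch does internally; `record` and `pull_down` are hypothetical names, and the luminance figures are invented.

```python
def record(scene_luminance, exposure_gain=1.0, sensor_max=1.0):
    """Simulate a sensor: scale the scene, then clip at the maximum recordable value."""
    return min(scene_luminance * exposure_gain, sensor_max)


def pull_down(recorded, stops):
    """Darken an already-recorded value by a number of stops in post."""
    return recorded / (2.0 ** stops)


# Two highlights of very different brightness, both beyond what the sensor can hold.
lamp, window = 1.5, 4.0

recorded = [record(lamp), record(window)]
recovered = [pull_down(value, 1) for value in recorded]

print(recorded)   # both highlights are stored as the same number
print(recovered)  # pulling down in post gives two identical greys
```

Once both highlights have been stored as the same number, no matching process can tell them apart again; pulling the exposure down afterwards only turns clipped white into clipped grey. That is why a more conservative exposure on the phone would give the software more to work with.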

If there’s anything to complain about, it might be that CineMatch is perhaps a little too willing to dull highlights. Look at the overhead light, just visible in the iPhone footage, about half a minute into the video. The Alexa log still renders it close to, or at, peak white, as did the iPhone in its default setting. CineMatch’s log match makes the light a little dull, although other parts of the frame seem perhaps a little over-bright. Maybe that’s CineMatch trying to maintain the slightly hot exposure, but then the following shot also looks a bit bright in the shadow and midtone detail when we look at the log.

Really, though, we’re picking holes. Colourists might claim that something like CineMatch doesn’t do anything that a skilled pair of hands on the trackballs can’t do, although it’d take a brave individual to claim the ability to match two cameras as fast as a piece of software can. Matching a phone to an Alexa is a stunt, of course, but a revealing one; by the time there’s a (rather harsh) Rec. 709 look on the output, the results are very similar. There are chapters to be written about how reliant phones are on clever software processing to create such impressive results, as if that’s a bad thing. Still, if this is where grading, camera and phone technology have combined to take us, it’s sort of hard to object.

