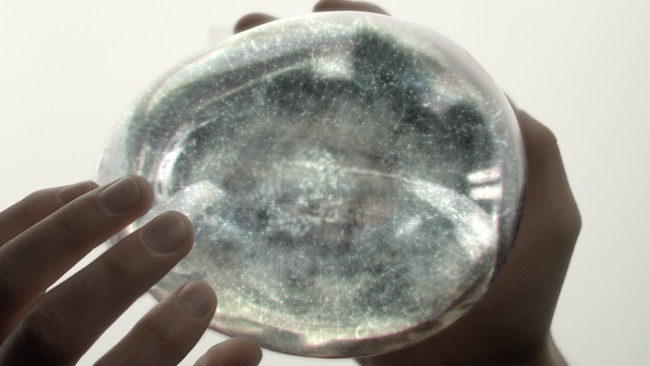

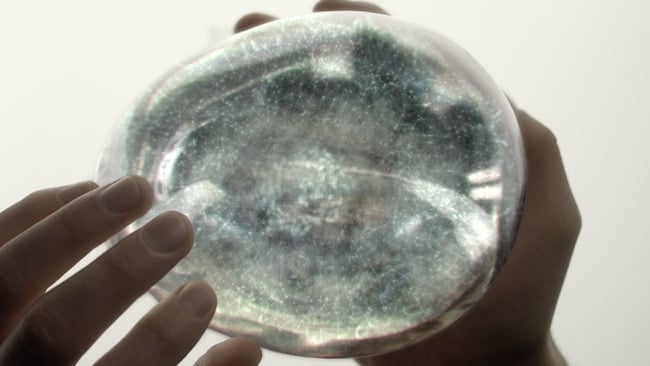

AI inside: Ava’s brain from Ex Machina

Slave to the algorithm: The next wave of technology that looks set to break over our collective heads with the promise of changing all before it is Artificial Intelligence. Here is a snapshot of the story so far.

Everything most of us have learned about Artificial Intelligence (AI) has come from Hollywood. There are the time-traveling robots trying to terminate us before we can give birth to the future leaders of the rebellion, the sadistic machines taking control of spaceships, and the Ex Machina androids that seem more human than human, and still kill us. There are even some we fall in love with, but it still doesn’t end well.

AI is more prosaic than that. Well, at least it is for now.

The biggest corporations on the planet are embedding predictive intelligence into everyday apps to make our lives easier. The most obvious examples are chatbots or artificially intelligent digital agents like Alexa, Siri, Google Assistant, Facebook M, and Cortana.

Another example is Facebook’s photo-tag suggestions, which use image recognition. Amazon recommends products using machine learning algorithms, and Waze (a GPS and maps app) suggests optimal travel routes. It is all becoming increasingly ubiquitous.

Here is Google CEO Sundar Pichai, speaking in October: “We are at a seminal moment in computing. We are evolving from a mobile-first to an AI-first world.”

At the very start of the year, IBM CEO Ginny Rometty told CES: “It’s the dawn of a new era: the cognitive era.”

AI and the cognitive arms race

And she’s probably right. Just now IBM, Amazon, Google, Facebook and Microsoft are locked in an AI arms race.

Equity funding of AI-focused startups reached an all-time high of more than $1 billion in the second quarter of 2016, according to researcher CB Insights.

AI is a catch-all phrase applying to any technique that enables computers to mimic human intelligence, using logic, ‘if-then’ rules, decision trees, and machine learning (including deep learning).

There are nuances, though. Machine learning is a subset of AI made up of abstruse statistical techniques that enable machines to improve at tasks with experience. Deep learning, in turn, is a subset of machine learning: it is composed of algorithms that let software train itself to perform tasks such as speech and image recognition by exposing multilayered neural networks to vast amounts of data.
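To make the distinction concrete, here is a toy Python sketch, not drawn from any product mentioned in this article, contrasting a hand-written ‘if-then’ rule with a single artificial neuron (a perceptron) that learns the same rule from labelled examples. The function names and the choice of a logical AND task are illustrative assumptions; a deep-learning system stacks many layers of neuron-like units and trains on vastly more data.

```python
from typing import List, Tuple

# Each example pairs two binary inputs with the desired output (logical AND).
Example = Tuple[Tuple[int, int], int]
Weights = Tuple[float, float, float]  # (w1, w2, bias)


def rule_based(x1: int, x2: int) -> int:
    """Hand-coded 'if-then' logic: the programmer writes the rule directly."""
    if x1 == 1 and x2 == 1:
        return 1
    return 0


def predict(weights: Weights, x1: int, x2: int) -> int:
    """Fire (return 1) if the weighted sum of the inputs exceeds zero."""
    w1, w2, bias = weights
    return 1 if w1 * x1 + w2 * x2 + bias > 0 else 0


def train_perceptron(data: List[Example], epochs: int = 10, lr: float = 1.0) -> Weights:
    """Learn the rule from examples instead of having it written by hand.

    Every mistake nudges the weights towards the correct answer, which is
    the sense in which the model 'improves at tasks with experience'.
    """
    w1 = w2 = bias = 0.0
    for _ in range(epochs):
        for (x1, x2), target in data:
            error = target - predict((w1, w2, bias), x1, x2)
            w1 += lr * error * x1
            w2 += lr * error * x2
            bias += lr * error
    return w1, w2, bias


AND_DATA: List[Example] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
```

Before training, `predict((0.0, 0.0, 0.0), 1, 1)` returns 0, which is wrong. After `weights = train_perceptron(AND_DATA)`, the learned weights reproduce `rule_based` on all four inputs, even though nobody wrote the “and” condition into them.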

“There’s been a huge influx of data, with everyone feeding sound, text and imagery over social media, which has accelerated our ability to try and find ways to process and understand it,” says Ian Hughes, analyst at 451 Research. “Traditional processing and analytics are too slow since they don’t scale, so research has been pushed into AI as a way of dealing with data.”

In turn, AI data crunching has homed in on specific areas rather than blanket analysis.

IBM’s cognitive computer system Watson, for example, has combined its Alchemy Language APIs with a speech-to-text platform to create a tool that lets video owners analyse their video, forming IBM Cloud Video. The tool can scan social media in real time to monitor reactions to live-streamed events.

“AI used to be relegated to only the fastest supercomputers, but recent advances in software and the use of GPUs to process the algorithms mean that the cost of AI assistance is no longer a barrier to entry,” says Paul Turner, VP of Enterprise Product Management at Telestream. “AI offers the promise of aiding in many facets of the business. You can certainly imagine that systems will be able to analyse the actual content for metadata gathering (facial recognition is one part of this, but other object detection could be just as useful). Given that metadata is key to automated workflows, this could vastly expand our capability to ‘mine’ content for other purposes.”

Nvidia, which has a history of leveraging computing power for interesting purposes, describes its TensorRT product as a “high performance neural network inference engine for production deployment of deep learning applications”.

It is aimed at delivering super-fast inference with significantly reduced latency, as demanded by real-time services such as streaming video categorisation in the cloud, or object detection and segmentation on embedded and automotive platforms. “With TensorRT developers can focus on developing novel AI-powered applications rather than performance tuning for inference deployment,” says Nvidia.

Blurring the boundaries

AI should not be confused with intelligent creation, yet even here the edges are being blurred. Editing software such as Magisto, which has 80 million users, takes raw video shot on a GoPro or smartphone and automates the process of editing and packaging it with a narrative timeline, tonal grade and background music. It is aimed at consumers, and even at marketers facing huge demands on their time and too much video to process and publish online.

For live production, there is AutomaticTV, devised by Spanish facilities provider MediaPro as a cost-effective alternative to a full outside broadcast (OB) and in use at Barcelona FC to live stream training sessions. If you imagine a soccer match as a series of pixels, the system is essentially instructing an algorithm to distinguish between green pixels for the pitch, white for the ball, black for the referee and a collection of coloured pixels for the players. Since soccer is fairly formulaic in presentation, the system can follow those pixels based on certain rules. Handball, roller hockey and basketball have also been trialled.
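The colour-based approach described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not MediaPro’s implementation: the RGB thresholds and function names are hypothetical, and a real system must also cope with shadows, kit colours that clash with the ball or referee, and camera motion. The sketch labels each pixel by colour, then applies one simple rule (follow the centre of the white “ball” pixels) to decide where a virtual camera should point.

```python
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255
Frame = List[List[Pixel]]     # rows of pixels


def classify_pixel(pixel: Pixel) -> str:
    """Label one pixel using hypothetical colour thresholds."""
    r, g, b = pixel
    if r > 200 and g > 200 and b > 200:
        return "ball"     # near-white
    if r < 50 and g < 50 and b < 50:
        return "referee"  # near-black kit
    if g > 100 and g > r + 40 and g > b + 40:
        return "pitch"    # green clearly dominates
    return "player"       # any other colour


def ball_position(frame: Frame) -> Optional[Tuple[float, float]]:
    """Return the (row, column) centroid of 'ball' pixels, or None if absent.

    A virtual camera could simply be centred on this point each frame.
    """
    coords = [
        (y, x)
        for y, row in enumerate(frame)
        for x, pixel in enumerate(row)
        if classify_pixel(pixel) == "ball"
    ]
    if not coords:
        return None
    ys, xs = zip(*coords)
    return (sum(ys) / len(ys), sum(xs) / len(xs))
```

For a three-row, four-column frame whose only white pixels sit at row 1, columns 2 and 3, `ball_position` returns `(1.0, 2.5)`. A frame with no white pixels returns `None`, and a real system would then need a fallback rule, such as holding the previous camera position.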

The Lumberjack AI system has already helped create 69 ten-minute episodes of the semi-scripted kids’ series Klassen for Danish channel STV, and it is hoped that a documentary assembled by the system will be presented to the SMPTE-backed Hollywood Professional Association (HPA) by 2018.

Many questions are thrown up by this advance. Is the accidental juxtaposition of sound and image to create something new solely a function of human intelligence, or can a machine be trained to produce content that is not formulaic? Can an AI recognise, in a mass of images, that the nuanced facial expression in an observational documentary is the heartbeat of a story?

As for scripted shows, Sunspring, a short film shown at this year’s Sundance film festival, was scripted by a computer program. IBM’s Watson was used to sort and recommend a dozen clips from the full-length horror feature Morgan for a craft editor to compose into a trailer. And at the Cannes Lions festival this year, a pop promo produced by Saatchi & Saatchi was scripted and directed entirely by AI. Even the casting was done by a program that examined electroencephalogram (EEG) brain data from actors and matched them to the emotions it had detected in the song and its singer.

Canadian data-analysis company Greenlight Essentials has launched a Kickstarter campaign to fund the first feature film co-written by an artificial intelligence.

These latter examples could be dismissed as novelties, were it not for the thread linking the rapid rise of AI across all media. We want machines to take care of the neurologically impossible task of processing the volume of data we create and receive each day. The utopian view is that if a machine can take this off our hands, humans can find more time to create.

The dystopian? One word: Skynet.
