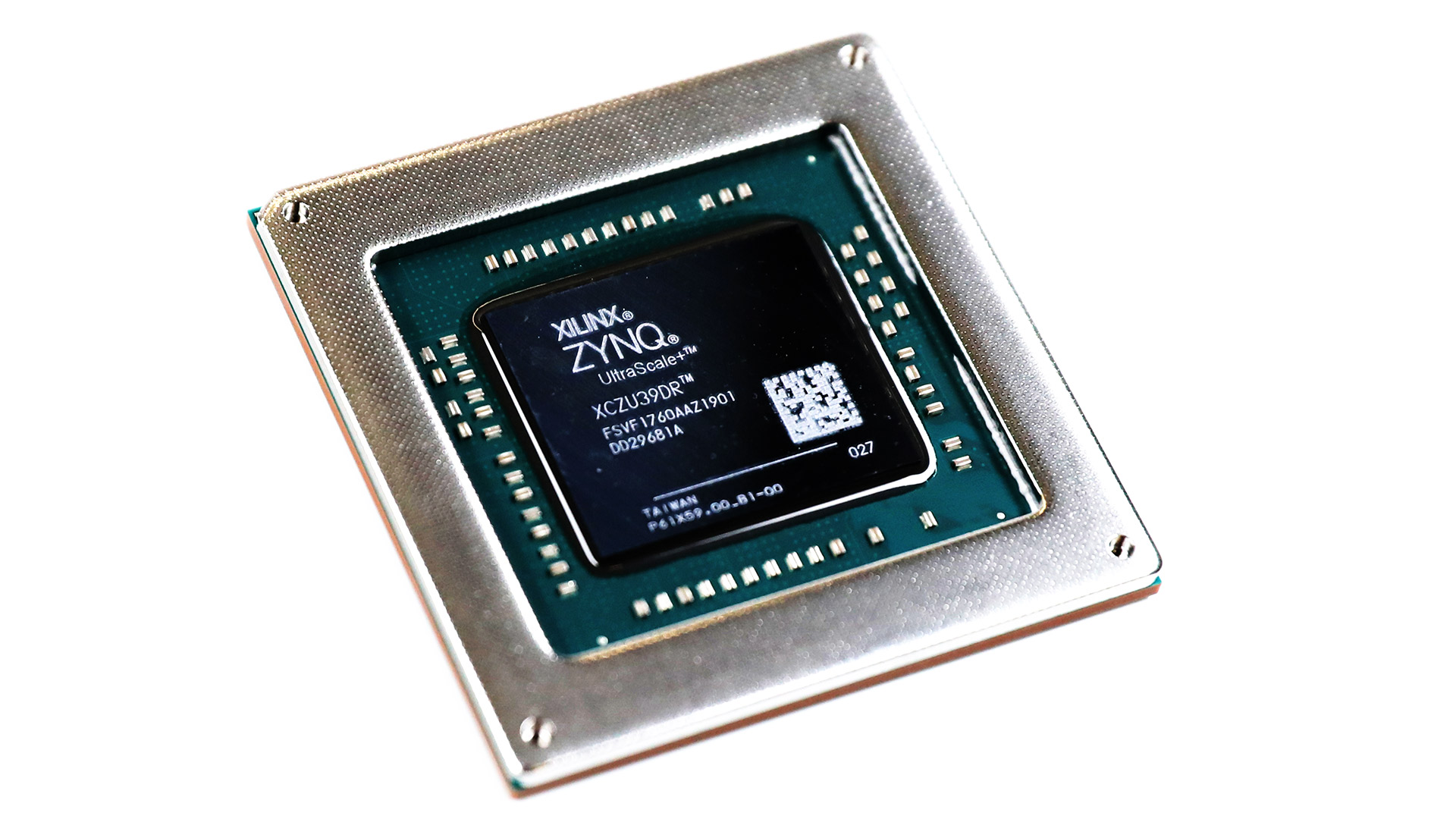

XILINX FPGA

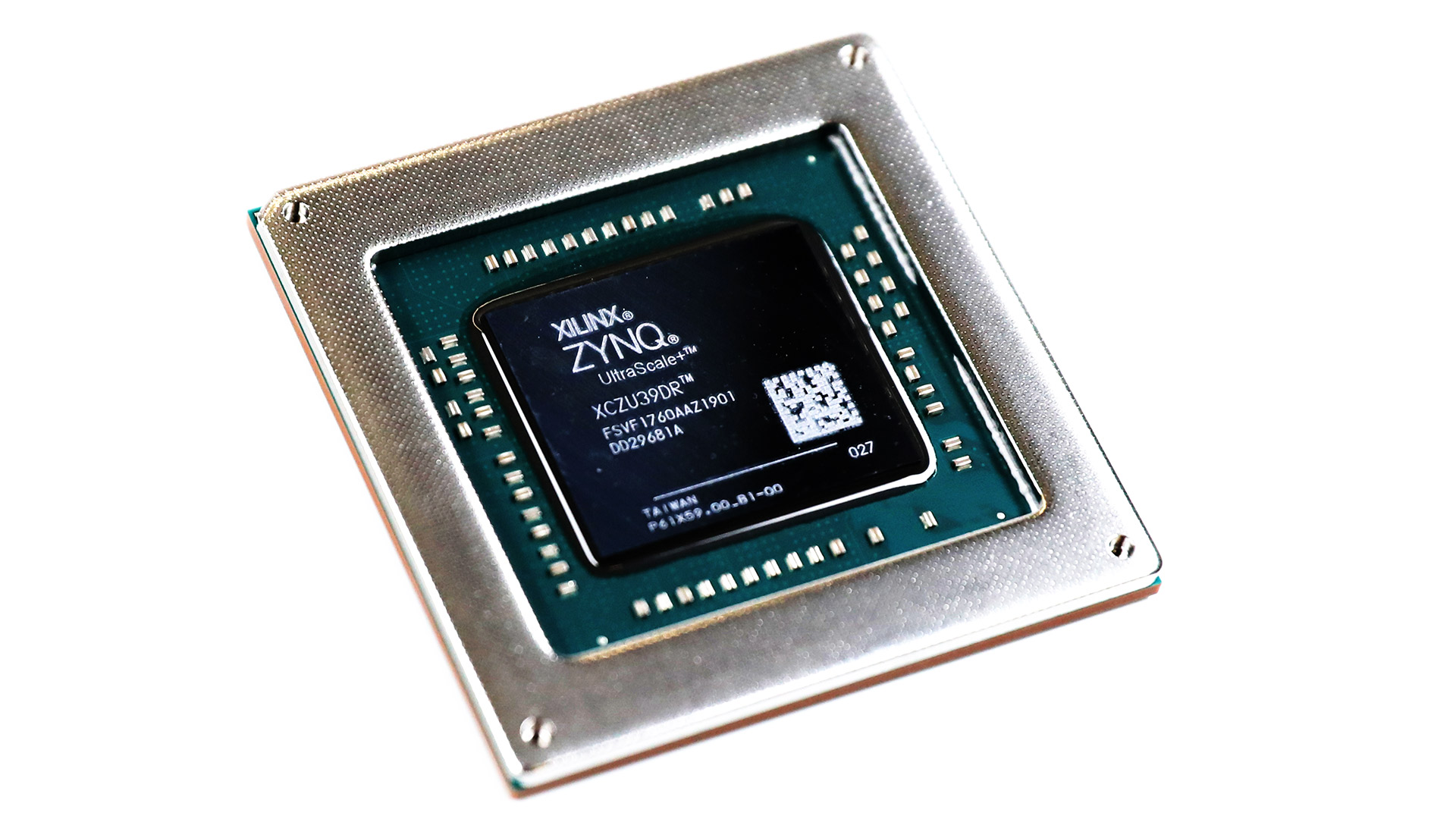

XILINX FPGA

FPGAs are like miracle chips. They're fast and reprogrammable, and they extend the lifespan of products that contain them.

Read about this in more depth in an earlier RedShark Article about FPGAs.

FPGAs have been around for a while. When Atomos brought out its first Ninja recorder, it had an FPGA at its core. These reprogrammable chips are ideal for repetitive heavy-duty processing like video compression and de-compression.

I think it's likely that we're going to see FPGA-type logic built into motherboards and even phones. This has already started, with Apple's new Mac Pro featuring an "Afterburner", which we think is an FPGA.

FPGAs have some remarkable and extremely beneficial properties. They're fast, and they're reprogrammable, in an importantly different way to conventional processors. It's this difference that makes them so powerful.

Traditional microprocessors are programmable in this sense: they can execute software programs that have been written in a way that is compatible with their architecture. This means that software algorithms have to be translated or specially written to use the fixed set of instructions that the processor provides. It's the way that most chips (even ARM chips, which have fewer instructions but typically execute them faster) have worked for decades. Fitting the algorithm to a processor makes the resulting code run more slowly than, well, what, exactly?

Slower than an FPGA, in fact.

So what is it that's so special about FPGAs?

It's that FPGAs work at a lower level than conventional processors. In fact, they're not really processors as they come out of the box. You can almost think of them as a Lego set: a huge collection of largely similar building blocks that you can turn into a machine with a set of instructions. So, whereas with a CPU, you might tell an onboard multiplier to work on two numbers to give you a result, with an FPGA, you have to use your building blocks to make a multiplier before you even start to feed in the numbers you want multiplied.
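To make the Lego analogy concrete, here is a minimal Python sketch of a multiplier assembled from nothing but simple logic gates. It is only an illustration of the idea: real FPGA designs are written in hardware description languages rather than Python, and the function names and the 4-bit width used here are assumptions chosen for the example.

```python
# Illustrative only: a software model of "building a multiplier out of blocks".
# The gate and helper names below are hypothetical, not from any FPGA toolchain.

def AND(a, b):
    return a & b

def OR(a, b):
    return a | b

def XOR(a, b):
    return a ^ b

def full_adder(a, b, carry_in):
    """Add three single bits using only gates; return (sum_bit, carry_out)."""
    partial = XOR(a, b)
    sum_bit = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return sum_bit, carry_out

def to_bits(value, width):
    """Least-significant bit first."""
    return [(value >> i) & 1 for i in range(width)]

def from_bits(bits):
    return sum(bit << i for i, bit in enumerate(bits))

def ripple_add(x_bits, y_bits):
    """Chain full adders together to add two equal-width bit lists."""
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        sum_bit, carry = full_adder(a, b, carry)
        result.append(sum_bit)
    return result  # the output is wide enough that the last carry is always 0 here

def multiply(x, y, width=4):
    """Shift-and-add multiplier built from AND gates and full adders."""
    out_width = 2 * width
    x_bits = to_bits(x, width)
    y_bits = to_bits(y, width)
    accumulator = [0] * out_width
    for shift, y_bit in enumerate(y_bits):
        # Partial product: every bit of x ANDed with one bit of y, shifted left.
        partial = [0] * shift + [AND(x_bit, y_bit) for x_bit in x_bits]
        partial += [0] * (out_width - len(partial))
        accumulator = ripple_add(accumulator, partial)
    return from_bits(accumulator)

print(multiply(6, 7))
```

On a CPU, all of this is hidden behind a single multiply instruction. On an FPGA, something like this structure has to be laid out in the chip's fabric first, but once it is there it can be sized and arranged to suit the job in hand.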

I know that sounds like it could be slower, and it is, when you have to write the FPGA code in the first place. But there are three things that give FPGAs an almost unbeatable advantage over CPUs:

A bespoke processor

The first is that you can essentially have a bespoke processor designed to run your algorithm optimally. This is a really massive advantage. You're almost never going to find a general-purpose processor (i.e. a CPU) that fits your needs exactly. There's always going to be a compromise, which normally means that more steps are necessary to perform a task. Using well-designed code on a fast FPGA can mean performance that is tens of times better than on a CPU.

There are downsides - there have to be, or we'd have stopped using CPUs a long time ago. FPGAs are best when they are used for specific tasks. These tasks can be quite complicated (heavy-duty signal processing, or encoding video into a compression format, for example), but FPGAs wouldn't be good for running spreadsheets or even an operating system (although some FPGAs do have CPU co-processors that allow for single-chip computers that can run a display and storage at the same time as heavy-duty FPGA-type stuff).

Reprogrammability

Here's the almost miraculous bonus of using FPGAs: they're reprogrammable. With a single FPGA, you can have it perform radically different functions each time it boots up. Essentially you can have an infinite number of custom processors on a single chip. In the past (and in the present, as far as we know) manufacturers who used smaller, cheaper FPGAs to limit the cost of their products would reboot the FPGA and load up different code if certain functions were selected.
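One way to picture how the same hardware can become a different machine at each boot is a lookup table: a tiny block whose behaviour is determined entirely by the data loaded into it. Many real FPGAs are built around blocks of this kind, but the sketch below is a simplified assumption for illustration, and the class and constant names are hypothetical.

```python
# Illustrative only: one "building block" whose function depends on loaded data.

class LookupTable:
    """A two-input logic block defined entirely by a four-entry truth table."""

    def __init__(self, truth_table):
        self.load(truth_table)

    def load(self, truth_table):
        """'Reprogram' the block by replacing its truth table."""
        if len(truth_table) != 4:
            raise ValueError("a two-input lookup table needs exactly four entries")
        self.truth_table = list(truth_table)

    def __call__(self, a, b):
        # The two input bits select one of the four stored output bits.
        return self.truth_table[(a << 1) | b]

# Hypothetical configurations: outputs for inputs (0,0), (0,1), (1,0), (1,1).
AND_CONFIG = [0, 0, 0, 1]
XOR_CONFIG = [0, 1, 1, 0]

block = LookupTable(AND_CONFIG)
as_and = [block(a, b) for a in (0, 1) for b in (0, 1)]

block.load(XOR_CONFIG)  # same block, new configuration
as_xor = [block(a, b) for a in (0, 1) for b in (0, 1)]
```

Nothing about the block itself changes between the two runs; only the loaded configuration does. Scale that idea up to a whole chip full of configurable blocks and connections, and loading a different configuration at boot gives you a different custom processor.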

Upgradability

Closely related to the point above, if a product has an FPGA at its core, then it's simple to add functionality over the life of the product with FPGA upgrades. These can be quite dramatic, like supporting a new codec.

FPGAs are relatively expensive devices. They're too pricey for most consumer products. For the mass market, there's an alternative: ASICs. An ASIC (Application-Specific Integrated Circuit) can behave like an FPGA in almost every respect, except reprogrammability. ASICs are vastly cheaper than FPGAs per chip, but will only ever do one thing. Another drawback with ASICs is that they're extremely expensive to set up initially. They're often prototyped with an FPGA, to make sure that the design works. It can cost millions of dollars to make an ASIC, but once it's all set up, individual chips can cost pennies.
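The trade-off between a big up-front cost and a tiny per-chip cost can be sketched as a simple break-even calculation. All of the figures below are made-up placeholders chosen only to show the arithmetic; they are not real prices for any FPGA or ASIC.

```python
import math

def break_even_units(asic_setup_cost, asic_unit_cost, fpga_unit_cost):
    """Smallest production volume at which the ASIC route is cheaper overall."""
    saving_per_unit = fpga_unit_cost - asic_unit_cost
    if saving_per_unit <= 0:
        raise ValueError("the ASIC must be cheaper per unit for a break-even to exist")
    # Below this volume the FPGA route costs less in total; above it, the ASIC wins.
    return math.floor(asic_setup_cost / saving_per_unit) + 1

# Hypothetical figures, in dollars.
ASIC_SETUP_COST = 2_000_000   # one-off design and tooling cost (assumed)
ASIC_UNIT_COST = 0.50         # cost per chip once in production (assumed)
FPGA_UNIT_COST = 50.00        # cost per FPGA, with no set-up fee (assumed)

units = break_even_units(ASIC_SETUP_COST, ASIC_UNIT_COST, FPGA_UNIT_COST)
print(f"With these assumed figures, the ASIC pays off from {units} units")
```

With placeholder numbers like these, the ASIC only makes sense for products that sell in the tens of thousands, which is why lower-volume professional equipment tends to stay with FPGAs.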

If you're considering buying an expensive product - a camera for example - it might be worth asking the manufacturer how much of the device's functionality is based around FPGAs. This will give you a pretty good indication of the lifespan of the camera, and the extent to which the manufacturer will be able to upgrade it in future months and years.
