When does a lens not have to look like a lens? When it's a metalens!

A new lens developed at Harvard University could revolutionise how smartphone cameras are produced.

Lenses haven't fundamentally changed in more than 3000 years. The first written references date back to 424 BC when Aristophanes mentions a “burning-glass” in his play The Clouds. The Nimrud Lens dates back at least another 500 years before that and consists of a piece of rock crystal which has been ground (incredibly coarsely, by modern standards) to create curved surfaces. The result is an extremely crude lens with a focal length of about 120mm which was possibly used as a magnifier or, as Aristophanes later mentions, to start fires.

With all due respect to the tireless work of the likes of Zeiss, Cooke and Schneider, things haven't changed much since. It's been known for some time that if you make a hologram of a lens, it will work as a lens, but it'll also have all the same problems as the original lens. And even if we create the hologram mathematically, it'll still have all the multicoloured patterns of a hologram.

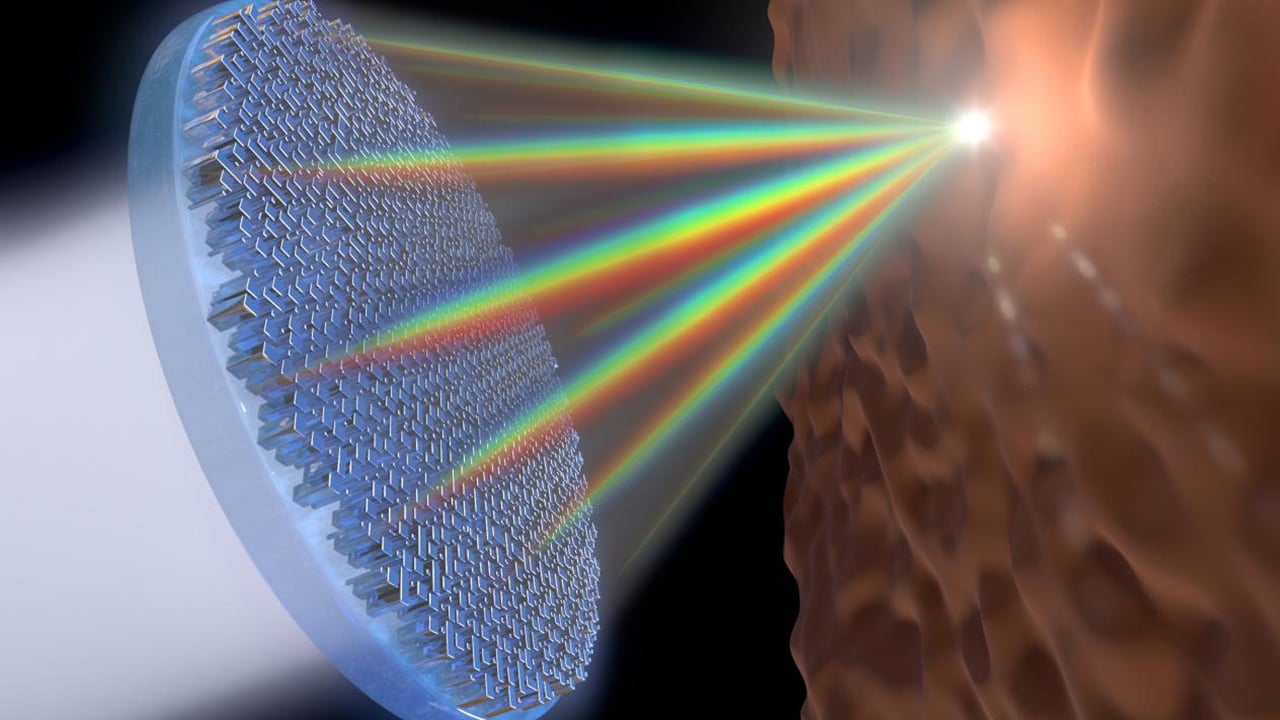

This fascinating animation shows the behaviour of light passing through the metalens

What's also been known for a long time is that firing light through small slits can create very odd effects. This can be demonstrated on the desktop: cut two narrow parallel slits in a piece of black card a short distance apart, put a light source on one side and a screen on the other. We'd instinctively expect to see two parallel bars of light on the screen, but if the light is something close to a point source, what we get is rather different: a series of alternating dark and bright bars. This is a very basic example of diffraction and interference, and it demonstrates that we can bend and redirect light without bits of glass.
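For anyone who wants to put numbers on the card-and-torch experiment, here is a minimal sketch in Python. It assumes two ideal, very narrow slits and the usual small-angle approximation, and the wavelength, slit separation and screen distance are illustrative values we've picked, not figures from the Harvard work.

```python
import math

def fringe_spacing(wavelength_m, slit_separation_m, screen_distance_m):
    """Distance between neighbouring bright bars (small-angle approximation)."""
    return wavelength_m * screen_distance_m / slit_separation_m

def relative_intensity(x_m, wavelength_m, slit_separation_m, screen_distance_m):
    """Brightness from 0 to 1 at position x on the screen, for two ideal narrow slits."""
    phase = math.pi * slit_separation_m * x_m / (wavelength_m * screen_distance_m)
    return math.cos(phase) ** 2

# Illustrative values (assumptions): green light, slits 0.25 mm apart, screen 1 m away.
WAVELENGTH = 550e-9
SEPARATION = 0.25e-3
DISTANCE = 1.0

spacing = fringe_spacing(WAVELENGTH, SEPARATION, DISTANCE)
print(f"Bright bars repeat every {spacing * 1000:.2f} mm")

# Step across the screen in half-fringe increments: bright, dark, bright, dark...
for step in range(5):
    x = step * spacing / 2
    level = relative_intensity(x, WAVELENGTH, SEPARATION, DISTANCE)
    print(f"x = {x * 1000:.2f} mm  brightness = {level:.2f}")
```

The brightness swings between full and zero as we move across the screen, which is the pattern of bars described above. Real slits have a finite width, which dims the bars towards the edges; the sketch ignores that.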

Scanning electron micrograph of the nanoscopic structures on the surface of the lens. The scale bar is 2mm. They're made of titanium dioxide, which is reasonably easy to work with.

To date, almost all practical lenses (pinholes being the principal exception) have involved bits of glass. We should be clear that what we're about to discuss is very much in the laboratory (in fact it has been for a while) and even if the technology makes it into a product, what's mainly being talked about is cell phone cameras because the lens assembly is a principal controlling dimension of phone size. Still, the new tech is an interesting development if only because it offers benefits beyond sheer physical compactness.

Developed by a group from the Paulson School of Engineering and Applied Sciences at Harvard, the new technology is referred to by them as a “metalens”. It involves an essentially flat surface coated with very tiny physical patterns which, through clever mathematics, are arranged to behave as a lens. As we've said, this is not absolutely the first time the idea has been talked about. What's really new here is that it's capable of working in visible light, and that it handles a wide enough range of frequencies to cover the whole visual spectrum (so it will work on both red and blue light simultaneously).
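To give a flavour of the sort of mathematics involved, here is a rough Python sketch of the textbook phase profile for an ideal flat lens: each point on the surface delays the light by just enough that everything arrives at the focal point in step. This is the standard idealised formula and is not necessarily how the Harvard team designed their lens; the function names, focal length, radii and wavelengths are all our own assumptions for illustration.

```python
import math

def required_phase(radius_m, focal_length_m, wavelength_m):
    """Phase shift (radians) an ideal flat lens must add at a given radius."""
    # Extra distance light from this radius travels to reach the focal point.
    path_difference = math.hypot(radius_m, focal_length_m) - focal_length_m
    return -2 * math.pi * path_difference / wavelength_m

def wrapped(phase):
    """Fold a phase into the range 0 to 2*pi, which is all a surface pattern needs to supply."""
    return phase % (2 * math.pi)

# Illustrative values (assumptions): 1 mm focal length, red and blue light.
FOCAL_LENGTH = 1e-3
RED = 650e-9
BLUE = 450e-9

for radius_um in (0, 25, 50, 75, 100):
    r = radius_um * 1e-6
    red = wrapped(required_phase(r, FOCAL_LENGTH, RED))
    blue = wrapped(required_phase(r, FOCAL_LENGTH, BLUE))
    print(f"r = {radius_um:3d} um  red: {red:.2f} rad  blue: {blue:.2f} rad")
```

The red and blue columns quickly drift apart, which hints at why a single flat pattern that works across the whole visible spectrum is the hard part.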

Without wanting to downplay their original work, the Harvard announcement is mainly a good excuse to think about the history of lenses and the sort of thing that may just be developing on a lab bench somewhere. Ultimately, metalenses like the ones proposed by the Harvard team may make phones and tablets smaller and lighter, but they may also do the same to things like AR and VR headsets. There's some hope that the new techniques will permit lower-aberration designs, which might also help with the noticeable aberration intrinsic to the rather extreme optical systems that VR headgear tends to require.

The word “metalens” might validly be applied to something like a light-field array, too, since it's a virtual lens made out of a sparse light-field of many lenses. Work on light-fields stands on the shoulders of literally millennia of development in conventional lenses. Whether the Harvard work will contribute to something better is probably a prospect for the next ten years or so.

