Bayer Basics

There are several 4K cameras out there. Remarkable though they are, what the manufacturers mean by “4K” needs some interpretation and explanation.

The practice of using a number followed by a K as shorthand for resolution originated in the world of film scanning. Achieving high-resolution scans frequently means using a line-array sensor: a CCD imaging sensor comprising a single strip of photosites per colour channel, with either the film being moved past the sensor (as in a Spirit datacine) or the sensor being moved past the film (as in Filmlight's Northlight scanner). Other scanners use area sensors, but can afford to sample slowly, at several frames per second, and to use sequential RGB exposures. The result of both approaches is that every pixel in the output image has carefully measured levels of red, green and blue, based on the information on the film.

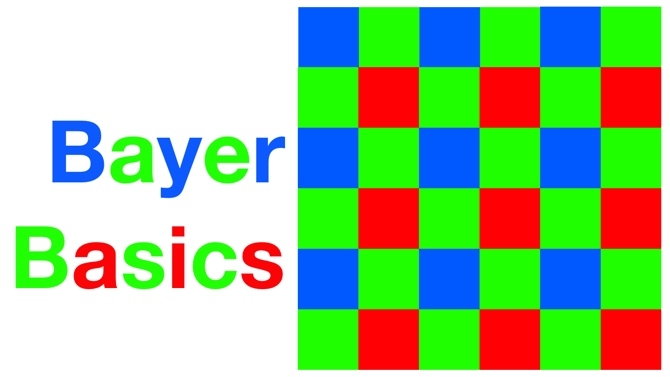

At about the same time film scanning was becoming really good, so was digital still photography. The desire to achieve a similar form factor to traditional SLRs, and to use their lenses, made it necessary to find a fairly direct replacement for film, with a single sensor capable of capturing a colour image. Low-cost colour video cameras had for a long time been producing workable results with a single sensor patterned with red, green and blue filters, with the missing values for each colour channel interpolated with varying degrees of sophistication. Such sensors in digital stills cameras were and are characterised by the familiar megapixel count: the number of millions of photosites on the sensor.
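To make the idea of interpolating the missing values concrete, here is a minimal Python sketch. It assumes an RGGB filter layout and uses the crudest possible reconstruction (averaging the nearest photosites of the wanted colour); the function names are invented for illustration, and no real camera is claimed to work this way.

```python
def bayer_channel(x, y):
    """Colour recorded by the photosite at (x, y), assuming an RGGB layout."""
    if y % 2 == 0:
        return "R" if x % 2 == 0 else "G"
    return "G" if x % 2 == 0 else "B"


def mosaic(image):
    """Simulate the sensor: keep only the one colour each photosite can see.

    image[y][x] is an (r, g, b) tuple; the result holds one value per site.
    """
    index = {"R": 0, "G": 1, "B": 2}
    return [[px[index[bayer_channel(x, y)]] for x, px in enumerate(row)]
            for y, row in enumerate(image)]


def demosaic_naive(raw):
    """Rebuild (r, g, b) per pixel; needs an image of at least 2x2 photosites.

    The measured channel is kept as-is. Each missing channel is the average
    of the photosites in the surrounding 3x3 window that did record it.
    """
    h, w = len(raw), len(raw[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            pixel = []
            for ch in "RGB":
                if bayer_channel(x, y) == ch:
                    pixel.append(raw[y][x])  # measured, not guessed
                    continue
                samples = [raw[ny][nx]
                           for ny in range(max(0, y - 1), min(h, y + 2))
                           for nx in range(max(0, x - 1), min(w, x + 2))
                           if bayer_channel(nx, ny) == ch]
                pixel.append(sum(samples) / len(samples))  # interpolated
            row.append(tuple(pixel))
        out.append(row)
    return out


# A flat field of one colour survives the round trip unchanged.
flat = [[(10, 20, 30)] * 4 for _ in range(4)]
restored = demosaic_naive(mosaic(flat))
```

A uniform patch reconstructs perfectly because every neighbour agrees. The guesswork only becomes visible once the picture contains detail on the scale of the filter pattern itself.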

Dalsa got there first

So far, so obvious, but the argument about resolution is based on a mixing of this terminology. Despite popular prejudice, it wasn't actually Red who started doing this. The first people I remember referring to a single-chip motion picture camera as being of 3K resolution were Dalsa, with their seminal Origin digital cinema camera. In my view, this was (and, if you aren't a cultural relativist, remains) a fundamental misapplication of terminology: a term customarily used to describe one process was applied to a completely dissimilar one, perhaps not coincidentally with an eye on the commercial advantage.

It's not my intention to in any way criticise the performance of the Dalsa Origin. It was, and even now would still be, an absolutely superb camera, easily the best available at the time in terms of sheer information in the image, and I'm sure the company would remain at the head of that particular pack had they chosen to continue in the field. But was it fair to describe it as 3K? I don't think so, no matter how clever their algorithms were. OK, fine, the algorithms can be clever. Mathematical procedures for recovering full RGB data from a Bayer filter array camera can be sophisticated, and can often achieve results on certain types of image that appear to defy the assumption that two thirds of the output image is interpolated, generated, or in some way made up.
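The "two thirds" figure is simple arithmetic, sketched below in Python. The sensor dimensions are hypothetical and chosen only for illustration; the ratio is the same for any single-sensor camera that records one colour per photosite and outputs three per pixel.

```python
from fractions import Fraction


def interpolated_fraction(width, height, channels=3):
    """Share of output values that were never directly measured."""
    measured = width * height           # one value per photosite
    output = width * height * channels  # red, green and blue per pixel
    return Fraction(output - measured, output)


# Hypothetical 4096 x 2160 photosite sensor.
print(interpolated_fraction(4096, 2160))  # 2/3
```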

Not all it seems

But made up it is, and trivial situations exist which can confound clever Bayer reconstruction schemes – in fact, they can especially confound the cleverest ones. Results on a test chart comprising black lines on a white background can be good, as there is really no colour information involved and the edges of the lines can be discerned using information from all of the photosites on the sensor. Black or white lines on a green background tend to do less well, as lines are differentiated from the background by differences visible mostly to the green-filtered photosites, which comprise only half of the sensor. Worse again are black lines on blue or red, since the photosites filtered for each of those colours make up only one-quarter of the sensor, and worst of all can be red on blue, which relies most heavily on both of the quarter-resolution colour channels simultaneously. Claims that single-chip cameras with sensors four thousand photosites wide can achieve 3.5K of resolution will in most cases turn out to have been based on black-and-white test charts, which isn't much help when executing, for instance, a green screen shot.
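The ranking of test charts above can be tabulated with a short Python sketch. It assumes idealised filters and fully saturated colours, so a channel either sees the difference between line and background or it doesn't; real filters overlap, which softens the picture somewhat. The names are invented for illustration.

```python
from fractions import Fraction

# Share of photosites behind each filter colour in a standard Bayer pattern.
DENSITY = {"R": Fraction(1, 4), "G": Fraction(1, 2), "B": Fraction(1, 4)}

BLACK, WHITE = (0, 0, 0), (1, 1, 1)
RED, GREEN, BLUE = (1, 0, 0), (0, 1, 0), (0, 0, 1)


def channels_with_contrast(line_rgb, background_rgb):
    """Channels that can tell line from background, with their photosite share."""
    return {ch: DENSITY[ch]
            for ch, line, bg in zip("RGB", line_rgb, background_rgb)
            if line != bg}


charts = [
    ("black on white", BLACK, WHITE),
    ("black on green", BLACK, GREEN),
    ("black on blue", BLACK, BLUE),
    ("red on blue", RED, BLUE),
]
for name, line, background in charts:
    print(name, channels_with_contrast(line, background))
```

Black on white is visible to every photosite, black on green only to the half that are green-filtered, and black on blue only to a quarter. Red on blue has no densely sampled channel to lean on at all: the edge exists only in the two quarter-resolution channels.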

Mathematics to the rescue

Of course, as manufacturers like Red will tell you, there are mathematical techniques for avoiding the worst of these problems, and they're far from dealbreakers. These techniques are invariably considered proprietary (which really means secret), however, and it is therefore difficult to get an objective idea of the ways in which a camera might misbehave in unusual situations. My biggest objection to the terminology, though, is not about imaging performance. Cameras like the Canon C300 and Arri Alexa have superb performance based on these techniques (we haven't seen any images from Sony's F5 and F55 yet but it's reasonable to expect these to be very good as well). The problem, really, is that this switch in terminology could be said to muddy the water, however unintentionally, and that is not ideal in an industry which valiantly fought off almost-good-enough electronic replacements for film for at least a decade longer than it absolutely had to.
