Computer tape isn't like this anymore

There's a certain vintage of sci-fi movie which will, whenever it needs to indicate serious computing power, show us a room full of enormous tape decks, gently shuffling big reels of one-inch tape back and forth at the behest of a room-sized mainframe. It seems old-fashioned, but tape is still a modern, fast and extremely reliable medium. Phil Rhodes explains.

The last fifty years or so have seen both the birth and, more or less, the death of magnetic tape as a video format, but it persisted through the 90s, against all indications to the contrary, as a data format for people who valued the longevity and permanence of a high-capacity removable-media format.

Although there have been film and TV people with large amounts of data to back up since the early Cineon scanning and recording system came into existence in the early 90s, the principal users of tape drives through the early 2000s were probably the finance and banking sectors, with a keen regulatory and practical need not to lose things down the back of the sofa. So, until very recently, almost all tape systems were designed to perform backups, as opposed to operating as a ready-use storage device. As such, when people began to build tape archive systems for digital cinematography, there was no universally-recognised way of writing files to tape.

To understand the problem, we need to consider the difference between the raw storage represented by something like a hard disk, flash key or magnetic tape, and the arrangements which allow us to conveniently store and retrieve things as named files stored in folders. At its most basic level, any storage device exists as a collection of individually readable and writable areas in which data can be stored. In many cases, each area, called a sector, contains 512 bytes of information, or, to put it simply, 512 numbers between 0 and 255.

Addressing Sectors

Various schemes exist for addressing sectors, but the basic concept is that 512 numbers stored into a sector at a certain address may later be retrieved from that same address. This is how essentially all storage devices communicate with computers, and while this is agreeably straightforward, it doesn't give us the familiar structure of files and folders we're used to; it just gives us a huge field of numbers that can be stored and retrieved.
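To make the idea concrete, here is a minimal Python sketch of a sector-addressed device. It's a toy model rather than any real driver interface: the `SectorDevice` class and its method names are invented for illustration, and the "device" is just a block of memory.

```python
SECTOR_SIZE = 512  # bytes per sector, as in the text


class SectorDevice:
    """Toy model of raw storage: a flat run of fixed-size sectors (hypothetical)."""

    def __init__(self, sector_count: int) -> None:
        self.sector_count = sector_count
        self._data = bytearray(sector_count * SECTOR_SIZE)

    def write_sector(self, address: int, payload: bytes) -> None:
        """Store exactly one sector's worth of bytes at the given address."""
        if not 0 <= address < self.sector_count:
            raise IndexError("sector address out of range")
        if len(payload) != SECTOR_SIZE:
            raise ValueError("a sector holds exactly 512 bytes")
        start = address * SECTOR_SIZE
        self._data[start:start + SECTOR_SIZE] = payload

    def read_sector(self, address: int) -> bytes:
        """Return the 512 bytes previously stored at the given address."""
        if not 0 <= address < self.sector_count:
            raise IndexError("sector address out of range")
        start = address * SECTOR_SIZE
        return bytes(self._data[start:start + SECTOR_SIZE])


device = SectorDevice(sector_count=8)
payload = bytes(i % 256 for i in range(SECTOR_SIZE))  # 512 values, each 0..255
device.write_sector(3, payload)
```

Reading sector 3 back returns the same 512 values, and that is all the device knows how to do. Nothing here has a name, a size or a folder.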

The mechanism that's responsible for turning the raw storage sectors into the sort of thing we're used to using, with named files and folders, is called a filesystem. The first, simplest filesystems simply reserved some sectors to keep a list of which files were being stored, usually indicating the address of the first sector at which each file had been written, and the number of sectors used to store it. Developments to include hierarchical folder structures, user permissions, and other refinements to features and reliability bring us to the point we're at now, when these things are second nature.
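The simplest sort of filesystem described above can be sketched in a few lines of Python. This is an illustration under some loud assumptions: the `SimpleFilesystem` class is hypothetical, the directory lives in a Python dictionary rather than in reserved sectors, and I've added a byte length to each entry so that the padding in the last sector can be trimmed on reading.

```python
SECTOR_SIZE = 512


class SimpleFilesystem:
    """Toy filesystem: directory maps name -> (first sector, sector count, byte length)."""

    def __init__(self, sector_count: int) -> None:
        self.sectors = [bytes(SECTOR_SIZE)] * sector_count
        self.directory = {}  # a real filesystem keeps this in reserved sectors
        self.next_free = 0   # files are laid down one after another

    def write_file(self, name: str, data: bytes) -> None:
        count = max(1, -(-len(data) // SECTOR_SIZE))  # ceiling division
        if self.next_free + count > len(self.sectors):
            raise OSError("device full")
        for i in range(count):
            chunk = data[i * SECTOR_SIZE:(i + 1) * SECTOR_SIZE]
            # Pad the final, partly filled sector with zeros.
            self.sectors[self.next_free + i] = chunk.ljust(SECTOR_SIZE, b"\0")
        self.directory[name] = (self.next_free, count, len(data))
        self.next_free += count

    def read_file(self, name: str) -> bytes:
        first, count, length = self.directory[name]
        raw = b"".join(self.sectors[first:first + count])
        return raw[:length]  # drop the zero padding


fs = SimpleFilesystem(sector_count=16)
fs.write_file("a.txt", b"hello")
fs.write_file("b.bin", bytes(1000))
```

After those two writes, the directory records that `b.bin` starts at sector 1 and occupies two sectors, which is exactly the first-sector-plus-count bookkeeping the early filesystems used.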

The Problem with Tape

The problem with tape was that, for a long time, there was no standard filesystem. Companies providing backup solutions had been (and remain) free to write data to tape in any way they like, and by default have developed many things that could be called a filesystem. This is fine for the individual organisation which will perform backups and then recover information from those backups as necessary, generally using the same equipment. What it doesn't facilitate, at least not easily or directly, is programme interchange, the ability common to video tape formats to record a tape at one facility and then read it at another.

Early digital cinematography implementations used perhaps the earliest approach, presumably on the somewhat reasonable assumption that it would be the most widely compatible. This involves bundling up several files into a single file using TAR, a utility dating from the late 70s and designed to create Tape ARchives, and then simply writing them sequentially to the tape, sector by sector, with only minimal extra data to mark the start and end of archives. Efficiently retrieving information from a tape written in this fashion requires separately-supplied supporting information, a tarmap, indicating which files are where. This is a functional approach, albeit somewhat cumbersome, typically involving a lot of per-job intervention by skilled computer specialists. Even so, films were and probably still are made using equipment operating on this principle, and it's probably still the most prominent way of storing things on tape.
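The TAR-plus-map idea can be sketched with Python's standard `tarfile` module. The archive here is built in memory rather than on a tape, and the map format (a dictionary of name to data offset and size) is my own assumption for illustration; real tarmaps vary from vendor to vendor and the file names are made up.

```python
import io
import tarfile


def build_archive(files: dict[str, bytes]) -> bytes:
    """Bundle named byte strings into one uncompressed TAR stream."""
    buffer = io.BytesIO()
    # USTAR keeps the layout simple: one 512-byte header per member.
    with tarfile.open(fileobj=buffer, mode="w", format=tarfile.USTAR_FORMAT) as archive:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue()


def build_tarmap(archive_bytes: bytes) -> dict[str, tuple[int, int]]:
    """Map each member name to (byte offset of its data, size in bytes)."""
    with tarfile.open(fileobj=io.BytesIO(archive_bytes), mode="r:") as archive:
        return {m.name: (m.offset_data, m.size) for m in archive.getmembers()}


archive_bytes = build_archive(
    {"frame_0001.dpx": b"A" * 700, "frame_0002.dpx": b"B" * 300}
)
tarmap = build_tarmap(archive_bytes)

# With the map in hand, one file can be fetched without scanning the archive.
offset, size = tarmap["frame_0002.dpx"]
recovered = archive_bytes[offset:offset + size]
```

Without the map, finding the second frame means reading every header from the start of the archive, which on a tape means spooling through everything in front of it.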

Improvement in Tape

All of this would be irrelevant if it wasn't for the improvement in performance and capacity of tape formats in the late 90s and early 2000s which kept tape useful. For some time, tape had compared poorly to hard disks in terms of speed, and with enormous amounts of research and development, disks were catching up in capacity as well. Tape formats like AIT and SDLT fought back, although the problems of standardising a filesystem remained, even to the point of tapes of a single format being usable only on a specific vendor's equipment.

Recognising the interoperability problem, three major players in the storage industry – IBM, Hewlett-Packard and (what was then part of) Seagate – founded the LTO Consortium and developed its namesake tape format. LTO1, with a 100GB capacity, was released in 2000, although it wasn't until 2010 that a companion filesystem, LTFS, was announced (a demo was shown at NAB 2009). The combination of LTO, being (except for IBM's datacenter-specific offerings) the only data tape format around, plus LTFS, finally solves most of the problems of using data tape in anything other than a corporate backup environment.

The LTO Consortium

Before we dive into the technical side of playing around with LTFS on a real tape drive, it's worth taking a moment to consider what it took to get to this point, particularly around the topic of corporate cooperation. The LTO Consortium, which now comprises IBM, HP and Quantum after Seagate spun off their tape division and Quantum bought it, is made up of companies that compete fiercely in certain fields. It is hardly in the nature of this situation to encourage cooperation – in fact, it's easy to list technologies which have been terribly balkanized by the tendency to chase vendor lock-in and individual advantage. So, working together on this sort of thing, which brings benefits to both manufacturers and users, is to be applauded – frankly, it's to be given a standing ovation, given the appalling situation which existed for over a decade with regard to tape interoperability. Would that flash cards and their manufacturers went a similar route.

One of the very powerful things that this situation ensures is that, unlike, say, HDCAM-SR, which is only made by Sony, anyone can in theory make LTO media or devices. Right now, there are three manufacturers of LTO drives, and considerably more manufacturers of tape media. This makes LTO much less liable to the sort of problems experienced by SR users after the Japanese tsunami destroyed the single manufacturing plant capable of making the tape.

LTO at IBC

This article came about via a chance meeting with the LTO group at IBC, who agreed to send me an LTO drive to play with. No longer quite the cutting edge of technology, the HP StorageWorks Ultrium 3000 is an LTO5 drive, whereas the successor format LTO6, with twice the capacity and bandwidth, is now available. Being higher-end, server-room gear, though, these things don't suffer quite such abrupt end-of-line treatment as some types of computer hardware. I'm actually quite happy to be looking at LTO5 equipment which offers a reasonable amount of capability at a cost that's probably feasible for a DIT, small studio, or one-man animation operation. The drive in question (others are available) offers a raw capacity of 1.5 terabytes per tape, which it's capable of writing at 160 megabytes per second (though see below for more notes on this).

Manufacturers frequently quote numbers twice as big in both respects, since LTO includes a standardised lossless compression which will, on average data, achieve a 2:1 reduction in file size. While, as we discussed in my article on codecs, lossless compression that could work for video data does exist, the algorithm used for LTO is really intended to work on corporate data – documents and spreadsheets – and is unlikely to compress the sort of data seen in the film and TV industry, even if it's uncompressed. Already-compressed data, such as ProRes or DNxHD camera originals, are likely to be incompressible by more or less any algorithm. Happily, LTO is smart enough not to apply this compression to data it can't successfully compress, which would otherwise risk actually inflating the data.
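The difference between compressible and incompressible data is easy to demonstrate. In this rough Python sketch, `zlib` stands in for the drive's own compression scheme, which is a different algorithm implemented in hardware, and random bytes stand in for already-compressed camera files. The `store_block` function is a hypothetical illustration of the "don't compress what won't shrink" behaviour, not a description of how the drive actually decides.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Original size divided by compressed size; below 1.0 means the data grew."""
    return len(data) / len(zlib.compress(data))


def store_block(data: bytes) -> tuple[bool, bytes]:
    """Keep the compressed form only when it is actually smaller."""
    packed = zlib.compress(data)
    if len(packed) < len(data):
        return True, packed
    return False, data


rng = random.Random(0)  # fixed seed so the example is repeatable

# Highly repetitive text, loosely like a spreadsheet export.
office_like = b"quarter,region,revenue\n" * 4000

# Random bytes, standing in for already-compressed video.
noise_like = bytes(rng.getrandbits(8) for _ in range(100_000))

office_stored_compressed, _ = store_block(office_like)
noise_stored_compressed, _ = store_block(noise_like)
```

The repetitive text shrinks enormously, while the random block comes out of `zlib` slightly larger than it went in, so `store_block` keeps the original bytes instead.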

The Drive

The cost of the drive is around UK£1800, and tapes, in small quantities, are about £25 each. This means that having written 30 tapes, or 45TB of data, a user will be in profit over having written the same amount of data to hard disks. The economics of this are likely to be volatile, given the rate of change in both tape and disk technology, but right now it isn't impossible to justify LTO based on nothing more than the cost benefit. The real purpose of tape, though, is archival longevity: various sources quote LTO tape as being good for fifteen to thirty years, based on accelerated-ageing tests involving high temperature and humidity.
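The break-even sum is worth spelling out. The drive price, tape price and tape capacity below are the figures quoted above; the disk price is not quoted anywhere in this article, so the sketch works backwards from the 30-tape break-even point to the disk price that claim implies. The function names are my own.

```python
DRIVE_COST_GBP = 1800.0   # from the text
TAPE_COST_GBP = 25.0      # per tape, from the text
TAPE_CAPACITY_TB = 1.5    # LTO5 raw capacity, from the text


def tape_total_cost(tapes: int) -> float:
    """Drive plus media cost for a given number of tapes."""
    return DRIVE_COST_GBP + tapes * TAPE_COST_GBP


def implied_disk_price_per_tb(tapes: int) -> float:
    """Disk price per TB at which disk and tape cost the same for this many tapes."""
    return tape_total_cost(tapes) / (tapes * TAPE_CAPACITY_TB)


def break_even_tapes(disk_price_per_tb: float) -> float:
    """Number of tapes after which tape becomes the cheaper option."""
    saving_per_tape = disk_price_per_tb * TAPE_CAPACITY_TB - TAPE_COST_GBP
    if saving_per_tape <= 0:
        raise ValueError("tape never breaks even at this disk price")
    return DRIVE_COST_GBP / saving_per_tape


total_for_30 = tape_total_cost(30)                 # 2550.0
implied_price = implied_disk_price_per_tb(30)      # about 56.67 per TB
```

In other words, thirty tapes plus the drive come to £2550 for 45TB, so the break-even claim assumes disk storage at roughly £57 per terabyte. Feed a different disk price into `break_even_tapes` and the crossover point moves accordingly.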

While there will be no way to prove these figures until 2043, there really aren't that many other options – certainly experience shows that shelved hard disks don't last very well. People have, of course, proposed writing data to photochemical film as 2D barcodes, with proven archival capability, but then film only really lasts well if kept in rather more controlled conditions than the LTO tests assume, and the total cost of ownership might turn out to be difficult to stomach. Certainly LTO has been shown to be insurable, and in a practical sense sufficiently reliable, for the production of feature films, and that's probably what matters.

The Drive in Use

In use, the HP drive plus the LTFS software does exactly what it's supposed to do. The tape appears as a mounted drive and files can be copied to it and recovered. There is an engineering limitation in that deleting previously written files removes them from the directory listing but doesn't recover the space; this is a reasonable choice, given that the time taken to shuttle up and down the tape to fill in gaps – that is, to fragment files – with new data would be impractical. In general, the intrinsic issue tape will always have with seek time is easy to overlook if the thing is being used as intended – as a more convenient way to access a long-term storage device. Anyone thinking about it as a big removable disk will be disappointed by the minute-plus time taken to locate and retrieve a file, but that isn't really the idea. The idea is to dump a whole load of stuff onto it in one go, and retrieve it as required.
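The delete-without-reclaim behaviour falls out naturally from an append-only design. Here is a toy Python model of it; the `AppendOnlyTape` class is hypothetical and is not how LTFS is implemented internally, it just shows why the free space doesn't come back.

```python
class AppendOnlyTape:
    """Toy model: data is only ever appended; deleting just drops the index entry."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.write_position = 0   # next free byte on the tape
        self.index = {}           # name -> (offset, length)

    def write(self, name: str, length: int) -> None:
        if self.write_position + length > self.capacity:
            raise OSError("tape full")
        self.index[name] = (self.write_position, length)
        self.write_position += length

    def delete(self, name: str) -> None:
        del self.index[name]      # the bytes stay where they were written

    @property
    def free_space(self) -> int:
        return self.capacity - self.write_position


tape = AppendOnlyTape(capacity=1000)
tape.write("a", 400)
tape.write("b", 300)
tape.delete("a")
```

After the delete, `a` has gone from the listing but `free_space` is still 300, because new data can only go after the last thing written.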

Glitches?

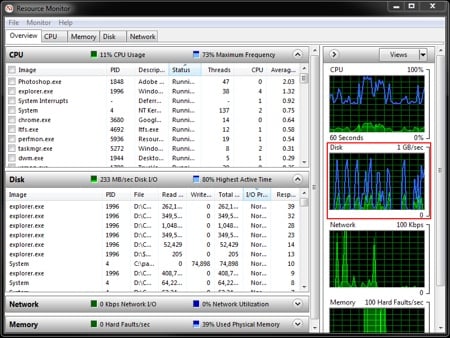

Glitches? Few. That 160MB/second figure represents the raw capability of the tape drive itself, and imposing a filesystem on top of it seems to blunt the performance quite significantly. The problem is that the LTFS software seems to write files a few at a time, speeding up and slowing down the tape between them regardless of whether another chunk of data is already waiting to be written. I never saw rates above 100MB/second with files of a few gigabytes each (about 1TB of ProRes QuickTime files from an on-camera recorder). With smaller files, the situation worsens, and as it is, LTFS is not really suitable for storing individual frames as the performance overhead becomes unreasonable. For small amounts of stuff, okay, merely having the option is nice, but nobody's going to be backing up entire movies this way. Wrapping the individual frames up in TARs of a few dozen frames apiece helps, but this does feel like a bit of a retrograde step under the circumstances, and as such LTFS doesn't currently solve the classic problem of storing uncompressed DPX (or DNG, etc) frames. Perhaps future updates to LTFS will implement better buffering, and give us more of the underlying tape format's native performance, especially with small files. It would seem to be a reasonable ambition.
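For anyone who does need to wrap frames up, the batching is simple enough to script. This Python sketch groups frames into TAR archives of a fixed size using the standard `tarfile` module. It works on in-memory data for the sake of a self-contained example; the batch size of 48, the archive naming and the frame names are all assumptions of mine, not recommendations from the LTO people.

```python
import io
import tarfile
from typing import Iterator


def batches(items: list[str], batch_size: int) -> Iterator[list[str]]:
    """Yield consecutive groups of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def pack_frames(frames: dict[str, bytes], batch_size: int = 48) -> dict[str, bytes]:
    """Return {archive name: TAR bytes}, each archive holding up to batch_size frames."""
    archives = {}
    names = sorted(frames)  # keep frames in sequence order
    for number, group in enumerate(batches(names, batch_size)):
        buffer = io.BytesIO()
        with tarfile.open(fileobj=buffer, mode="w") as archive:
            for name in group:
                info = tarfile.TarInfo(name=name)
                info.size = len(frames[name])
                archive.addfile(info, io.BytesIO(frames[name]))
        archives[f"frames_{number:04d}.tar"] = buffer.getvalue()
    return archives


# One hundred tiny stand-in "frames" become three archives: 48 + 48 + 4.
frames = {f"shot.{i:04d}.dpx": bytes([i % 256]) * 100 for i in range(100)}
archives = pack_frames(frames, batch_size=48)
```

The filesystem then sees three medium-sized files rather than a hundred small ones, which is the whole point of the exercise.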

The only other major gripe is that LTO hardware is currently available mainly as SAS-connected drives. SAS (Serial Attached SCSI) is a connection protocol for storage devices that's most often seen in higher-end server hardware, and while many video workstations may have SAS storage (or at least SATA drives using SAS controllers, which is supported), the likelihood of having a spare port is remote. Adding one requires a plug-in PCIe card worth perhaps UK£200, souring the deal for LTO. It is technically possible for LTO drives to be made with USB3 or, for Mac users, Thunderbolt connections, and I would hope to see this done if the people who make the drives want to sell them to small studios and individuals. The LTO Consortium reports no current intentions to pursue USB3, although Thunderbolt was mentioned.

Cool and Robust

Robustness is generally good. Powering off the drive while it was mounted as an LTFS device did require that the Windows drive letter be deleted and reassigned before it would be recognised again, although this is a straightforward workaround and is probably a side-effect of Windows' generally poor handling of this sort of situation in any case.

This particular LTO5 drive – in common with others I've come across – has a cooling fan which is really rather loud, even for an edit suite, although in a production situation you're never really going to be able to have a tape drive on a quiet set as the inevitable whirring sounds of tape movement are always going to be a problem. The LTO group tell me that this was recognised as a problem in LTO5, and version 6 drives are quieter; I'll try to get one to look at. And finally, I must direct a complaint against HP, whose driver download site for the LTFS and SAS host-bus adaptor software requires an awful lot of information during a signup procedure before the download is made available. The necessity of this seems pretty questionable when the software is only of use to someone with, in effect, a £1800 dongle, and I wouldn't want to be confronted with this in the middle of the sort of highly time-pressured problem solving that one occasionally encounters. Let's just have a file on a web server, eh? Still, hats off to them for providing the demo rig, and coming up with a SAS HBA. Once downloaded, the software was simplicity itself to install and configure.

LTO Overall

Anyway. In general, this whole thing seems pretty well thought out, even if people shooting single-frame formats will still need to TAR stuff up until we get some buffering sorted out. As such I'm happy to consider LTFS and the LTO5 tape format to be Gear We Like, honourable mention to LTO6 for being Gear We Like With Financial Reservations, and with a special mention for the LTO Consortium people. Three cheers for them, and for the rare situation of things having... well, come together rather nicely.

In the spirit of cooperation with which the current LTO+LTFS ecosystem was built, I should mention that this article was prepared with the assistance of Laura Loredo of HP's enterprise servers, storage and networking division, Craig Butler of data protection and retention systems at IBM, Yan Yan Liang, segment marketing manager at Quantum, tape solutions architect Chris Martin of HP, and John Moore, VP of devices engineering at Quantum.
