Autonomous long-distance drive

It's one thing to manually specify which points and objects a system should track, but quite another for a visual system to find its own points, and for your life to depend on it. In a strange and convoluted way, self-driving cars may point to the future of cameras.

With so many readers new to RedShark, we're replaying some of our more popular articles that you might have missed the first time around.

Motion tracking is essential in modern filmmaking. Unless you're shooting wildlife documentaries or a political drama, at some point you're going to want to insert computer-generated objects into a scene. Actually, if you're making documentaries about dinosaurs, you'll need to do that as well.

Motion Tracking

What motion tracking amounts to is, essentially, telling a machine to "watch" an object and understand it well enough to know when the view of it has changed. Only by doing this can you accurately place computer-generated 3D models into real-world scenes.
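Trackers vary a lot in practice, but one of the simplest ways to make a machine "watch" part of an image is template matching: cut out a small patch around a feature in one frame, then search the next frame, near where the patch was last seen, for the position where the pixels differ least. The sketch below is a minimal illustration, assuming grayscale frames stored as nested lists of numbers and the sum of squared differences (SSD) as the matching score. The function names are hypothetical, and real match-moving tools are far more robust to rotation, scale, blur and lighting changes.

```python
def ssd(frame, template, top, left):
    """Sum of squared differences between the template and the frame
    region whose top-left corner is at (top, left). Lower = better match."""
    total = 0
    for r, row in enumerate(template):
        for c, value in enumerate(row):
            diff = frame[top + r][left + c] - value
            total += diff * diff
    return total


def track_patch(frame, template, prev_top, prev_left, radius):
    """Search within `radius` pixels of the patch's previous position and
    return the (top, left) where the template matches best (lowest SSD)."""
    th, tw = len(template), len(template[0])
    fh, fw = len(frame), len(frame[0])
    best = None  # (score, top, left)
    for top in range(max(0, prev_top - radius), min(fh - th, prev_top + radius) + 1):
        for left in range(max(0, prev_left - radius), min(fw - tw, prev_left + radius) + 1):
            score = ssd(frame, template, top, left)
            if best is None or score < best[0]:
                best = (score, top, left)
    if best is None:
        raise ValueError("search window does not fit inside the frame")
    return best[1], best[2]


def make_frame(blob_top, blob_left, size=10):
    """Hypothetical synthetic frame: all zeros except a bright 2x2 blob."""
    frame = [[0] * size for _ in range(size)]
    for r in range(2):
        for c in range(2):
            frame[blob_top + r][blob_left + c] = 9
    return frame


frame1 = make_frame(3, 4)
frame2 = make_frame(4, 6)  # the blob has moved down 1 pixel and right 2
template = [row[3:7] for row in frame1[2:6]]  # 4x4 patch, top-left at (2, 3)
print(track_patch(frame2, template, 2, 3, radius=3))  # (3, 5)
```

The tracker reports that the patch moved from (2, 3) to (3, 5), which is exactly the blob's motion. Restricting the search to a small window around the last known position is what keeps this kind of tracking cheap enough to run frame after frame.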

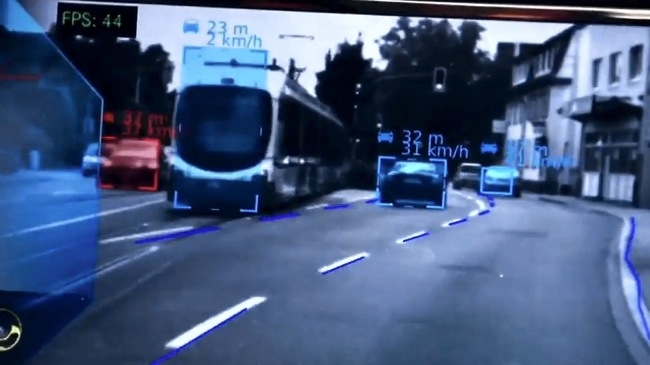

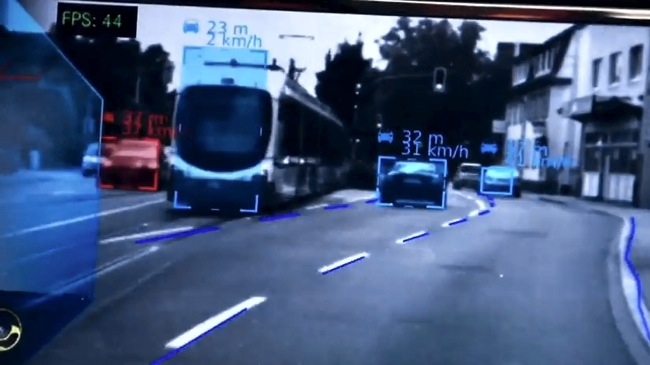

None of which has much to do with self-driving cars, except that these autonomous vehicles work by tracking objects in the real world automatically, and in real time.

Machine Vision

With an array of sensors, including a scanning laser rangefinder, the cars build a picture of the real-world landscape around them. It's a dramatic demonstration of how far we've progressed in the science of "machine vision".
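As a rough illustration of how a scanning rangefinder contributes to that picture: each reading is a distance measured along a known beam angle, so turning a sweep into points around the vehicle is a polar-to-Cartesian conversion. This is a minimal sketch assuming a single flat 2D sweep (x forward, y to the left, angles in radians). Many automotive lidars use several beams to build 3D point clouds instead, and `scan_to_points` and `closest_ahead` are hypothetical helpers, not any vendor's API.

```python
import math


def scan_to_points(ranges, angle_min, angle_step, max_range):
    """Convert a 2D laser sweep into (x, y) points in the sensor's frame.

    ranges[i] is the distance measured at angle angle_min + i * angle_step
    (0 = straight ahead, positive = to the left). Non-positive readings or
    readings beyond max_range are treated as "no return" and dropped.
    """
    points = []
    for i, r in enumerate(ranges):
        if r <= 0 or r > max_range:
            continue
        theta = angle_min + i * angle_step
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


def closest_ahead(points, half_width):
    """Distance to the nearest point inside a straight corridor of the
    given half-width in front of the sensor, or None if the corridor is clear."""
    ahead = [x for x, y in points if x > 0 and abs(y) <= half_width]
    return min(ahead) if ahead else None


# Three beams: 90 degrees to the right, straight ahead, 90 degrees to the left.
scan = [2.0, 10.0, 0.0]  # 0.0 = no return on the left-hand beam
points = scan_to_points(scan, -math.pi / 2, math.pi / 2, max_range=30.0)
print([(round(x, 3), round(y, 3)) for x, y in points])  # [(0.0, -2.0), (10.0, 0.0)]
print(closest_ahead(points, half_width=1.0))  # 10.0
```

The object 2 m to the side is part of the picture but is ignored by the corridor check, while the one 10 m straight ahead is what a planner would need to react to.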

Of course, a camera is a machine, but does it actually "see"? That's a pretty deep question with some complex answers that I'm not going to get into here. Recording an image is not the same as seeing. But what if the camera could "understand" more about the world? What if the camera could "know" about its surroundings?

This already happens in significant ways with cameras. Ever since the first autoexposure and autofocus systems, you could argue that cameras have known something about their environments, although that is probably using the word "know" wrongly.

Moving beyond pixels

But at some point, cameras will be able to build a complete picture of where they are and what's in front of the lens. This won't just include the image, but also a 3D model. This model can then be used to create metadata that will enhance the image in various ways, including depth. It might be a better way to do 3D.
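One way to picture how a 3D model could yield depth metadata: project each point of the model through an idealized pinhole camera and, for every pixel, keep the distance of the nearest point (a z-buffer). Because the depth is computed from the model rather than read off the sensor, the same model can be rendered at whatever resolution you ask for. This is a minimal sketch assuming camera-space points (x right, y down, z forward), square pixels and a horizontal field of view in degrees; `depth_map` is a hypothetical name.

```python
import math


def depth_map(points, width, height, fov_deg):
    """Project camera-space 3D points into a width x height depth map using
    a pinhole model. Each pixel keeps the nearest z seen (a z-buffer);
    pixels that no point lands on stay None."""
    # Focal length in pixels, derived from the horizontal field of view.
    f = (width / 2) / math.tan(math.radians(fov_deg) / 2)
    cx, cy = width / 2, height / 2  # principal point at the image centre
    depth = [[None] * width for _ in range(height)]
    for x, y, z in points:
        if z <= 0:
            continue  # behind the camera: not visible
        u = int(math.floor(f * x / z + cx))
        v = int(math.floor(f * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            if depth[v][u] is None or z < depth[v][u]:
                depth[v][u] = z
    return depth


# Two points on the optical axis (the nearer one wins) and one off to the right.
scene = [(0.0, 0.0, 5.0), (0.0, 0.0, 2.0), (2.0, 0.0, 4.0)]
small = depth_map(scene, 4, 4, fov_deg=90)
print(small[2])  # [None, None, 2.0, 4.0]
large = depth_map(scene, 8, 8, fov_deg=90)
print(large[4])  # [None, None, None, None, 2.0, None, 4.0, None]
```

The same three-point "model" produces a 4x4 and an 8x8 depth map without any resampling, which is the sense in which model-based metadata is not tied to one sensor resolution.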

And, ultimately, the metadata picture will be of a higher quality than the image from the sensor. At that point, we could dispense with conventional imaging completely and move to a format that is completely resolution and frame-rate independent.

Here's some amazing footage of Mercedes' self-driving car.

Mercedes' self-driving car footage
