Put on your best gravelly movie trailer voice and say "Imagine a world...", because in the future, that's how you'll make films: by imagining a world.

One of the best things about being a technology writer for decades is that you can look at your own predictions and see if they come true. I don't have a perfect record. I once said that "I don't think we'll ever see a time when you can fit an entire High Definition movie into the space of a sugar cube". Imagine how many movies you could fit today on a stack of microSD cards the height of a sugar cube. Probably thousands. That I was so wrong is perhaps a good illustration of what happens when we momentarily fail to take exponential progress into account. It's part of being human: exponential growth is unintuitive.

Another of my predictions is that one day we'll be able to feed a script into a computer and get a fully-made movie as the output. It sounds ridiculous, and it may still turn out to be, but a new AI demonstration has just made it seem about a million times more likely.

Let's unpack the idea of feeding a script into a laptop and getting a Hollywood blockbuster after a bit of rendering. How do we get past the gut feeling that it's impossible? We have to do it in steps.

For anyone who hasn't kept up with AI research, and I suspect that's most people, it can be hard to even explain the concept of AI. Seen through the lens of hysterical media reporting, you could be forgiven for thinking that AI is going to make us work for it, or at least take all our jobs, and that's if it doesn't run us over. It's easy to make headlines with a concept like AI that's so little understood. It's hard to understand for several reasons. First, we don't really have a good definition of intelligence. Second, AI research is pretty arcane. It's a completely new domain for most people, and there are very few frames of reference, so the techniques can seem opaque, and the results can be puzzling.

For me, there's a way to understand AI that doesn't mean a deep dive into complexity and abstract methodologies. It's about meaning and understanding. If a computer program can understand the meaning of something, and act on that meaning, then it is in some sense intelligent. The key to this is the concept of "understanding". It doesn't include running a database search to find everyone with two cars who lives in Leeds. There's no understanding there - just a process of matching search terms to database fields. But what if the query was "Draw me a picture of a giraffe driving a Lamborghini"? If the computer made you an image of a giraffe that was indeed driving a Lamborghini, then you'd have to conclude that it had not only understood the meaning of your question, but also knew enough about giraffes and sports cars to put the two together in a picture. You'd also have to say that the AI understood quite a bit about the world in general.

Three years ago I mentioned a website called ThisPersonDoesNotExist.com. If you go to the site, you'll see a photograph of a random person. Hit "refresh" and you'll see another person. What you might not realise is that the people you see have never been born. They don't exist. These photorealistic images are made by an AI using a technique called a Generative Adversarial Network, which, essentially, makes new images based on data - millions of photos of people in this case - that it's been trained on.

It's still an impressive demonstration, even after several years. It was - and still is - breathtaking that something as nuanced and delicate as a human face can be made by a computer program. And not just one, but an infinite number, each in only the time it takes to refresh a browser window.

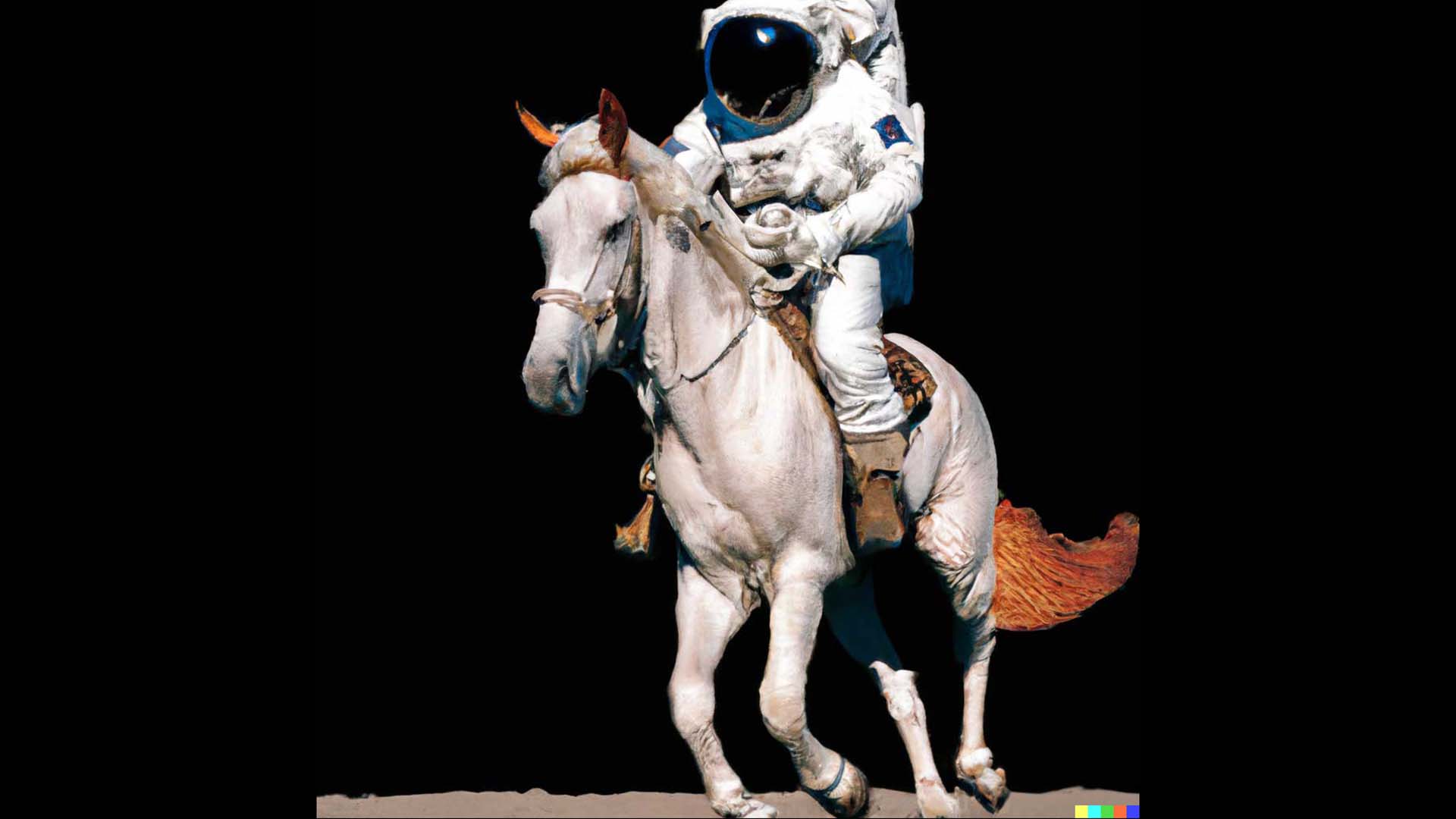

That was then, but now there's something even bigger. It's an AI system that will make a photorealistic image not just of a person, but of anything. You can literally ask it to give you an image of a giraffe driving a Lamborghini in the style of Van Gogh.

The new AI, Dall-e-2, is astonishingly accomplished. It takes us years ahead of where I expected us to be. You can literally describe any scene, any style, and you'll get original images that look like they've been "designed".

This image is entirely constructed by the Dall-e-2 AI system, from the specification, "Teddy bears working on new AI research underwater with 90s technology."

Now imagine where we'll be in ten years. According to Nvidia, the compound growth rate of AI technology is over 100% per year. That quickly leads to some very big numbers. According to the GPU company, we've already seen a million-fold increase in AI capability in the last decade, and it is, of course, accelerating.
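It's worth running the numbers, because they show just how unintuitive compounding is. The sketch below is my own arithmetic, not Nvidia's: the function names are made up for illustration, and it assumes a constant annual rate. It suggests that plain doubling every year (100% growth) gives roughly a thousand-fold gain in a decade, so a million-fold increase implies something closer to quadrupling every year.

```python
def growth_factor(annual_rate: float, years: int) -> float:
    """Total multiplier after compounding annual_rate for `years` years.

    annual_rate is a fraction, so 1.0 means 100% growth per year (doubling).
    """
    return (1.0 + annual_rate) ** years


def required_annual_rate(total_factor: float, years: int) -> float:
    """Constant annual rate needed to reach total_factor in `years` years."""
    return total_factor ** (1.0 / years) - 1.0


# Doubling every year for ten years.
doubling_decade = growth_factor(1.0, 10)

# Annual rate implied by a million-fold gain over ten years.
million_fold_rate = required_annual_rate(1_000_000, 10)

print(f"100% per year for 10 years: {doubling_decade:,.0f}x")
print(f"Rate needed for 1,000,000x in 10 years: {million_fold_rate:.0%} per year")
```

Either way the claim of "over 100% per year" holds; the gap between a thousand-fold and a million-fold simply shows how much the exact rate matters once it compounds.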

So it's no longer very hard to imagine a world where we can say to a computer "Read this script and let me know when you've finished making the movie". With another million-fold increase, and probably more, in the next ten years, it's looking likely that this will happen.

All of which poses so many questions it's hard to know where to start. If you made a film like this, who would be the artist? And while you might get a movie as a result of your script, would it be any good?

I think the answers to these questions are about artistic intent and control over the process. This element of "control" is what post production will become.

Finally, just because we will be able to do this in the future doesn't mean that we have to. I think what's more likely is that we will use elements of this to feed into future virtual production stages, to make it easier to create amazing backdrops. We'll still use real actors in the foreground because that's the essence of filmmaking.

I think we can conclude from all of this that the current paradigm of production and post production is beginning to look old. For all the massive innovations in camera and post production technology, it's time for a new look at how we make content, with AI at the centre of it. How we get from here to there will be a wild ride, but at least it's beginning to look like we now know what our destination is.

