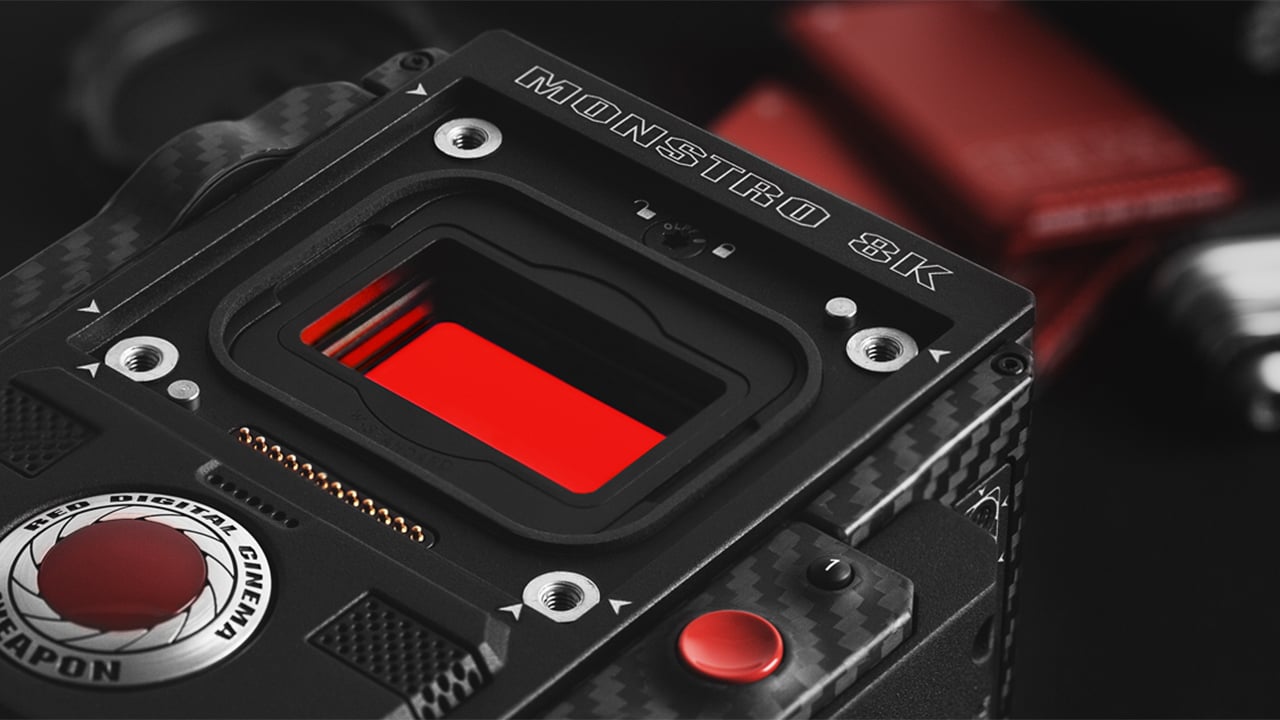

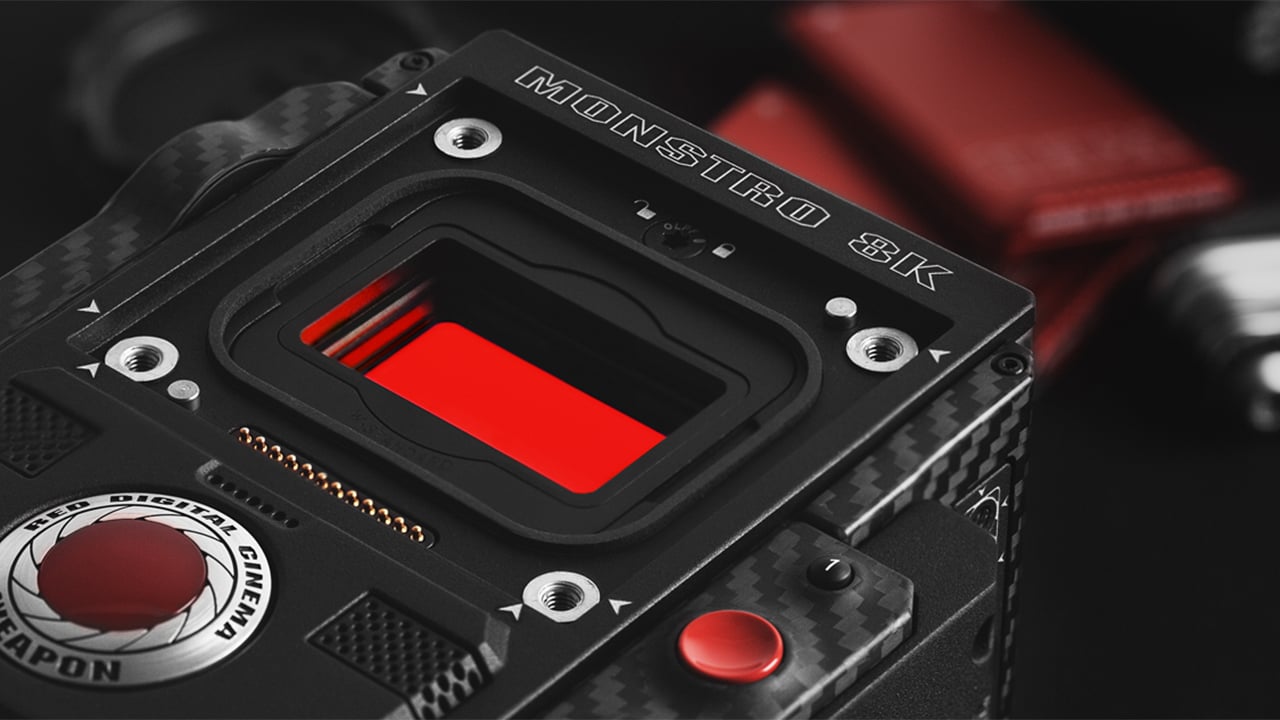

This is the story of how REDCODE was developed in the early days of RED, and how it made 4K video possible at a time when storage and bandwidth were extremely limited. It is also the story of how, today, when modern video surpasses film in every measurable way, REDCODE allows film-makers to work with 8K on a laptop computer.

I recently spent two days with Graeme Nattress, RED's "Problem Solver" by job title. In reality, he's the person responsible for RED's image quality. Graeme was the architect of REDCODE.

DS: When RED was born, over ten years ago, 4K video was a much bigger technical challenge than it is now. What were your priorities at the time?

GN: Back then, and still to an extent now, “compression” was a four-letter word. Compression was derided, and with good reason, based upon the experience many had with some of the compression technology of the time. But compression isn’t necessarily bad, and when viewed as an enabling technology, it’s quite wonderful. Because of the issues many had with compression, our obvious focus had to be image quality. The other side of REDCODE is practicality. REDCODE made recording 4K RAW in-camera practical. You didn’t need a tether cable to a refrigerator-sized stack of hard drives. It enabled a camera small enough to be used in a very wide range of shooting scenarios.

DS: Most people who understand RAW see it as essentially a monochrome signal that can be turned into colour with a knowledge of the Bayer filter pattern and some clever mathematics. But REDCODE records separate colour channels. How is it able to do this? Is it really true RAW?

GN: It’s truly RAW because the spatial relationship of the Bayer pattern is preserved. No colour processing (or other destructive processing) is done ahead of compression, so it’s a system whereby what you get out is what you put in. We put RAW Bayer data in, and RAW Bayer data comes out, ready to be demosaiced and colour processed just as if we’d fed the image processing the sensor data without the REDCODE compression.
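To make the "separate colour channels, but still RAW" point concrete, here is a minimal sketch of splitting a Bayer mosaic into per-colour planes and putting it back together. It assumes an RGGB layout, and the names `split_bayer` and `merge_bayer` are hypothetical; the interview does not describe how REDCODE actually arranges or compresses its channels, and no compression step is shown here.

```python
def split_bayer(mosaic):
    """Split a Bayer mosaic (list of rows) into four planes.

    Assumes an RGGB layout: R at (even row, even col), G1 at (even, odd),
    G2 at (odd, even), B at (odd, odd). This layout is an assumption.
    """
    return {
        "R":  [row[0::2] for row in mosaic[0::2]],
        "G1": [row[1::2] for row in mosaic[0::2]],
        "G2": [row[0::2] for row in mosaic[1::2]],
        "B":  [row[1::2] for row in mosaic[1::2]],
    }


def merge_bayer(planes):
    """Re-interleave the four planes into the original mosaic positions."""
    height = 2 * len(planes["R"])
    width = 2 * len(planes["R"][0])
    layout = (("R", "G1"), ("G2", "B"))  # which plane owns each 2x2 position
    mosaic = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            key = layout[y % 2][x % 2]
            mosaic[y][x] = planes[key][y // 2][x // 2]
    return mosaic


# A tiny 4x4 mosaic of made-up sensor values.
mosaic = [
    [10, 20, 11, 21],
    [30, 40, 31, 41],
    [12, 22, 13, 23],
    [32, 42, 33, 43],
]
planes = split_bayer(mosaic)
restored = merge_bayer(planes)
```

Because every sample keeps its photosite position, `restored` is identical to `mosaic`: the planes are a rearrangement of the sensor data, not a demosaiced colour image.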

DS: REDCODE eschews the more common discrete cosine transform (DCT) methods of encoding in favour of wavelet compression. How is this different, and why did you choose it?

GN: Everything in REDCODE was chosen for compression efficiency with respect to data rate and image quality. We use a wavelet transform, as such transforms scale efficiently with resolution and don’t break the image up into blocks. By essentially looking at the whole image rather than just a very small area of it, the encoding can better preserve image quality in a seamless manner: no ugly macroblock edges can ever appear. That also makes the image robust against issues like banding and posterization, which is critical, as RAW footage needs to stand up to colour correction not just through development, but through grading too.
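The difference from block-based coding can be sketched with the simplest wavelet, the Haar transform. This is an illustration only: the interview does not say which wavelet REDCODE uses, the function names are hypothetical, and the quantisation step that makes real compression lossy is left out. Each level below works across the entire row and hands a half-length average on to the next level, so there are no fixed block boundaries.

```python
def haar_forward(signal):
    """Multi-level integer Haar transform.

    `signal` is a list of ints whose length is a power of two.
    Returns the overall average and a list of detail bands,
    finest level first and coarsest level last.
    """
    approx = list(signal)
    details = []
    while len(approx) > 1:
        pairs = list(zip(approx[0::2], approx[1::2]))
        details.append([a - b for a, b in pairs])    # local differences
        approx = [(a + b) // 2 for a, b in pairs]    # half-resolution average
    return approx[0], details


def haar_inverse(average, details):
    """Exactly undo haar_forward, working from coarsest to finest level."""
    approx = [average]
    for level in reversed(details):
        rebuilt = []
        for s, d in zip(approx, level):
            a = s + (d + 1) // 2   # recover the first sample of the pair
            rebuilt.extend([a, a - d])
        approx = rebuilt
    return approx


# One row of made-up sample values with a single sharp edge in the middle.
row = [100, 102, 104, 106, 180, 182, 184, 186]
average, details = haar_forward(row)
roundtrip = haar_inverse(average, details)
```

For this row the detail bands are small everywhere except at the one coefficient that spans the edge, which is what makes such data cheap to encode, and `roundtrip` matches `row` exactly.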

DS: We've heard a lot about RED's IPP2 (the second and current version of the RED image processing pipeline). How does this relate to REDCODE?

GN: If we’d gone with a more traditional video compression approach, future improvements to image processing would be hard or impossible. Because REDCODE preserves the RAW sensor data, improvements can (and do) occur all along the image processing chain, from demosaic through colour image processing, and can include new monitor output formats such as Hybrid Log Gamma, which had not been invented when the first RED cameras were produced. IPP2 can take the original RAW image data from original RED One R3D files (REDCODE compressed files have the .R3D file extension) and work on them with improved algorithms to produce better looking developed images than ever before. This is helped by the robust metadata support in REDCODE, which gives new algorithms all the information they need about even the earliest files to produce accurate colorimetry and images.

DS: Have there been any issues scaling REDCODE both in terms of increasing framerates and higher resolutions like 8K?

GN: REDCODE compression is very scalable, so other than an SDK update for the new cameras that support these high resolutions, it all just works as it should for the end user. What we have found, though, is that REDCODE is more efficient at higher resolutions, so it’s working better at 8K than it did at 4K. For many use cases you can therefore compress the image a bit more, and the amount of data needed to record the footage doesn’t always have to quadruple in the move from 4K to 8K.
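The arithmetic behind that last point can be sketched as follows. All the numbers are illustrative assumptions rather than RED specifications: generic 4096x2160 and 8192x4320 frame sizes, 16 bits per photosite, 24 frames per second, and hypothetical compression ratios of 8:1 and 12:1.

```python
def data_rate_mb_per_s(width, height, bits_per_sample, fps, compression_ratio):
    """Approximate recorded data rate in megabytes per second.

    A Bayer sensor stores one sample per photosite, so the uncompressed
    frame size is simply width * height * bits_per_sample.
    """
    raw_bits_per_frame = width * height * bits_per_sample
    raw_bytes_per_second = raw_bits_per_frame * fps / 8
    return raw_bytes_per_second / compression_ratio / 1_000_000


# Hypothetical settings, chosen only to show the scaling.
rate_4k = data_rate_mb_per_s(4096, 2160, 16, 24, compression_ratio=8)
rate_8k_same_ratio = data_rate_mb_per_s(8192, 4320, 16, 24, compression_ratio=8)
rate_8k_higher_ratio = data_rate_mb_per_s(8192, 4320, 16, 24, compression_ratio=12)

growth_same_ratio = rate_8k_same_ratio / rate_4k      # four times the photosites
growth_higher_ratio = rate_8k_higher_ratio / rate_4k  # less than four times
```

At an unchanged ratio, 8K needs four times the data of 4K because it has four times the photosites. If the higher resolution tolerates 12:1 where 4K used 8:1, the growth drops to 4 × 8/12, or roughly 2.7 times.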

DS: What's your view on ProRes Raw?

GN: It’s very nice when other companies validate the approaches we’ve pioneered and championed to getting the best images out of digital cinema cameras. REDCODE has a 12-year track record of producing excellent images that stand up to the scrutiny of the big screen and have been proven to be robust through VFX and grading. It’s good to remember that part of the key to REDCODE’s success is how symbiotic it is with the camera systems that support it - camera, REDCODE, decode and image processing are all tied together by a single SDK and thus always work well together.
