With digital post production it is easy to forget the importance of physical filtration

Software effects can only get you so far. Phil Rhodes examines current attitudes towards the use of real filters, and how important they still are when it comes to getting the best picture.

There are actually people in the world who consider filters to be obsolete (yes, there really are; I've met them). This article is for those people.

OK, even the most hardline enthusiast of digital post processing will generally admit that certain effects — things like an ND grad or polariser — can't be faked up in post because they rely on information in high brightness regions of the image, or information about the polarisation of light, that the camera might not record. The thing is, isn't that true of many filters, or even of essentially all filters?

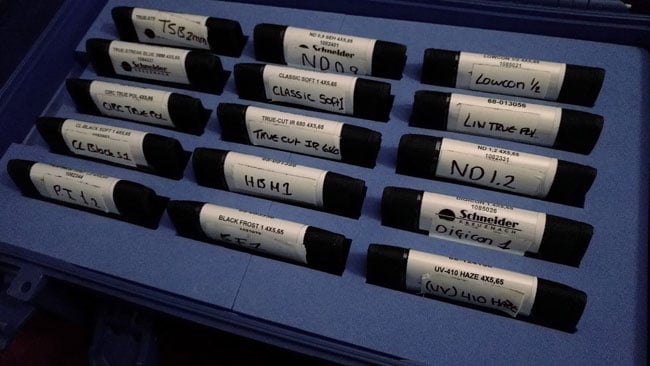

Yes, you can composite a blurred version of an image back over itself, and you can use various transfer modes when you do that. You can use more or less blur, and you can apply colour transformations (the curves filter, let's say) to the blurred layer before or after the blur is applied. Lots can be achieved, and it's quite feasible to create effects that look very much like an optical black or white frost, or even something vaguely like Schneider's well-liked Hollywood Black Magic filter, which has a complex look that seems, at least on a cursory examination of its effects, to use a combination of optical techniques.
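For readers who like to see the idea spelled out, the blur-and-composite approach can be sketched in a few lines of Python. This is only a minimal illustration on a single scanline of brightness values between 0.0 and 1.0; the function names, the box blur and the choice of a screen transfer mode are assumptions made for the example, not a description of how any particular grading tool does it.

```python
def box_blur(row, radius):
    """Blur a 1-D scanline with a moving average (the window shrinks at the edges)."""
    out = []
    for i in range(len(row)):
        lo = max(0, i - radius)
        hi = min(len(row), i + radius + 1)
        window = row[lo:hi]
        out.append(sum(window) / len(window))
    return out


def screen(base, layer, opacity=1.0):
    """Screen transfer mode: brightens the base, never darkens it."""
    return [1.0 - (1.0 - b) * (1.0 - opacity * l) for b, l in zip(base, layer)]


def digital_diffusion(row, radius=2, opacity=0.5):
    """Composite a blurred copy of the scanline back over itself."""
    return screen(row, box_blur(row, radius), opacity)


# A dim scene with one pixel already at the recorded maximum of 1.0.
scanline = [0.1, 0.1, 0.1, 1.0, 0.1, 0.1, 0.1]
result = digital_diffusion(scanline)
print([round(v, 3) for v in result])
```

The pixels around the bright one are lifted, which is the soft glow. Note that the effect has nothing to work with beyond the values the camera actually recorded.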

Why real filters are important

But can an optical filter like that ever be simulated accurately by a digital one? Well, no, not accurately. It's obvious to most people that an ND grad can't be simulated in post, but all filters are affected by the fact that the extreme highlights of an image are long lost by the time it gets to post production. Even the best modern cameras can't see the filament inside a tungsten lamp when the rest of the room is appropriately exposed. An optical filter, though, even a mist filter, can take that light and scatter it into other areas of the frame, depending on its design and the desires of the photographer.

Optical filters, then, effectively have unlimited dynamic range, because they act on the light before the sensor clips it. We can understand this by example: glowing light sources are sometimes a desirable result of mist or frost filtration and sometimes not, but they're something that can't easily be done in post without manually locating the centre of the light source. The filter can see the extreme highlight in the original scene and paint a glow around it, whereas a digital effect can only make all clipped highlights glow equally.
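As a rough illustration of that difference, here is a toy one-dimensional model in Python. Everything in it is an assumption made for the example: the `clip` and `scatter` helpers, the 2% scatter fraction and the brightness values are invented, and a real mist filter behaves in far more complex ways. The only point is the order of operations: the optical filter scatters light before the sensor clips it, while a post effect can only scatter what is left after clipping.

```python
def clip(row, white=1.0):
    """Sensor clipping: anything brighter than `white` records as `white`."""
    return [min(v, white) for v in row]


def scatter(row, fraction=0.02, radius=2):
    """Move `fraction` of each pixel's light evenly onto its neighbours."""
    out = [v * (1.0 - fraction) for v in row]
    for i, v in enumerate(row):
        neighbours = [j for j in range(i - radius, i + radius + 1)
                      if j != i and 0 <= j < len(row)]
        for j in neighbours:
            out[j] += v * fraction / len(neighbours)
    return out


# Scene brightness, where 1.0 is the sensor's clipping point.
# Both scenes are made up: a bare filament far above clip,
# and a white card only just above it.
filament = [0.05, 0.05, 50.0, 0.05, 0.05]
card = [0.05, 0.05, 1.2, 0.05, 0.05]

# Optical filter: scattering happens in front of the lens, before clipping.
optical_filament = clip(scatter(filament))
optical_card = clip(scatter(card))

# Post effect: the sensor clips first, then the glow is applied.
post_filament = clip(scatter(clip(filament)))
post_card = clip(scatter(clip(card)))

print("optical:", round(optical_filament[1], 3), "vs", round(optical_card[1], 3))
print("post:   ", round(post_filament[1], 3), "vs", round(post_card[1], 3))
```

In this toy model the two post results are identical, because both highlights were recorded as the same clipped white. The optical results differ: the filament throws a visibly brighter glow onto its neighbours than the card does.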

There's often no substitute for using a physical filter in front of the lens

The difference is particularly obvious when we're talking about filters specifically designed to create flare, such as Schneider's True-Streak range, which is designed to create horizontal light streaks somewhat similar to those produced by some anamorphic lenses. It is arguably a lenslet filter, inasmuch as the vertical features visible on its surface are designed to behave like cylindrical lenses, taking light from the scene and spreading it across (ideally) the widest possible horizontal angle. With a correctly configured camera and appropriate lights in the scene, the filter will react to the central highlight of a light source, creating a subtle and complex effect which reflects the shape and type of the light source. A post production effect can only take the clipped white areas of the image and smear them horizontally.

We're going to talk more about optical filtration because it's something that is often overlooked but hasn't changed substantially since the dawn of photography. When we struggle to make digital cameras look unique, or to introduce an appropriate style to a particular production, it's so easy to reach for the trackballs in Resolve that we often neglect the opportunities that exist in front of the lens.

Title image courtesy of Shutterstock.
