24GB and 10,496 cores? If you think the Nvidia RTX 3090 sounds like a pokey GPU, you'd be right. And it'll be rather handy for video production.

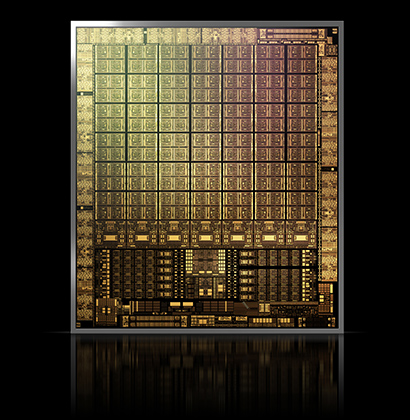

Nvidia's monster RTX 3090 GPU. Image: Nvidia.

It must have occurred to someone at Nvidia that the appetite for ever more absurdly-overpowered graphics cards is limited, at least in the context of video games. And without video games, there would be no GPUs in the modern sense, and people who need realtime colour grading would still be paying six-figure sums for racks full of custom hardware.

Nonetheless, Nvidia’s recently-announced RTX 3000 series, about which we know nothing more than the published specs, is unlikely to be seriously taxed by games written for the current generation of PlayStation and Xbox. Still, the company seems very aware of non-gaming applications, and it’s no surprise to find applications such as Blender’s Cycles raytracing engine, Chaos V-Ray and Autodesk Arnold on the comparative performance charts alongside the likes of Shadow of the Tomb Raider and Battlefield V.

Yes, if we wanted to run Wolfenstein: Youngblood in 8K at 240fps, an RTX 3090 might well be what we need, but the availability of a GPU in the US$1500 range with 24GB of RAM has quite a few implications for film and TV work. Previously, that much memory required spending a lot of money on something from the Quadro range, or the exceptional 2000-series Titan RTX.

It’s a welcome development because memory is much more of a limiting factor in applications like Resolve, where it’s used in huge quantities for things like high-quality noise reduction, than it is for video games, which tend to be designed around the current generation of games consoles (a PlayStation 4 has 8GB overall).
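To put rough numbers on why memory matters so much here, the sketch below estimates how many uncompressed frames fit in a given amount of GPU memory. It is a back-of-envelope illustration, not a description of how Resolve actually allocates memory: the 32-bit float RGBA frame format, the 25 per cent reserve for everything else, and the function names are all assumptions made for the example, and the card's "GB" is treated as GiB for simplicity.

```python
# Back-of-envelope estimate of how many uncompressed frames fit in GPU memory.
# Assumptions (not from the article): frames are held as 32-bit float RGBA,
# and a quarter of the memory is reserved for everything else.

def frame_bytes(width, height, channels=4, bytes_per_channel=4):
    """Size in bytes of one uncompressed frame."""
    return width * height * channels * bytes_per_channel


def frames_that_fit(vram_gib, width, height, reserve_fraction=0.25):
    """Whole frames that fit in the memory left after the reserve."""
    usable = vram_gib * 1024**3 * (1 - reserve_fraction)
    return int(usable // frame_bytes(width, height))


UHD = (3840, 2160)
EIGHT_K = (7680, 4320)

if __name__ == "__main__":
    for vram in (8, 10, 24):
        print(
            f"{vram}GB card: {frames_that_fit(vram, *UHD)} UHD frames, "
            f"{frames_that_fit(vram, *EIGHT_K)} 8K frames"
        )
```

Temporal noise reduction needs several neighbouring frames in memory at once, plus working buffers, so under these assumptions the headroom on a smaller card shrinks quickly as resolution goes up.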

A GPU titan

The very existence of a 90-suffixed model number in Nvidia’s RTX 3000 series is something of a new development. An RTX 2090 was mocked up as an April Fools’ joke in 2019, but this one’s real; it seems that the 3090 might effectively be the Titan X edition of the 3000 range of GPUs.

It’s often difficult to compare GPUs between generations, since the fundamentals of how they work can vary quite significantly. For instance, the RTX 3000 GPUs are reportedly capable of executing more instructions per clock, per core, than their predecessors, although that sort of thing tends to vary greatly by workload and can only really be assessed under practical tests.

What we do know is that the RTX 3090 pushes the core count into five figures. A really impressive workstation might have a sixteen-core processor; the RTX 3090 has – deep breath – 10,496 cores. They’re individually simple, of course, but they have very high speed access to that 24GB of memory and are clocked at 1.7GHz. The lower core counts on the 3080 and 3070 actually allow (very, very slightly) higher clocks, but almost certainly not so we’d notice. Those cards offer up to 10GB and 8GB of RAM respectively, though the RAM is slightly slower on the 3070.
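For a sense of scale, the core count and clock quoted above can be turned into a theoretical peak throughput figure. The sketch below assumes each core completes one fused multiply-add per clock, counted as two floating-point operations, which is the usual convention for headline numbers; that assumption and the function name are ours rather than anything from the published specs, and real workloads will land well below the peak.

```python
# Theoretical peak single-precision throughput from core count and clock.
# Assumption: each core retires one fused multiply-add (2 FLOPs) per clock.

def peak_fp32_tflops(cores, clock_ghz, flops_per_core_per_clock=2):
    """Peak TFLOPS = cores x clock (GHz) x FLOPs per clock / 1000."""
    return cores * clock_ghz * flops_per_core_per_clock / 1000.0


if __name__ == "__main__":
    # Figures quoted in the article for the RTX 3090.
    print(f"RTX 3090: about {peak_fp32_tflops(10496, 1.7):.1f} TFLOPS peak")
```

With the article's figures that works out to a little under 36 TFLOPS on paper, which is only a ceiling; as noted above, practical tests are the only real measure.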

Conclusion

In all honesty, given what even cards of a couple of generations ago could do, even the lower-end devices from the 3000 range seem likely to be more than most people need. A 3070, on paper, is faster than the flagship 2080 Ti for a fraction of the price; it has more CUDA cores and is clocked faster.

Actually building a system around one of these monsters, or upgrading an existing workstation, will require a little attention to power and cooling. Power consumption is likely to push significantly over 300W on load, meaning that the GPU will be pulling more power than some entire PCs. It’s perhaps appropriate that the company codenames the 3000 series Ampere.

That power draw needs to be allowed for in terms of the power supply in the workstation and the amount of air movement, which is always something of a compromise when we might want to have the thing in the room with us while we’re making creative decisions. Still, anyone looking for a 2080 might well pick up a 3070 and enjoy the lower price and lower power consumption.

In any case, the very highest-end cards are probably significantly more than most people will ever need for grading, though for anyone rendering 3D graphics, Cycles will always eat as many cycles as anyone can throw at it. Perhaps the most spectacular realtime 3D applications for this sort of thing will emerge in the next console generation; in the meantime, anything that lets people go home to their families earlier after a long day with their fingers superglued to three trackballs is a good thing.

