The iPhone 12 Pro Max is Apple’s flagship model, boasting improved specs over the smaller iPhone 12 Pro. We look in depth at ProRAW, but what the new range really points to is possibly more profound than just camera specifications.

I’m going to say it out loud right from the start: if you have an iPhone later than an X, you probably don’t need the iPhone 12. Phones like the X are still incredibly capable devices with laptop-style processing power. And in some cases, thanks to technology like the Neural Engine, they can still do things that no laptop can, or indeed any mirrorless camera.

That said, my current iPhone's battery was on the wane, and given the amount of time I use it for typing emails and taking photos, particularly for reviews on RedShark, I deemed the upgrade to a larger screen worth it, not least because my phone was still worth a useful amount on trade-in to soften the blow. It's still not an easy decision to make, especially right now. But it is an integral part of my work life.

The iPhone 12, on paper at least, doesn’t match some rival flagship Android phones when it comes to outright camera specifications. At 12MP, the iPhone has what many people would consider to be a low resolution compared to the 40-108MP we are seeing elsewhere. The reality is that 12MP is a good resolution to have, considering that most phone photos will never be adorning a wall at A0 size, instead either going to social media, the web in general, or being shown to mates. With the iPhone, megapixel counts are a distraction, because the one thing that no other smartphone can currently match is Apple’s A series processing power.
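To put a rough number on the print-size point, here is a minimal Python sketch of the arithmetic. It assumes a 12MP frame of 4032 x 3024 pixels and a standard A0 sheet of 841 x 1189mm; the function name is purely illustrative.

```python
MM_PER_INCH = 25.4

def pixels_per_inch(pixels_long_edge: int, paper_long_edge_mm: float) -> float:
    """Pixel density when the image's long edge spans the paper's long edge."""
    return pixels_long_edge / (paper_long_edge_mm / MM_PER_INCH)

# Assumed figures: a 12MP phone frame is 4032 x 3024 px; A0 is 841 x 1189 mm.
a0_ppi = pixels_per_inch(4032, 1189)
print(f"12MP on A0: about {a0_ppi:.0f} ppi")
```

That works out at roughly 86 pixels per inch, which only starts to look thin if you stand close to a poster-sized print. For a phone screen or a web page, 12MP is already more than gets displayed.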

A glance at the Geekbench Mobile scores shows Apple devices in the top 20 slots, without any other manufacturer even getting a look in. The iPhone 12 Pro Max scores a whopping 1585 on the single-core benchmark, while the fastest non-Apple device, the OnePlus 8, comes in at 887, which is still lower than the two-year-old XS, which scores around 1109.

Benchmarks are one thing, but as we know, real-world performance is quite often something different. However, these scores are still very telling, and yet they still don’t account for the dedicated specialist processing that's on offer, such as the 16-core Neural Engine. But before I get into what some of the new features of the iPhone 12 Pro Max mean for us, let me start at the beginning: opening the box.

iPhone 12 Pro Max build quality and first impressions

Taking the 12 Pro Max out of the box for the first time, I was struck by how it was less gargantuan than I was expecting. I have to admit that after I had ordered it I had wondered if I had made a mistake with the size. I went for the 12 Pro Max mainly because I do end up doing quite a bit of work for RedShark on my phone, and the larger screen helps hugely with that. I also use the phone a lot for review images, and so any improvements or added versatility to the camera would be useful to me.

The packaging is to be commended for its consideration for the environment, but it could be frustrating for some users. There are no shiny new headphones and there’s not even a charging cable, which in my case was annoying because my old one had just split. I ordered a third party one with a braided covering, so that should last longer than the OEM version. It’s situations like this that do call into question why the iPhone hasn’t moved to a TB3/USB-C style interface. I have a ton of those types of cables lying around doing nothing!

Build wise I feel that the 12 Pro Max is easy to hold due to slab sides rather than soap dish style curves. But I always find that most of how the bare phone feels to the hand is largely irrelevant because I don’t think I’ve ever seen anyone use a device like this without a case!

I always find it ironic that so much time is spent crooning over the amazing industrial design before blocking it from view forever inside an ugly outer casing. Yes, I could have had Apple’s new MagSafe clear case, but if you’ve ever seen how much crud accrues inside the average phone case you’ll quickly realise just how bad an idea a transparent case is.

That said, the design is beautiful. Given what I’ve just said about cases, though, it’s largely irrelevant, as is the fact that the phone’s three cameras jut out from the body. Once a case is on it, this ceases to be any sort of issue.

The new construction of the iPhone 12 series should theoretically mean that the need for a case is diminished. I have seen tests where the front screen for instance survived torture tests with barely a mark on it. Not to be repeated with my own phone for this article I might add. And since I like to trade in my old phones I want to keep this one in as good a condition as I can, so inside a case it goes.

Turn the phone on, and that screen becomes the all encompassing wow factor. It is impressive to behold. Yes, other phones on the market have huge screens, but this is my own first large screen smartphone so at least allow me a purely personal moment of being impressed!

The famous ‘notch’ still exists, and yes it is still annoying, although it’s difficult to see how Apple could ditch it altogether. The sensors and camera need to go somewhere.

Transferring the settings from my old iPhone to the new one, as always, went without a hitch, and aside from waiting for all the apps to download, it was totally seamless. Once this was done I did what any self respecting tech writer does, and I installed the latest beta of iOS. The primary reason I did this was that I wanted to try out the phone’s new ProRAW photo format, which was another factor in my purchase decision.

iPhone 12 Pro Max ProRAW - Using the camera app

Yet another factor in my decision process was the added lenses and photography flexibility over my previous phone. The wide angle lens would be very useful to me, as would the slightly longer throw of the 2.5x telephoto.

Some have questioned why there is only a 2.5x telephoto when some rival phone cameras now have much longer focal lengths. All I can suggest is that Apple hasn’t figured out a decent way to do an optical zoom lens yet. It could have made a 4x or 5x telephoto, but as a fixed focal length that would be too long to be used as a general purpose portrait lens. So the only option is for future phones to either have a fourth camera, or a genuine zoom, possibly a periscope style design.

I only ever take raw photos on my phone via an app like Halide or Firstlight, even if I’m taking general snaps, so my focus here will be on the camera behaviour in use, ProRAW images and editing.

ProRAW is only available on the iPhone 12 Pro models, or at least it will be once iOS 14.3 is released in full. It is not, as you would ordinarily expect, a proprietary format, but instead it is a widely readable DNG image. This means that any ProRAW photo that you take can be immediately edited in any software you have that takes DNG files. So what’s so special about it if it’s just a DNG file?

Well, ProRAW images aren’t just the raw sensor data. The DNG output from a ProRAW-enabled image goes through the same Neural Engine processing as normal iPhone images, such as Deep Fusion, which captures multiple bracketed frames in a fraction of a second, and Night mode, and the final raw file is then created from that. It gives you what the phone thinks is a good starting point for the image, but the information is there ready to edit like any other raw file rather than baked in. At least most of it is. You can't undo the noise reduction, for example, but as you'll see this is exceptional in its quality.

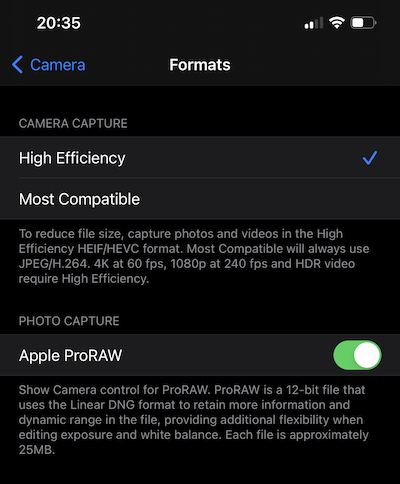

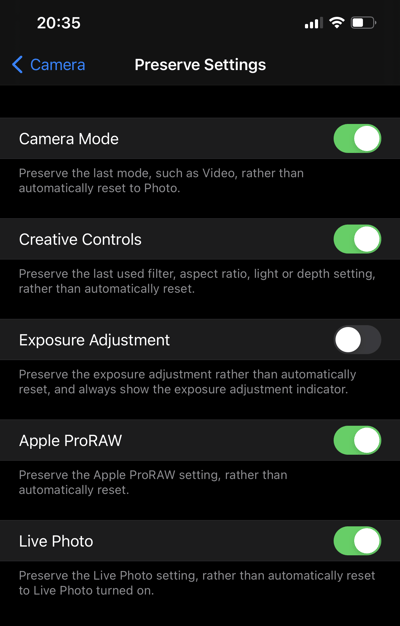

In the beta of iOS 14.3 ProRAW isn’t enabled by default. It has to be first switched on as an option within the camera app settings, and then you need to press on the “Raw” icon within the camera app itself. If you have turned on raw within the camera app and you leave and lock your phone, the option will have disengaged by the time you go back into the app. It turns out that there’s another setting within the app preferences in the “Preserve Settings” menu to specifically tell it to keep raw engaged rather than automatically reset to JPEG or HEIC.

Enabling the ProRAW setting in the camera app.

Making sure ProRAW stays enabled if you leave the camera app.

Autofocus

LiDAR is used to assist autofocus in low light, but in fact overall the camera now focusses incredibly quickly. I’ve noticed none of the hunting in and out that I’ve had on my previous phones. On the iPhone 12 Pro everything just snaps in extremely quickly. It also appears to lock on and track humans and animals much better than before, which should mean that it is much more reliable for capturing spontaneous moments and events.

Switching between the cameras is extremely quick and responsive. The telephoto lens is much improved from the older phones, allowing a far closer minimum focus distance than before, which enables almost macro style shots. I haven’t used the iPhone 11 Pro so it may well be that this ability was present there, but for iPhone users who are coming from devices prior to this, it is something else that might be useful as an added brownie point in the decision list.

The 120 degree ultra-wide angle lens is really useful, not just for landscapes but to create unique perspectives. There’s some loss of sharpness edge to edge, but its usefulness and the final effect are far more important here. What’s even better is that Night mode is now enabled for both this and the normal wide lens, making subject matter like astrophotography a very real possibility. Night mode itself is also compatible with the ProRAW output, which improves the already good astrophotography possibilities still further.

As I mentioned earlier, ProRAW images can be edited in any software which takes DNG files. Within Apple’s own Photos app that is included with the phone, you will initially see an image that the phone thinks is the best result. As you’d expect, what the phone thinks is the ideal result and what you or I think is a good result are quite often two different things. Primarily the phone always employs far too much detail enhancement.

As a result of this, when you first go into the Photos app to look at your lovely ProRAW image you’d be forgiven for thinking “Is that it?!” However, if you then click on “Edit” the app will then load the unenhanced ProRAW file for you to adjust. The difference between the two can be stark as seen by the two images below. Note that the edited raw file has much more natural and realistic skin tones.

A ProRAW image with the default processing giving a 'JPEG' type look.

A ProRAW image adjusted in Lightroom Mobile with DOF effect added in Focos.

The difference between raw and ProRAW

You might be left wondering what the real differences between raw and ProRAW images are. Below I have shown two examples. One is a raw file taken within Halide, and the other is a ProRAW file. Both were taken in almost total darkness, save for the moonlight. I’m afraid that since the UK is in lockdown at the time of writing, my images are not of the Rocky Mountains or the Utah desert monolith, so my car bonnet and driveway will have to suffice for this particular example. As you can see, the differences are, pardon the pun, night and day, particularly with reference to noise.

ProRAW Night mode image taken on the iPhone 12 Pro Max.

Raw image taken in Halide on the same phone.

Whilst it is possible for me to use the noise reduction in Lightroom to reduce its effects on the Halide image, it simply cannot do it anywhere near as accurately as the ProRAW image processing can. Once there’s fine detail involved, a common or garden standard raw image doesn’t stand a chance if it is full to the brim with noise. In the Halide image much of the detail has already been lost, never to be recovered.

Very low light image taken in Halide, handheld, no post processing.

The same angle taken seconds later using ProRAW, no post processing.

100% crop of the Halide image.

100% crop of the ProRAW image.

Quite how the ProRAW processing is producing such low noise images is open to speculation. I would put my bets on the image analysis looking at how the noise moves from image to image and looking for the constant detail. I could of course be totally wrong, but the way in which ProRAW reduces the noise levels without destroying the detail suggests some form of deep image analysis. Some have suggested that the ultra clean result looks too digital. If for some reason you want more noise you can always add it.
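Whatever Apple is actually doing, the basic idea of keeping the constant detail across several frames is easy to demonstrate. The Python sketch below is not Apple's method, just the textbook approach of averaging aligned frames: the scene stays the same in every frame while the random noise changes, so the noise partly cancels out. The simulated scene, the noise level and all function names are assumptions made up for this example.

```python
import random
import statistics

def capture_frame(scene, noise_sigma, rng):
    """Simulate one noisy exposure: true pixel value plus Gaussian sensor noise."""
    return [value + rng.gauss(0.0, noise_sigma) for value in scene]

def merge_frames(frames):
    """Average perfectly aligned frames pixel by pixel."""
    return [statistics.fmean(pixels) for pixels in zip(*frames)]

def rms_error(image, scene):
    """Root-mean-square difference between an image and the true scene."""
    return (sum((a - b) ** 2 for a, b in zip(image, scene)) / len(scene)) ** 0.5

rng = random.Random(42)                      # fixed seed so runs are repeatable
scene = [rng.uniform(0.0, 1.0) for _ in range(10_000)]   # made-up "true" scene

single = capture_frame(scene, 0.2, rng)
merged = merge_frames([capture_frame(scene, 0.2, rng) for _ in range(16)])

print(f"single frame error:  {rms_error(single, scene):.3f}")
print(f"16-frame merge error: {rms_error(merged, scene):.3f}")
```

With purely random noise, averaging N frames cuts the noise by roughly the square root of N, so 16 frames give about a fourfold improvement. A real pipeline also has to align handheld frames and cope with moving subjects, which is where the heavy processing comes in.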

The iPhone knows when it is mounted onto a still object such as a tripod. When it detects this it will automatically enable longer exposures, which you can tune manually. One thing to note: Night mode purportedly works with the telephoto lens, however it does appear that the phone switches back to the wide lens and then creates a ‘digital zoom’ of the image to match the angle of view of the telephoto. This is certainly odd behaviour given that the telephoto camera has image stabilisation. But it could be related to why Night mode in portrait mode uses the wide lens and not the usual telephoto one.

Taking a night portrait can produce some good results, although your subject will need to remain as still as possible and the output is not in ProRAW. The image below was taken in Night Portrait mode handheld and then had one of the Photos app’s preset looks applied.

The way ProRAW works in regard to using Deep Fusion is important to note when comparing the iPhone 12 Pro to other phones. The ability to use Night mode in a raw format that uses the Neural Engine and Deep Fusion processing to drastically reduce noise and keep detail gives the iPhone 12 Pro something that no other phone currently offers. It will be interesting to see how other manufacturers respond, because most phone camera comparisons only look at the highly processed, compressed, out of the box output, which is only really relevant to casual users. Once a feature like ProRAW is engaged, the game changes.

Lastly, Night mode and ProRAW also work on the selfie camera, enabling low-light reverse shots to be taken and then fully edited at a full 12MP. As I mentioned, ProRAW does not work with any of the Portrait modes, either on the front or rear cameras. All is not lost, though, since apps like Focos do an incredible job, sometimes better than the built in software, at creating shallow DOF photos from existing deep focus images.
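I don't know how Focos or Apple implement their effect, but the general principle of computational DOF can be sketched with the standard thin-lens formula: given a depth for each pixel, work out how large a blur disc a real lens would have produced at that distance. The Python below is a minimal illustration only. The depth samples and the simulated 50mm f/1.8 lens are assumed values, not the optics of any phone, and the function name is hypothetical.

```python
def blur_diameter_mm(subject_mm, focus_mm, focal_length_mm, f_number):
    """Thin-lens blur disc (circle of confusion) on the sensor for a point at subject_mm."""
    aperture_mm = focal_length_mm / f_number
    magnification = focal_length_mm / (focus_mm - focal_length_mm)
    return aperture_mm * magnification * abs(subject_mm - focus_mm) / subject_mm

# Hypothetical depth samples in millimetres, as a depth map might supply per pixel.
depths_mm = [1000, 2000, 4000, 8000]

# Simulate a 50mm f/1.8 lens focused at 2m (assumed values for illustration).
blur = [blur_diameter_mm(d, 2000, 50, 1.8) for d in depths_mm]

for depth, size in zip(depths_mm, blur):
    print(f"{depth / 1000:.0f}m -> blur disc {size:.2f}mm")
```

Anything at the focus distance gets no blur at all, and the blur grows the further a point sits in front of or behind it. The quality of the final picture then depends heavily on how accurate the depth map is, particularly around edges such as hair.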

The Google Pixel 4 has had an astrophotography mode for some time, producing some pretty amazing results, although they can take a while to process. On the iPhone 12 Pro Max, 30 second exposures were merged and made into an editable ProRAW photo around a second after the capture had completed.

The significance of LiDAR in iOS devices

One of the most significant additions to the iPhone 12 Pro is LiDAR. When I think back only a handful of years a basic LiDAR system for a drone could set you back around £10k and required cloud based analysis of your data to do the number crunching at a subscription price of £1k a year. Now we have LiDAR in a phone, and there may well be much more to this than for app developers simply to have an additional toy to play with.

I’ve been playing around with various LiDAR scanning apps, and with care they can produce some absolutely stunning results, with full 3D reproductions of the room you are in, or an object. It isn’t the most accurate LiDAR around, but this is on a phone, and it has repercussions on both this and future devices.

Now, the implementation of LiDAR on the iPhone could be a precursor to the rumoured Apple glasses, since augmented reality benefits hugely from it. Apple could be trialling the system on its current devices to iron out any issues and even build up apps that utilise LiDAR enhanced augmented reality that could maximise available software for the glasses once released.

The inclusion of LiDAR in both the mobile phones and potentially the rumoured glasses could mean big things for applications such as mapping. Apple already has 3D views of the most populated areas on its newly revamped Maps app, and if a large group of people opted in it would be possible to create extremely detailed street level 3D views on a crowd sourced basis.

All of that is conjecture, but I think it is quite likely that as the phones improve, so will the LiDAR accuracy. And in the long game it means Apple has a head start and it will likely play into a longer term strategy. LiDAR has a lot of possibilities yet untapped. More accurate LiDAR has potential for much, much better depth mapping and computational DOF effects, as well as object removal in photos and indeed video. You could, for example, take a photo with a person walking in front of it. The photo could be taken in an instant, but the LiDAR could run a bit longer, enabling full removal of the person from the image.

No, the LiDAR in the current phone isn’t accurate enough for this, as no doubt someone in the comments will point out. But that’s not the point. It’s the future possibilities we’re talking about here. And before someone tells me that LiDAR in a mobile device will never be accurate enough, at one point in the past I was told that LiDAR in a phone wouldn’t be possible, and now look where we are.

First conclusions

For photography on the move, the new iPhone 12 Pro and Pro Max are going to change things. Yes, I know that other phones such as the Pixel have some pretty paper busting specifications, as well as exceptionally good night modes of their own. But the clincher here is when the iPhone is using Night mode, ProRAW and Deep Fusion all together. Unlike the iPhone with ProRAW, rival phones do not create a raw file out of the final night mode computation. To do that you need to take each of the individual raw files (which the phones can be made to give you) and then process and merge them yourself.

By definition the use of ProRAW does include the application of Deep Fusion and the Neural Engine, but it is important to emphasise that this combination really does mean that, currently at least, the iPhone 12 Pro has an advantage over its rivals even if it can’t match them for telephoto or overall pixel count, particularly when it comes to the sheer speed at which it computes and puts everything together. This is the groundwork that has been laid, and once Apple does feel it is time to increase the number of pixels it will have an extremely solid computational photography foundation from which to build things.

The question then is whether you should upgrade from your current iPhone to the new one. From the perspective of the 12 Pro Max, frankly most people don’t need this device. The 12 Pro will give you an equal photographic experience save for sacrificing a small amount of telephoto. If you don't need telephoto then the standard iPhone 12 will more than suffice. The Pro Max has slightly better stabilisation, but in the grand scheme of things it probably won’t make a huge difference to you day-to-day. I mainly bought the Max over the standard sized Pro due to the amount of reading and typing I need to do, and for that a bigger screen is better.

If you already own an X or an XS, your main decider will be whether you really want ProRAW. If you are currently happy with the raw results from those cameras you can perhaps wait a little longer until next year. For phones older than the X, however, there will be a marked difference in quality and ability for you.

In the next part I’ll look at video capability.
