Replay: The next revolution in resolution is going to be less about physical representations of reality and more about how we perceive it ourselves.

Video resolution has been through a revolution in the last couple of decades. The difference between standard definition and 8K is about eighty-five times the number of pixels. And if that doesn't feel like enough, you can now buy a digital film camera for under $7000 that shoots in 12K. That's certainly enough Ks for most people.

But that's not the biggest resolution revolution. There's another one taking place right now, and surprisingly, it has very little to do with pixels.

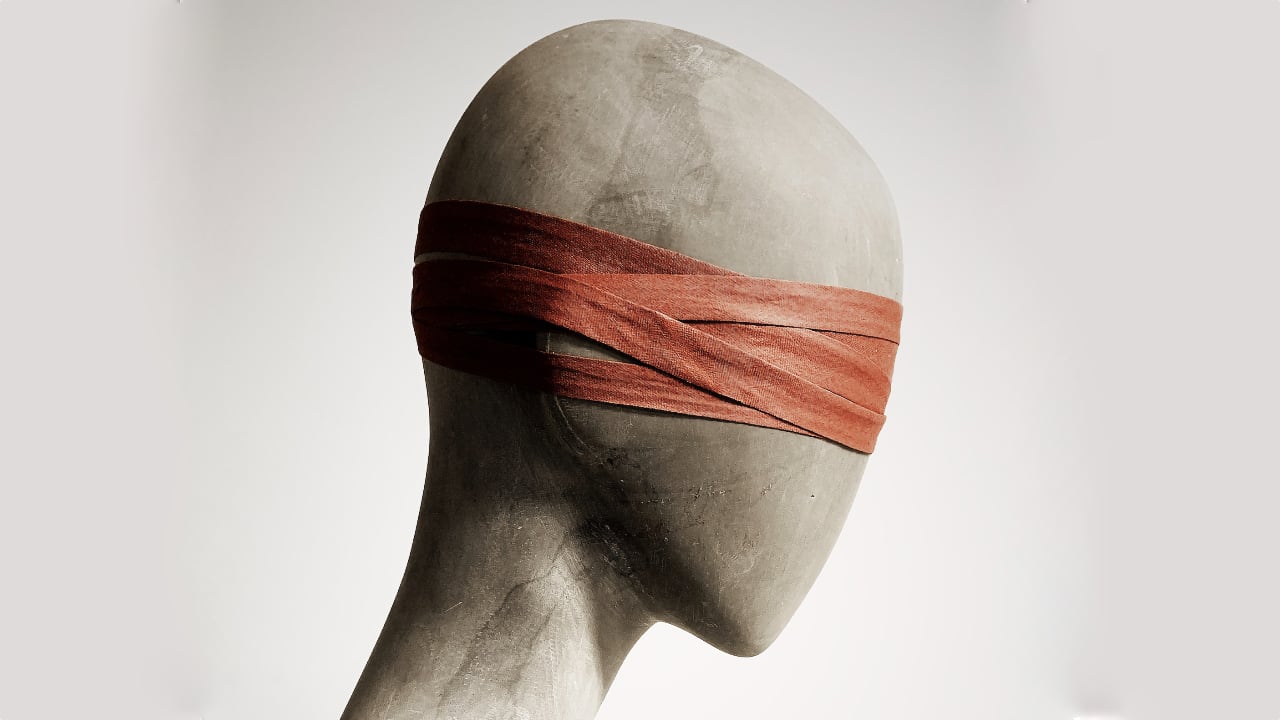

The next resolution revolution will be more about us than cameras. It will be about understanding how we see things. And it will lead to the conclusion that high resolution is ultimately made by us - whatever the capability of the technology.

It probably won't surprise you to learn that this paradigm change will come partly from AI. But that's not the whole story or even the beginning of it. To understand the future of video, we need to look inside our heads and ask, "How do we really see things?"

Seeing is believing

There are, as ever, several answers to this. Obviously, we see things with our eyes. But that's anything but a complete explanation. It merely kicks the problem further upstream. Ultimately, we will only get to the bottom of this once we start talking about perception and consciousness, and when we do, we'll realise that this is not just any problem; it's a hard problem.

The Australian cognitive scientist David Chalmers said this about the hard problem:

"There is not just one problem of consciousness. "Consciousness" is an ambiguous term, referring to many different phenomena. Each of these phenomena needs to be explained, but some are easier to explain than others. At the start, it is useful to divide the associated problems of consciousness into "hard" and "easy" problems. The easy problems of consciousness are those that seem directly susceptible to the standard methods of cognitive science, whereby a phenomenon is explained in terms of computational or neural mechanisms. The hard problems are those that seem to resist those methods."

Broadly speaking, the "easy" parts of consciousness to study are those which you can test or measure - like the response of neurons to stimuli. The "hard" parts are those that you can't measure. But you can't deny that those hard-to-explain parts exist because whichever way you try to analyse consciousness away, it remains stubbornly "there" and is what comprises the essence of the living, thinking "you".

You might reasonably ask at this point why consciousness matters when discussing video. As long as we know what we're seeing, why does it matter beyond that?

Here's why. Take a few deep breaths. (And because this stuff is pretty intense, I've broken it into two parts. First, we'll deal with the "in your head" bit here and with the AI in a later column.)

The reality dysfunction

In my view, one of the most important books of this century is "Being You", by Anil Seth. He aims in the book to build a theory of consciousness, and while he's doing that, he turns our default understanding of perception entirely on its head. We don't perceive reality directly. Instead, we somehow function in the world through an overlapping series of controlled hallucinations. On the face of it, it's hard to even begin to understand what that means, but it boils down to something quite simple but remarkable.

The first thing to understand is that what we assume to be reality isn't reality. That red rose, pink flamingo or blue sky aren't objectively those colours at all. Or, indeed, those things. We can't conclude that there is a direct connection between the world outside our head and what we perceive to be there. If we could do that, it would mean we already had a mechanistic, deterministic notion of the nature of consciousness. But there isn't a direct cable connection between what we see and what we perceive. Far from it.

Instead, Seth suggests, the way our perception works is that we make guesses about the world based on what we know so far. Those guesses are fed into our "reality engine" (my words, not his), which is the source of very real-looking hallucinations.

"Guessing" doesn't sound exceptionally reliable, but that's less of a problem than it sounds because we continuously improve our guesses as new information arrives. This process isn't as chaotic or random as it sounds because our guesses follow a methodology with a name you might recognise if you've ever looked into machine learning or computer vision. It's Bayesian inference, and it's essentially a way to improve the accuracy of guesses as more information arrives. For example, if you're driving along the road and see someone lying in your path, you might assume they've fallen ill. But then you notice they're wearing a tee shirt with the words "No Airport Runway Extension". So this looks like it might be a protest. Shortly after, dozens of similarly-dressed people arrive, followed by police sirens and large numbers of police officers. You're still left guessing, but your guesses have painted an almost complete picture, which happens to correspond with the truth.

While your initial assumption might have been off the mark, it quickly gets corrected. This happens all the time, on a big scale, a small scale, and everything in between.
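For readers who like to see the arithmetic, here is a minimal sketch of that kind of Bayesian updating, using the roadside example above. It is only an illustration: the two hypotheses, the starting belief and every probability are invented for the purpose, and nothing here is meant as a model of what the brain actually computes.

```python
def bayes_update(prior, likelihood):
    """Return the updated belief over hypotheses after one new clue.

    prior:      {hypothesis: P(hypothesis)} before the clue
    likelihood: {hypothesis: P(clue | hypothesis)}
    """
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalised.values())
    return {h: p / total for h, p in unnormalised.items()}


# First guess on seeing someone lying in the road (made-up numbers).
belief = {"ill": 0.9, "protest": 0.1}

# How likely each clue would be under each hypothesis (made-up numbers),
# listed in the order the clues arrive.
clues = [
    ("slogan tee shirt", {"ill": 0.05, "protest": 0.8}),
    ("dozens of similarly dressed people", {"ill": 0.01, "protest": 0.9}),
    ("police arrive in numbers", {"ill": 0.1, "protest": 0.9}),
]

history = [belief]
for clue, likelihood in clues:
    belief = bayes_update(belief, likelihood)
    history.append(belief)
    print(f"after '{clue}': P(protest) = {belief['protest']:.3f}")
```

Even with a first guess that leans heavily the wrong way, a few clues are enough to swing the belief almost entirely towards "protest". Change the invented numbers and the speed of the correction changes, but the pattern of guess, check and revise stays the same.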

None of this sounds much like our lived experience of perception, nor a sound basis for our conscious thought processes; it seems counter-intuitive. And yet, it is plausible because it solves the problems surrounding how we can have a subjective experience (i.e., what the colour red seems like to me) that is also somehow tied into the objective world.

Whither pixels?

Did you notice the role that pixels play in any of this? No? Well, that's the point. We don't think of pixels. We don't see in pixels. Our thoughts and imaginings are not pixelated.

But, without a direct and deterministic connection between the world out there and our own subjective perception of it, shouldn't it all seem to be a bit, well, blurry?

Here's a quick thought experiment to explain why our own thoughts and, yes, our subjective experiences are perfectly sharp and seem real to us.

Imagine being back at school. Outside, there's a playing field where you used to go and run around with your friends. Now, in your head, freeze-frame your mental image of a patch of grass about a third of a metre square. Again, in your mind, "zoom in" until you can clearly see the individual blades of grass. Keep zooming. Don't stop until you can see the actual structure of the grass: the ribs supporting the leaf, the texture, the subtle variations in the colour.

Is it still in focus? Yes. How could this be possible? It's not as if you carry a gigapixel photo of that patch of grass in your mind. So what exactly is it that you're seeing here?

What you're seeing is created by you and informed by your previous experience. You've seen grass close up, and what you're seeing is what you remember but projected onto your imagined patch of grass. And because this is the product of your "reality engine", it looks real because you're defining it as real.

The same process applies to the world outside your head. Instead of relying on memory, you have objective reality to guide your guesses. But you're not seeing reality: you're seeing the result of accurate guesses, verified and reinforced by reality.

Our sense of realism is generated by us. It is, essentially, a label we choose to attach to an experience. It's like a metadata tag where the value "Real" is set to "1" instead of "0". It's why dreams seem so convincing (and so absurd - because they are both real and yet they can't possibly be!). But it's also why video resolution might not be as important as we have nearly always thought it to be.

Self-resolution

Just to be completely clear: if we generate the sensation of reality to ourselves, then all we need to do to give a sense of high resolution is trigger the application of that label, rather than having to go to extreme lengths to capture and reproduce extremely detailed pictures.

Generative AI very nearly offers validation of this. What could be less like a high-resolution image than a paragraph of text? But feed that text into Midjourney 5 (5.1 is available now), and with a bit of practice, you'll get extremely high-resolution images like the ones in Simon Wyndham's definitive article, "How to get great results with Midjourney".

You can perhaps see where this is headed. AI will be our interface with "I" - our own intelligence. We're on the threshold of a seismic shift in our knowledge of ourselves and of the world. Our relationship with reality is morphing towards a more deeply understood and profoundly helpful model. In the space of six months, our assumptions of the previous fifty years about the future of content creation have been blown away. The dust hasn't settled yet. When it has, what will be there is anybody's guess.
