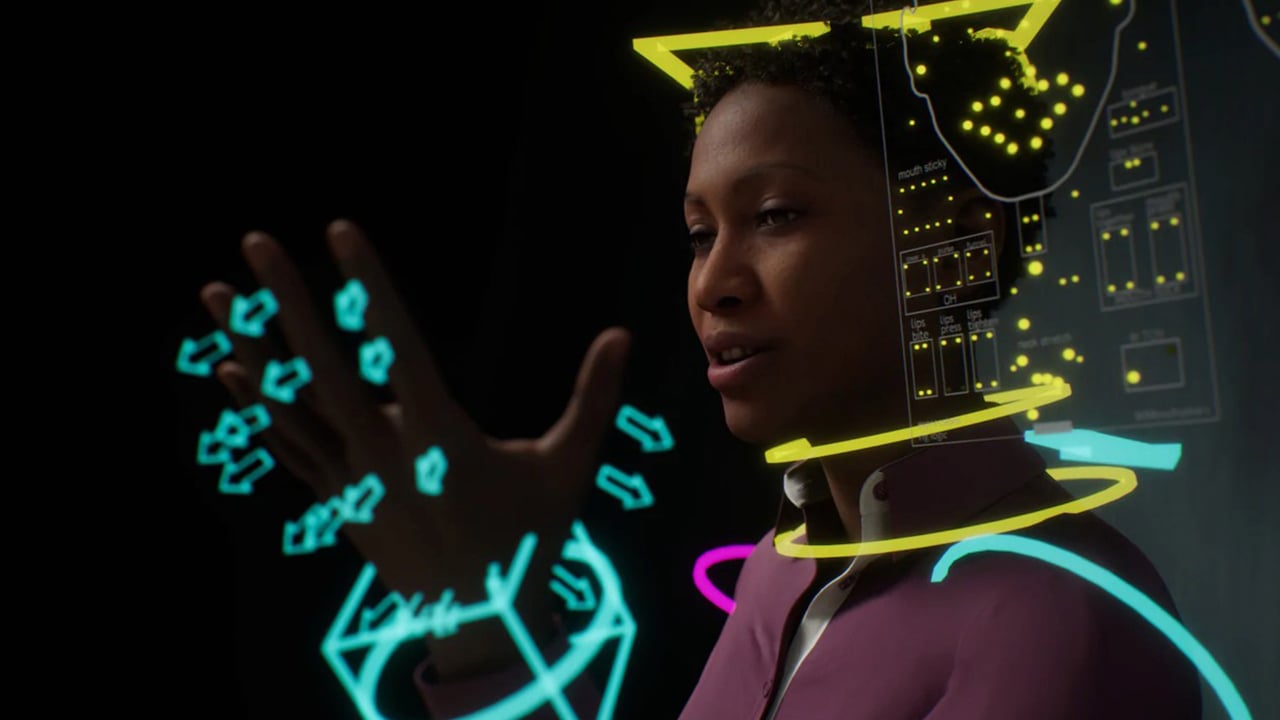

This article by RedShark's former Editor in Chief, David Shapton, is an important insight into where technology is heading. The metaverse - a converged and universal 3D overlay on real life - is the direction that almost all new and cutting edge technology is taking us. Previously only hinted at in science fiction, it is now becoming science fact.

Don’t feel puzzled or inadequate if you haven’t come across the metaverse yet. But be prepared to be surprised or even amazed. It is the biggest of big pictures. And it is the convergence point of most, if not all, media, computing and communication technologies.

The metaverse is what the internet is likely to become. It will be an “emergent property” of new and exciting technologies like Virtual Reality (VR), Augmented Reality (AR) and Extended Reality (XR); 5G; Edge computing; AI; new chips that combine low power consumption with high processing power (like Apple's M series); new internet satellite networks; real-time games engines; and GPUs.

It will affect every sector of the economy and our daily lives, but most notably retail, entertainment and leisure, design, manufacturing, medicine, education, transport (especially autonomous vehicles), user interfaces, content creation, telecommunication, and travel.

What is the metaverse?

There’s a big clue in the way we talk about “the metaverse” and not “a metaverse”. There will only ever be a single metaverse. That’s critical. The metaverse is only a metaverse if there is only one of them.

The metaverse is a shared 3D space where users can see other people and share experiences. It can mirror the real world, or can be a total fantasy. That’s up to the user and both versions - and everything in between - will be supported. There will be specialist devices to access it where you need extra high precision but most functions will be supported by devices that will become everyday.

So to some users it will be like being inside an actual computer game. To others it will be the way we do our shopping. To industry, it will support micron-accurate simulations of production processes. To a surgeon, it will allow them to actually be inside a human body.

All of this is going to demand some massive improvements in technology. And that process of improvement is exactly what is going on now.

No need for cumbersome headsets

It is obvious that we won’t be walking down the high street any time soon wearing an immersive VR HMD (Head Mounted Display). It would be dangerous. What’s far more likely, and much more practical, is that we will access the metaverse through AR (Augmented Reality) or XR (Extended Reality). Apple is reportedly developing AR glasses.

Exactly when we will be able to buy these is anyone’s guess. If there is an issue with them, it is probably that technology in this area is changing so fast that any snapshot of the current state of the art baked into Apple’s product is likely to be obsolete before it even gets to market.

Apple knows this and will wait until they are far enough ahead of the curve before they release a product.

As time passes, AR displays will improve. They might project an image onto the retina. Or they might bypass physical perception altogether and communicate directly with the brain through a low-level “reality” language (that we have, of course, yet to discover).

If this all seems like fantasy, remember that we now have AI-based video codecs. At the point where you already have a cognitive process - albeit an artificial one - encoding live video streams, being able to talk directly to the visual side of our brains doesn’t seem quite so far away.

Augmented Reality is almost the perfect description of the role of the metaverse

AR provides a 3D modelled overlay on top of our visual experience. As well as showing “generated” objects in the real world, there will be a data layer too, helpfully annotating other people’s faces with a reminder that we met them two years ago in Austin, Texas, that they have three children and like snowboarding.

While all of this might seem “nice to have” but very far from necessary, there are reasons to think that the importance of the metaverse will be much more than this.

It is also worth remembering that we already have a global data overlay on our real life but instead of seeing it as part of our visual field, we see it on our smartphones, smart watches and digital car dashboards.

How can the metaverse become as big as the internet?

It will happen gradually, and while it is growing, it won’t be the metaverse, just small slivers of it. Eventually, though, all the individual examples of metaverse-type thinking will join up and we will suddenly realise that we live in a shared augmented reality.

Here is an example of metaverse-type thinking.

Individual Metaverse applications will be developed, like the ability to walk through a virtual shop in an avatar of your body. Your avatar will carry your measurements and perhaps your clothing preferences - privately and only released with your permission. You'll be able to try on a dress in a shop, look in a virtual mirror, and see yourself wearing the garment, complete with folds and accurate movement, generated by the Metaverse's physics engine.

If you want to buy the dress, you just say “I’ll take that” to the virtual assistant, who will wrap it up and send it to you, through the real mail. But beyond that, because your avatar carries your complete set of measurements (obtained by scanning yourself with the LIDAR sensor in your iPhone) the “shop” will send your measurements with your order to a clothing manufacturer. There will be a Clothing Description Language that fully describes the garment so that it can be manufactured just for you to fit perfectly.
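To make the idea of a garment order that carries its own measurements a little more concrete, here is a minimal sketch in Python. No "Clothing Description Language" exists in this form: every class, field name and value below is hypothetical and chosen only for illustration. The one point it tries to capture from the text is that measurements travel with the order only when the user has given permission.

```python
from dataclasses import dataclass, asdict
import json


@dataclass
class Measurements:
    # Hypothetical body measurements, in centimetres.
    chest_cm: float
    waist_cm: float
    hip_cm: float


@dataclass
class GarmentOrder:
    # Hypothetical order record: a garment identifier plus the wearer's measurements.
    garment_id: str
    measurements: Measurements
    share_measurements: bool = False  # released only with the user's permission


def to_order_payload(order: GarmentOrder) -> str:
    """Serialise an order as JSON, withholding measurements unless permitted."""
    data = asdict(order)
    if not order.share_measurements:
        data["measurements"] = None
    return json.dumps(data, sort_keys=True)
```

A real description language would need far more than three numbers (fabric, cut, seams, drape), so this should be read as a shape for the idea and not as a specification.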

While you could reasonably argue that this is niche, remember that this doesn’t replace the shopping experience: it enhances it. This will work equally well in an actual shop as in a virtual one.

How can all of this even be possible?

It is possible and indeed very likely because of the current trajectory of several complementary technologies. Here are some examples.

Real-time, photorealistic games engines

Computer games provide the best example yet of shared 3D worlds. Half-Life, Fortnite, Avakin Life and Minecraft are wildly different examples of shared 3D worlds. Other games that focus on realism rather than the shared nature of the experience commonly use Games Engines like Unity and Unreal Engine.

Recently, Unreal has achieved extraordinarily high quality and is even being used in feature film production as a virtual background. It has recently revealed “MetaHumans” - photorealistic models of human beings that don’t require weeks of design and rigging.

5G and Edge

This is about more than cellphones. It’s about infrastructure: visible and invisible. Not only will 5G provide high bandwidth, low latency access to the internet, it will be the “last mile” of Edge computing. From any 5G device, it will be as though you’re connected to a powerful graphics and AI workstation, because the responses you get from your device will be so fast and so rich.

ARM chips everywhere

Somewhere just outside of our awareness, Apple has built a whole computer platform around its own ARM chips, the M series. All of a sudden, they’ve taken the lead in price/performance/power consumption.

At the same time, the Cupertino innovators have built AI and all other kinds of specialist processing into the chips themselves, and unified the sections with a shared on-chip memory that has a common high speed bus. The result: an eye-watering increase in performance.

Intel disputes the scale of the performance gains, but it’s clear that a new trend in processor design has broken through. It’s not exclusive to Apple, either: other semiconductor houses like Qualcomm are coming through with their own designs.

What it means for the world is that high power, hugely efficient processors with specialist accelerators will become universal. This can only benefit the metaverse, and bring many of its wilder-sounding features a lot closer.

Artificial Intelligence

AI is growing in capability exponentially, and is doubling in power much faster than the soon-to-be-obsolete Moore’s Law. One unique characteristic of AI is that it is capable of improving itself, so it is very hard to predict how fast it will improve, or in which directions.

Much of the AI in the cloud is powered by Nvidia, known to most users as makers of GPUs - graphics cards and chipsets. And this is a useful coincidence, because not only is GPU compute resource at the centre of metaverse visual experiences, so is AI.

How will we reach the metaverse?

A true metaverse is not here yet. Most people haven’t even heard about it. That’s normal: the vast majority of present day internet users had not heard of the internet even while it was being rolled out. There’s a lot we can learn from that experience.

The early internet was built to be a robust form of communication that could essentially repair itself if it was damaged. Messages carried their own destination addresses and could “find their way” even if their original planned route was broken.
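The self-repairing behaviour described above can be illustrated with a toy example. The sketch below is a deliberate simplification: it uses a breadth-first search over a hypothetical four-node network held in one place, whereas real internet routing relies on distributed routing protocols. The function and network names are invented for this illustration.

```python
from collections import deque


def find_route(links, source, destination, broken=frozenset()):
    """Breadth-first search for a path, skipping any links marked as broken."""
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == destination:
            return path
        for neighbour in links.get(node, []):
            link = frozenset((node, neighbour))
            if neighbour not in visited and link not in broken:
                visited.add(neighbour)
                queue.append(path + [neighbour])
    return None  # no surviving route to the destination


# Hypothetical network with two ways from A to D: via B or via C.
NETWORK = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C"],
}
```

With every link intact, a message from A to D travels through B. If the B-D link is marked as broken, for example `find_route(NETWORK, "A", "D", broken={frozenset(("B", "D"))})`, the same message reaches D through C instead.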

But the web, social media and OTT video were not around then. Even though the web seems intrinsic to the internet now, neither it, nor HTML, were available at the start. They are, essentially, applications running on the internet. Before that there were a few special cases that needed the internet infrastructure, but it wasn’t seen even remotely as the sort of all-embracing and ubiquitous service that it is today.

It is hard to encapsulate all the changes the internet has brought to not just specialist users but the entire world.

And that’s exactly what the metaverse will be, probably sooner rather than later. It will be an all-embracing fabric of interconnected services that appear to us either as a layer of data and 3D images to augment our visual field, or a completely immersive environment where we can indulge in fact or fantasy, or anywhere in between. It will be our choice how to engage with it.

Baby steps, but some steps bigger and faster than others.

Virtual film production, multi-user games with avatars and 3D worlds and the growing scope and ambition of social media will take us in the direction of a functional metaverse. Unlike building an aircraft or a spaceship, where it’s not done until it’s done, the metaverse can, somewhat surprisingly, unfold in layers and in specialist segments.

Crucially, once you break away from the idea that the metaverse has to be immersive, blocking out and replacing all other sensory input, it’s possible to nurture it as an overlay or enhancement to our everyday lives. It won’t be necessary to leave our normal realm of existence any more than television replaced reality when it arrived. Instead, it became a (limited) visual portal into events (real and fictional) taking place somewhere else.

Cooperation and openness

Crucial to the growth and success of metaverse applications will be willingness to interoperate between parties making up the metaverse. This is already happening. Nvidia’s Omniverse is a quite different endeavour but may well become part of the mechanics of the metaverse. Omniverse is a set of tools to replicate the real world with great precision.

It will be used for everything from modelling a production line to building immersive 3D film sets that can be reused and repopulated for sustainability. Crucially, it uses Pixar’s USD (Universal Scene Description) and its own MDL (Material Description Language) to provide exchangeability between 3D assets and lighting.

These are great examples of Metaverse-type thinking and show that its goals and ambitions are realistic.

Widely available description languages and open APIs will mean that the metaverse will be bigger than the sum of its parts, with the best of the best fully able to take part.

The ultimate home

It will be the ultimate home of social media, games, films and TV. It will be a space where humans can safely join others in times of health emergencies and it will be a portal into shared experiences for everyday life. It will improve sustainability. We will be able to meet people and travel virtually. There will be less need for physical travel.

It will empower people living with disabilities and give them an equal status in the virtual world. Online services will find new, engaging and intuitive ways to interact with their users.

It will never stop growing. As technology progresses, we - the human race - may even merge with it. This will be a gradual process. In the same way as we look back now and can’t imagine life without the internet, at some point we will wonder how life could have existed without the metaverse.

This article was reprinted with permission.
