Canon has developed new deep learning algorithms that it says can correct all known image defects.

In a new paper, released in Japanese on Canon's global website, the company has unveiled a deep learning technology that it says can correct for all known issues with digital cameras. The new system tackles three specific areas: noise reduction, colour interpolation, and aberration and diffraction correction.

The system has been trained on thousands of raw images within Canon's databases, taking into account all manner of different lens types and camera settings, as well as subject matter.

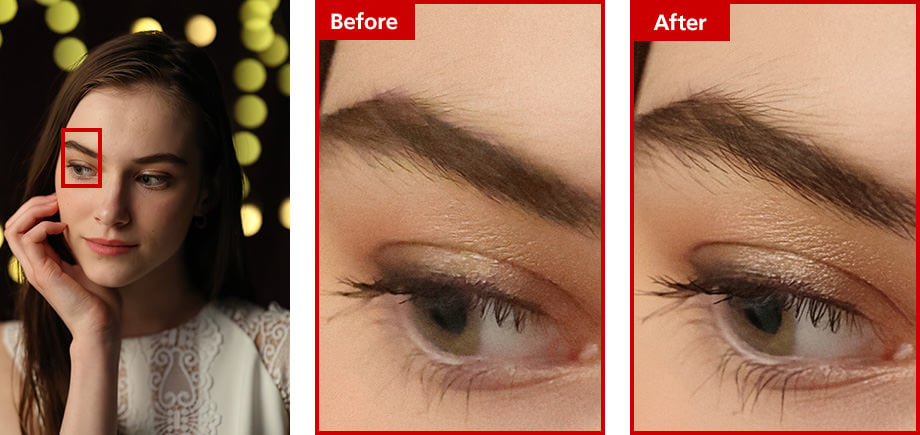

If we take noise reduction as one example of its capabilities, Canon has realised that most standard noise reduction algorithms can overcompensate and end up losing real detail. The paper states (directly translated from Japanese), "We established the 'Neural network Noise Reduction' function that allows you to get clear and high-quality images. It has become possible to amplify the information of light in high-sensitivity photography and remove the amplified noise at the same time, and express the smooth skin texture (skin tone) that was inhibited by the occurrence of noise."

Original image from the camera on the left, deep learning result on the right
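To see why conventional noise reduction can cost real detail, here is a minimal sketch using a plain box blur on a one-dimensional "scanline". This is not Canon's neural network, and the signal, noise level and function name are illustrative assumptions. It only shows the trade-off the paper is trying to avoid: averaging neighbouring samples suppresses noise, but it also flattens genuine edges.

```python
import random
import statistics


def box_blur(signal, radius):
    """Replace each sample with the mean of its neighbours within `radius`."""
    n = len(signal)
    out = []
    for i in range(n):
        window = signal[max(0, i - radius):min(n, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


random.seed(0)

# Hypothetical scanline: a dark area, then a bright area, with a sharp edge between.
clean = [0.2] * 50 + [0.8] * 50
noisy = [v + random.gauss(0, 0.05) for v in clean]
smoothed = box_blur(noisy, radius=4)

# In a flat region the noise level drops after smoothing...
flat_noise_before = statistics.pstdev(noisy[10:40])
flat_noise_after = statistics.pstdev(smoothed[10:40])

# ...but the same filter applied to the noise-free signal shows the cost:
# the step across the edge shrinks from 0.6 to 0.6 / 9.
blurred_clean = box_blur(clean, radius=4)
edge_step_before = clean[50] - clean[49]
edge_step_after = blurred_clean[50] - blurred_clean[49]

print(f"flat-region noise: {flat_noise_before:.3f} -> {flat_noise_after:.3f}")
print(f"edge step:         {edge_step_before:.3f} -> {edge_step_after:.3f}")
```

A learned denoiser of the kind Canon describes aims to get the first effect without the second, by telling noise and real detail apart rather than averaging everything equally.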

Solving the most common photographic image issues

Another area that the new system tackles is moiré and 'jaggies', artefacts brought about by the way that digital sensors capture images. Sometimes, no matter how good the camera or lens is, some patterns can still cause problems.

Canon states, "Canon has utilized a rich image database to establish a color interpolation deep learning image processing technology named 'Neural network Demosaic'. We built a learning data set considering the visual characteristics that human vision reacts sensitively to differences in brightness and does not respond much to color changes. As a result, by focusing on subjects that are difficult to guess in color interpolation processing, accidental interpolation is suppressed. The fake colors of striped shirts, jaggies that often stand out with diagonal lines, and moires and false colors that stand out with photos of pets can be accurately interpolated, and the sense of resolution and color reproducibility can be improved."

Image: Canon.

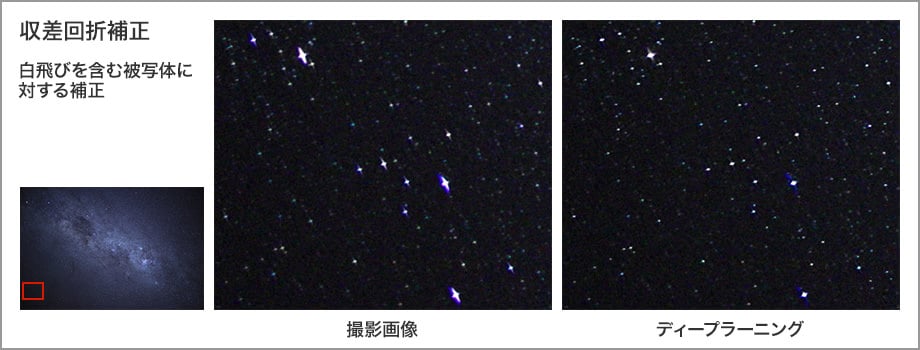
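For readers unfamiliar with colour interpolation, the sketch below shows where false colours come from. It assumes an RGGB Bayer layout and uses simple bilinear interpolation, a textbook baseline and not Canon's "Neural network Demosaic"; the function names and the tiny test scene are made up for illustration. Each sensor pixel records only one colour, so the other two have to be guessed from neighbours, and fine stripes defeat that guess.

```python
def bayer_channel(y, x):
    """Colour index (0=R, 1=G, 2=B) recorded at pixel (y, x) in an RGGB layout."""
    if y % 2 == 0:
        return 0 if x % 2 == 0 else 1
    return 1 if x % 2 == 0 else 2


def mosaic(rgb):
    """Simulate the sensor: keep only the one colour each pixel actually records."""
    return [[rgb[y][x][bayer_channel(y, x)] for x in range(len(rgb[0]))]
            for y in range(len(rgb))]


def demosaic_bilinear(raw):
    """Fill in missing colours by averaging same-colour samples in the 3x3 window."""
    h, w = len(raw), len(raw[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            pixel = []
            for c in range(3):
                if bayer_channel(y, x) == c:
                    pixel.append(raw[y][x])  # measured directly, no guessing
                    continue
                samples = [raw[ny][nx]
                           for ny in range(max(0, y - 1), min(h, y + 2))
                           for nx in range(max(0, x - 1), min(w, x + 2))
                           if bayer_channel(ny, nx) == c]
                pixel.append(sum(samples) / len(samples))
            row.append(tuple(pixel))
        out.append(row)
    return out


# Hypothetical scene: neutral one-pixel-wide white and black vertical stripes.
stripes = [[(1.0, 1.0, 1.0) if x % 2 == 0 else (0.0, 0.0, 0.0) for x in range(6)]
           for y in range(6)]
result = demosaic_bilinear(mosaic(stripes))

print(result[2][2])  # (1.0, 0.5, 0.0): a white pixel comes out orange
print(result[2][3])  # (1.0, 0.0, 0.0): a black pixel comes out red
```

The scene contains no colour at all, yet the reconstruction is strongly tinted, which is the "striped shirt" problem in miniature. A flat grey scene passes through the same code unchanged, so the error only appears where detail approaches the pixel pitch.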

Lastly, the system tackles lens problems such as aberration, edge softening, and diffraction, which can be caused by using particular aperture settings. Canon's system can bring back detail that is traditionally lost to such issues, and the examples given show how effective it is. Of particular note is how much the system can help with astrophotography, allowing the photographer to be much freer with their settings, safe in the knowledge that the image can be properly corrected later on.

Correcting astrophotography

Currently the processing is performed on the image file that the camera produces, so it isn't clear whether Canon eventually intends the system for use inside the camera itself. Given the prevalence of computational photography right now, it wouldn't be entirely surprising if some of this technology were applied inside the camera eventually, although professionals usually want this type of control after the fact to be sure they are getting the result they want. That said, I can see this being applied inside more consumer-orientated cameras, with separate software available for the power users.

If you have translation software, or you can read Japanese, read Canon's paper over on its global website.
