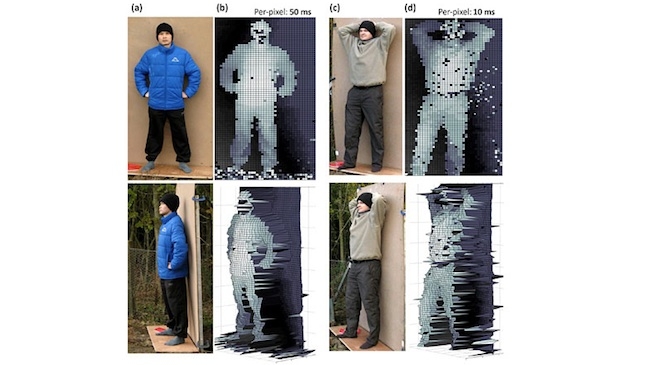

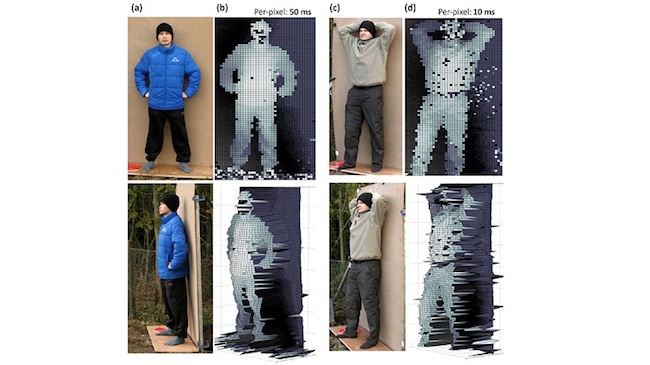

3D results from the new system

A team of physicists from Heriot-Watt University in Edinburgh has developed a laser-based technology that can take millimetre-precise 3D images of objects up to a kilometre away.

Essentially it works in a way similar to sonar and radar by bouncing a laser beam off the object and measuring how long it takes the light to travel back to a detector. The technique, called time-of-flight (ToF), is already used in machine vision navigation systems for autonomous vehicles and other applications, but many current ToF systems have a relatively short range and struggle to image certain objects.
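To put rough numbers on that principle, here is a minimal Python sketch of the basic time-of-flight arithmetic: distance is the speed of light multiplied by the round-trip time, divided by two. The function names are ours, purely for illustration, and the calculation uses the vacuum speed of light, ignoring the small correction for air.

```python
# Basic time-of-flight arithmetic (illustrative sketch, not the team's code).
C = 299_792_458.0  # speed of light in vacuum, metres per second


def distance_from_round_trip(t_seconds: float) -> float:
    """Distance to the target given the round-trip time of a light pulse."""
    # The pulse travels out and back, so halve the total path length.
    return C * t_seconds / 2.0


def round_trip_for_distance(d_metres: float) -> float:
    """Round-trip time for a pulse reflected from a target d_metres away."""
    return 2.0 * d_metres / C


if __name__ == "__main__":
    # A target one kilometre away returns light after a few microseconds.
    print(f"1 km round trip: {round_trip_for_distance(1000.0):.3e} s")
    # Resolving one millimetre of depth means timing to a few picoseconds.
    print(f"1 mm depth step: {round_trip_for_distance(0.001):.3e} s")
```

The second figure is the interesting one: telling apart two surfaces a millimetre apart means resolving arrival times that differ by only a few picoseconds, which is why timing precision matters so much at these ranges.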

Developed by a team led by Professor Gerald Buller from the School of Engineering and Physical Sciences, the new system captures reflected laser pulses from famously ‘uncooperative’ objects that do not easily reflect laser pulses, such as fabric. It does so by rapidly sweeping a low-power infrared laser beam, with a wavelength in the 1560 nanometre range, over an object. It then records, pixel-by-pixel, the round-trip flight time of the photons in the beam as they bounce off the object and arrive back at the source.
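As a rough illustration of how per-pixel flight times could become a depth image, the Python sketch below bins the photon arrival times recorded for each pixel and takes the most populated bin as the true return. This is a generic histogram-peak approach that we are assuming for the sake of example, not the Heriot-Watt team's published processing; the bin width and the function names `pixel_depth` and `depth_map` are hypothetical.

```python
# Per-pixel depth from photon round-trip times (hypothetical sketch).
from collections import Counter

C = 299_792_458.0    # speed of light in vacuum, metres per second
BIN_WIDTH_S = 2e-12  # assumed timing-bin width (2 picoseconds)


def pixel_depth(arrival_times_s):
    """Estimate the depth of one pixel from its photon round-trip times.

    Stray photons from background light land in scattered bins, while
    genuine returns pile up in one bin, so the fullest bin is used.
    Returns None when no photons were detected for the pixel.
    """
    if not arrival_times_s:
        return None
    bins = Counter(round(t / BIN_WIDTH_S) for t in arrival_times_s)
    peak_bin, _count = bins.most_common(1)[0]
    # Convert the peak round-trip time back to a one-way distance.
    return C * (peak_bin * BIN_WIDTH_S) / 2.0


def depth_map(scan):
    """Apply pixel_depth to every pixel of a scan (rows of timestamp lists)."""
    return [[pixel_depth(pixel) for pixel in row] for row in scan]


if __name__ == "__main__":
    t_100m = 2.0 * 100.0 / C  # round-trip time for a surface 100 m away
    scan = [[[t_100m, t_100m, t_100m, 1e-7], []]]  # one noisy pixel, one empty
    print(depth_map(scan))
```

Pixels that return no photons at all come back as `None`, which is the situation the article goes on to describe for surfaces that reflect too little light at this wavelength.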

The primary use of the system is likely to be scanning static, human-made objects, such as vehicles, though the team also cites other examples such as the remote examination of the health and volume of vegetation and the movement of rock faces, to assess potential hazards. And it is, apparently, particularly good at identifying objects hidden behind clutter, such as foliage.

Here's the snag

Before anyone from the production community gets too excited about its potential, there is a significant wrinkle in that a 1560nm laser doesn’t work well with human skin, which simply doesn’t bounce back a large enough number of transmitted photons to obtain a depth measurement. However, Red Shark would bet a fairly large proportion of its NAB expenses that some VFX departments somewhere are already pricking up their ears, stroking their collective beards, and working out what the international dialling code is for Edinburgh.

Dr Aongus McCarthy, Research Fellow at Heriot-Watt University, said in a statement: “Our approach gives a low-power route to the depth imaging of ordinary, small targets at very long range.

“While it is possible that other depth-ranging techniques will match or out-perform some characteristics of these measurements, this single-photon counting approach gives a unique trade-off between depth resolution, range, data-acquisition time and laser-power levels.”

Ultimately, McCarthy also says that it has the potential to scan and image objects located as far as 10 kilometres away. “It is clear that the system would have to be miniaturised and made more rugged, but we believe that a lightweight, fully portable scanning depth imager is possible and could be a product in less than five years.”
