Intel has developed a first-of-its-kind self-learning neuromorphic chip which could scale to a supercomputer thousands of times more powerful than any today.

An experimental research system that simulates the way human brains work could replace the GPU for the most compute-intensive tasks, such as artificial intelligence, within five years.

According to analyst Gartner, as reported in The Wall Street Journal, neuromorphic chips are expected to be the predominant computing architecture for new, advanced forms of artificial-intelligence deployments by 2025.

By that year, Gartner predicts, the technology is expected to displace graphics processing units, one of the main types of computer chip used for AI systems, especially neural networks. Neural networks are used in speech recognition and understanding, as well as computer vision.

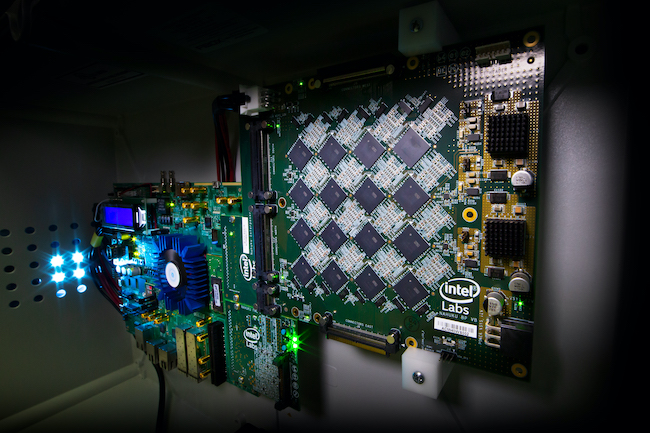

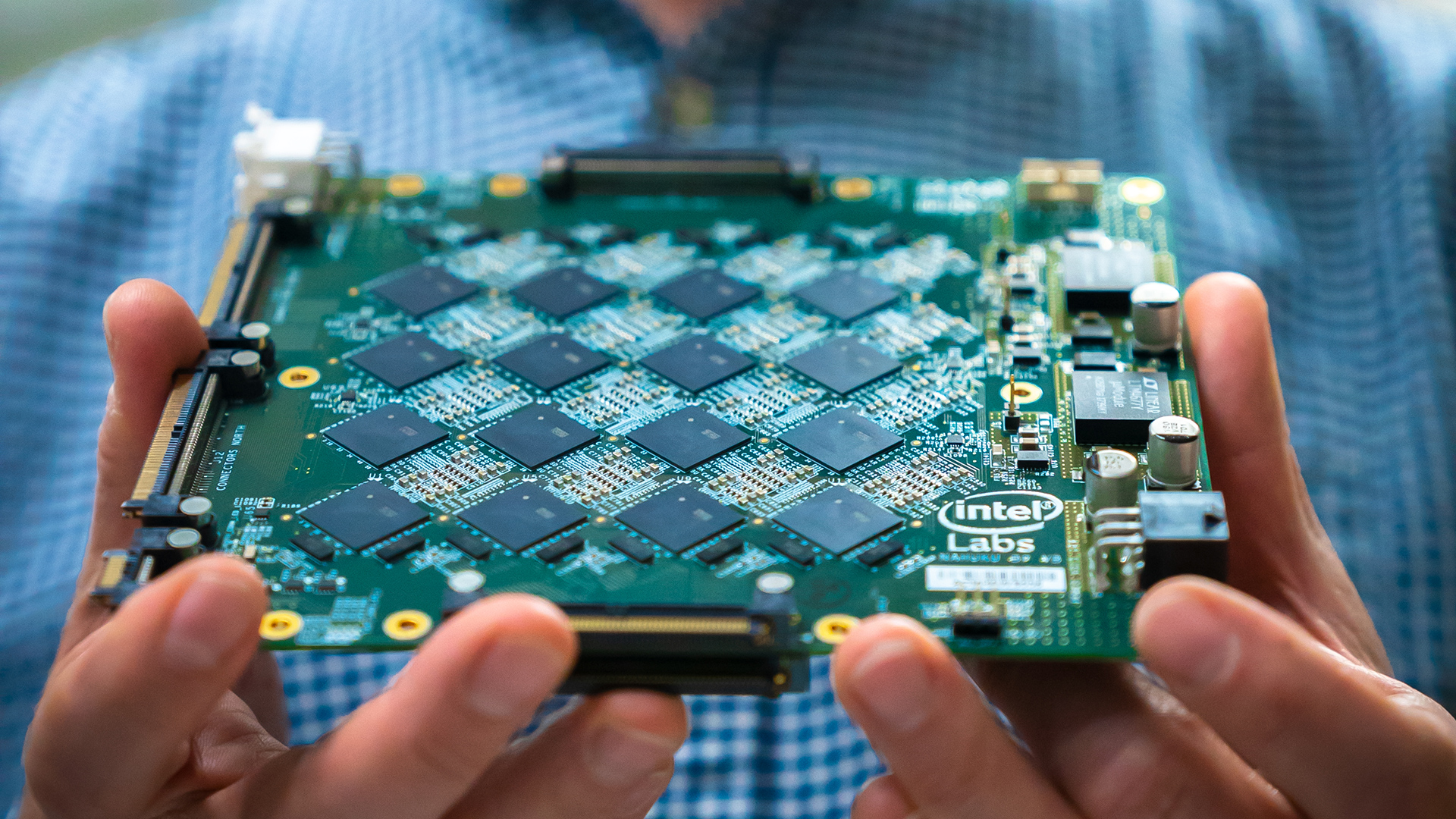

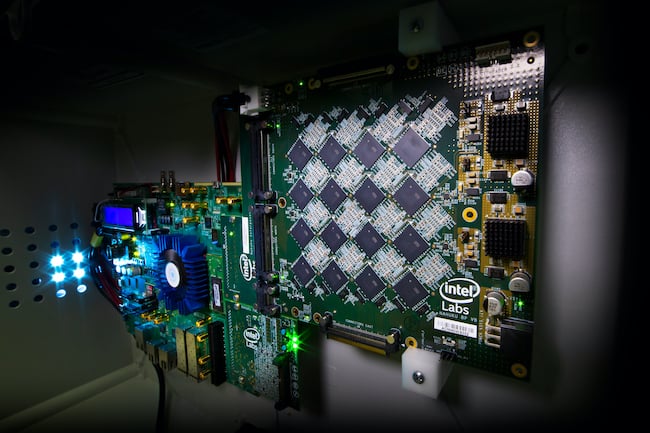

This news is prompted by Intel’s debut of Pohoiki Springs, a neuromorphic computing system comprising about 770 neuromorphic chips inside a chassis the size of five standard servers.

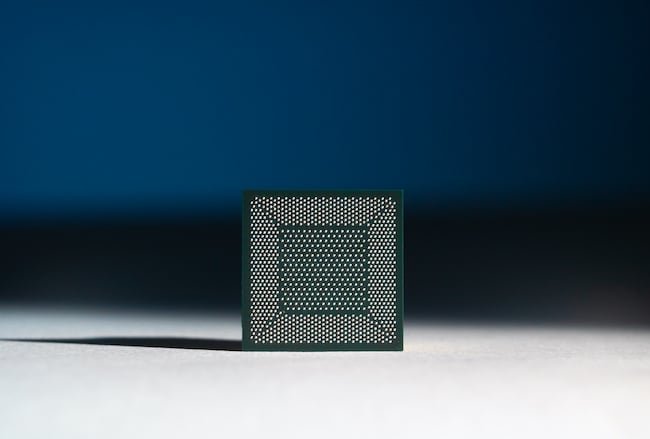

Loihi

The chip – codenamed Loihi – mimics how the brain functions by learning to operate based on various modes of feedback from the environment. This extremely energy-efficient chip, which uses the data to learn and make inferences, gets smarter over time and does not need to be trained in the traditional way.

Loihi processors take inspiration from the human brain. Like the brain, Loihi can process certain demanding workloads up to 1,000 times faster and 10,000 times more efficiently than conventional processors. Pohoiki Springs is the next step in scaling this architecture to solve not just artificial intelligence problems, but a wide range of computationally difficult problems.

Pohoiki Springs is being made available, via the cloud, to the Intel Neuromorphic Research Community. This includes about a dozen companies (such as Accenture and Airbus), academic researchers and government labs.

The Loihi chip

Neuromorphic computing will make it possible to train machine-learning models using a fraction of the data it takes to train them on traditional computing hardware.

Intel’s Neuromorphic Computing Lab director Mike Davies explained that the models “learn similarly to the way human babies learn, by seeing an image or toy once and being able to recognise it forever.”

The models can also learn from data nearly instantaneously, ultimately making predictions that could be more accurate than those made by traditional machine-learning models.

Less energy use

Another major benefit of neuromorphic computing is the potential for it to perform calculations using much less energy. Developing a single AI model, for example, can generate a carbon footprint equivalent to the lifetime emissions of five average cars, according to researchers at the University of Massachusetts.

“It’s going to make some computations possible that are intractable today because they require a lot of energy or too much time to calculate,” Davies said. “Unlike in traditional machines, in the Pohoiki Springs system, the memory and computing elements are intertwined rather than separate … [which] minimizes the distance that data has to travel.”

Neuromorphic chips could eventually be embedded in cameras.

Accenture Labs has been working with Intel’s researchers since 2018 to see how the technology could benefit AI algorithms that are used in internet-connected devices, such as security cameras that are constantly detecting motion.

Insect to mole to human and beyond

To give us an idea of its power, Intel compares its computer brain to that of beings in the natural world.

Some of the smallest living organisms can solve remarkably hard computational problems – packing a lot of problem-solving power into tiny membranes and consuming remarkably little energy.

Many insects, for example, can visually track objects and navigate and avoid obstacles in real time, despite having brains with well under 1 million neurons.

Today, Pohoiki Springs has the computational capacity of 100 million neurons. That compares favourably to the size of a small mammal brain, according to Intel, which describes this as a major step on the path to supporting much larger and more sophisticated neuromorphic workloads.

The system lays the foundation for an autonomous, connected future, which will require new approaches to real-time, dynamic data processing, Intel says.

Intel has already tested a single neuromorphic research chip to train an AI system to recognise hazardous smells using one training sample per odor, compared to the 3,000 samples required in state-of-the-art deep-learning methods.

Intel neuromorphic computing lab senior research scientist Nabil Imam believes that neuromorphic systems will be able to “diagnose diseases, detect weapons and explosives, find narcotics, and spot signs of smoke and carbon monoxide.”

Scientists at IBM and HP, along with researchers at MIT and Stanford, are also investigating the technology, which, according to Imam, could be used “to develop a supercomputer a thousand times more powerful than any today.”
