EPFL Face Capture with Kinect

At RedShark, we're technology junkies. And one area that's really providing us with some thrills at the moment is motion capture - not using expensive motion capture suites, but everyday devices like the Microsoft Kinect.

Now, the Kinect might look like a low-cost peripheral for the Xbox 360, but its influence goes much deeper and wider than that. Almost from the first day it was released, developers have hacked into its API and done clever things with its ability to sense what's happening in the 3D, or volumetric, space in front of it.

Microsoft now has an officially documented API for Kinect, which has spurred even more development, and what we're seeing now is quite incredible.

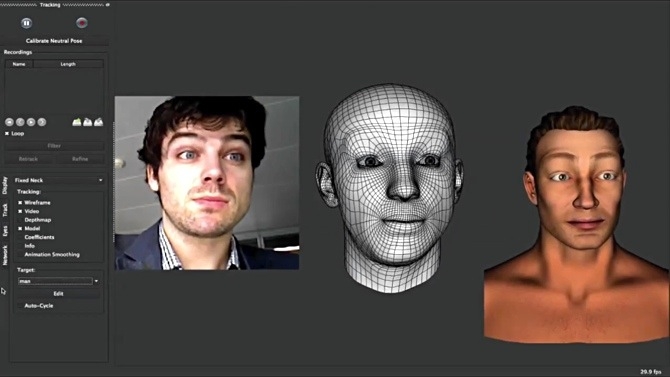

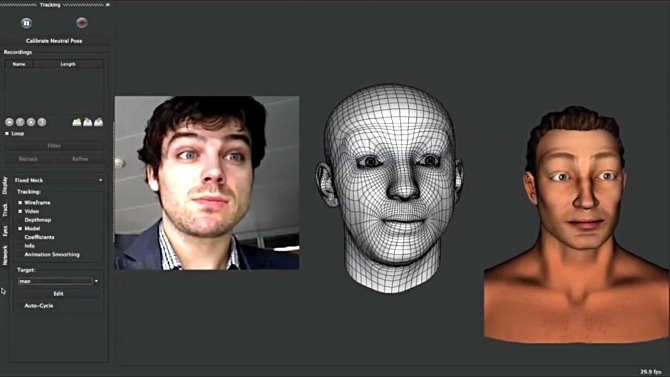

We'll be writing soon about another Kinect project where real-world objects interact with a game's physics engine courtesy of Kinect, but until then, have a look at this video, where complex facial expressions are captured and mapped onto 3D models in real time.

Is it really real?

Of course, you can always say that you need more detail and more realism - which is absolutely true, and also quite likely to arrive within a year or so, because all it will take is a Kinect with more resolution and faster processing.

This will bring with it other problems - the biggest of which is the "Uncanny Valley": the chasm between ultra-realistic CGI faces and their ultimate believability. The trouble, it seems, is that the more realistic artificially created creatures become, the more we fight against them.

Watch the demo below from EPFL, an institute for Life Science, Architecture and Computer Sciences located on the shore of Lake Geneva. The technique is all explained in the video. And remember - this is not just about gaming or person-to-person messaging. This is actually a way to create CGI animation quickly on a very low budget.

Tags: Technology
