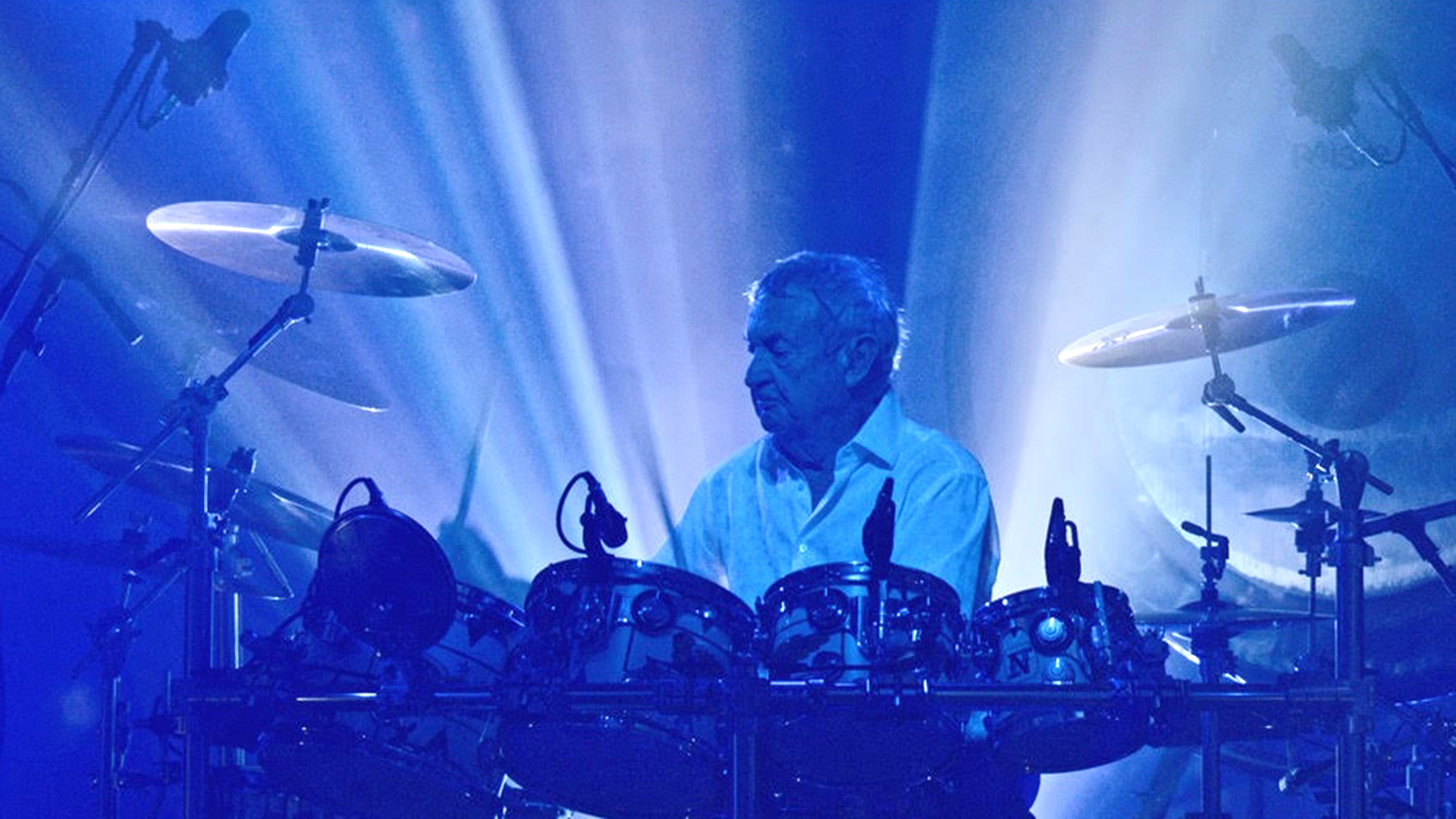

Filmmaker and director James Tonkin shares learnings from his recent experience shooting and editing a concert and documentary for Pink Floyd drummer, Nick Mason.

Throughout my twenty-year career, I have managed all kinds of post-production workflows and have come to learn that the magic is in setting it up the right way. Nowadays, we seem to work in such a way that we’re constantly importing and exporting, and nothing follows a straight linear path anymore.

For example, you might have just started your first day of editorial, and by the end of that week, the client might want a fully edited and graded first couple of tracks to see what they will get three months down the line. So it’s essential to set yourself up in a way that keeps the workflow fluid, offering much-needed flexibility, right up to the point that you hand over the final copies of the drives.

Last year I successfully tested a ten-camera setup entirely in DaVinci Resolve on a documentary project for ‘5 Seconds of Summer,’ and I wanted to replicate that approach and setup for Nick Mason’s concert project, which this time involved twice the number of cameras.

The project centered around a two-day live concert shoot, with a handful of documentary filming days too. Relying on a combination of Alexa, REDs and Sony F55s, we used 20 cameras, all monitored and controlled through an OB truck, recording each ISO in camera. We captured in 4K, 6K and 8K, then applied a custom base LUT across everything to establish some consistency.

We shot all nineteen songs in multi-cam. The key was that during the performance, we were able to play the track in real time, live direct the shots, and quickly switch in and out with alternate angles at any given moment. When shooting multi-cam, I’m also insistent that it remains active all the way through, so that we have the flexibility to switch angles at any stage in the post process too, right down to the last second before we deliver.

I loaded everything on to an iMac Pro running DaVinci Resolve on day three of filming. We then started the first day of editing a couple of days after wrapping the shoot - a pretty impressive turnaround considering we were dealing with more than 22 terabytes of rushes.

File organisation

Project organisation

I started the project on our shared database, assigning different user logins for various jobs, which meant that people could work on offline edits in one room, and I could work in parallel prepping looks, testing effects, and starting the grade.

The colour pipeline needed to run in parallel to the edit as I didn’t want to spend three months on a project before I started to think about the grade. Instead, I set LUTs on day one to give me a rough base look. This meant that I only needed to make a couple of tweaks on certain shots and songs, and used this colour pipeline right the way through to approval screenings.

From experience, I have learned that this simplicity can only exist by staying inside one application throughout the entire post-production chain.

We used compound clips across everything in this project for the first time. So instead of having a single two-hour master timeline containing every individual cut, I had one timeline per song, and then a master timeline with all the songs as compound clips within it. This made it much easier to move songs around on the master timeline, change the running order and trim things without worrying about three hundred cuts per song.

The timeline itself became much cleaner, simpler and a lot easier to manage. For fiddly things such as the intro, title sequences and credits, where there tend to be lots of stacked layers and effects, it meant that the timeline was still manageable, so I could make any necessary changes quickly.

Obsessive editing

When you start editing a whole concert film, you can very quickly forget about the two hour duration that the audience is going to watch it over. You can get obsessed with the cuts of each song, but I was keen to keep assembling the show as we went. By the end of the first week, we had assembled the first five or six tracks, and we also had documentary sections that we were cutting in as well.

I had already planned the structure around the set list, but you never know if it’s actually going to work until you sit and watch it and see the flow of it. It’s only then you realise where it's going to hold your interest and whether it's going to be the right time to dip out of the concert and into the documentary section.

As I was working, I made Dropbox folders so that I could continuously export tracks or sections of the project for our team and producer to watch and be involved at all stages. It's much more important to be reviewing and playing back regularly, adopting an almost film-set-like mentality where you have those regular screenings to keep on top of things.

One application

With Resolve I have really embraced the idea that you're in one application, and I have seen how much more flexible we are as a production company for it.

For example, I can finish shots from the very beginning. If a handheld camera needs stabilizing, Resolve allows you to do it right there and then. I just hop into the Colour Page one click away, stabilize it, then just continue right on where I left off with my edit.

It has also helped to reduce the amount of time spent doing conforms, fixing XML linking problems, and all of the other non-creative elements that eat into your time when moving between applications.

Post production, in my view, isn’t the linear process it once was. The idea that you start with a locked cut and then send that off for a grade and mix just isn’t practical today. These days, it’s fairly typical for clients to be making decisions on a project right up until the point of mastering, and so every aspect of post has to run in parallel, seamlessly.

About James Tonkin: James Tonkin is a filmmaker and director of Hangman, a London-based creative studio that works across commercials, documentaries, music videos and live filming for broadcast, cinema and online.
