A Dell computer monitor flight cased for use on set. Notice the huge difference in colour between the Dell and the display on the Atomos recorder on top of it.

The Art of Coarse Monitoring: All about monitoring - when you absolutely should and when you may be able to get away with less-than-ideal options.

Recently we've talked quite a lot about monitoring, calibration, and LUTs. It's a huge subject, but one recurring question keeps coming up: if we're producing material for people who generally don't have particularly high-grade monitors, is there any value in pitching for perfection in monitoring ourselves?

The simple answer to this is, of course, yes, for two reasons. The first is that, although there's a lot of variation in displays, it's a lot easier to say "it's not my work, it's your TV" if one's own monitoring is in order; that's the motivation behind most of the claims made about calibration being essential. It's a business decision, not a technical one. It's also more of a problem in some circumstances than others: if we know our material must pass a distributor's quality control assessment, it may be more important to get things right (although even then, experience can account for a lot of it). But if we're producing music videos or short films on a low budget, there may be better places to spend money than on ensuring the monitoring is a couple of delta E closer to ideal, because there will be more tolerance of inaccuracy.
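For anyone unsure what "a couple of delta E" means in practice, here is a minimal sketch of the simplest version of the measure, CIE76, which is just the straight-line distance between two colours in CIELAB space. The patch values below are hypothetical, chosen only for illustration, and calibration software often reports the more elaborate CIEDE2000 figure instead, so treat this as a rough guide rather than what any particular package does.

```python
import math


def delta_e_76(lab1, lab2):
    """CIE76 colour difference: Euclidean distance between two CIELAB colours."""
    return math.dist(lab1, lab2)


# Hypothetical values for illustration only, given as (L*, a*, b*).
target = (50.0, 0.0, 0.0)      # the colour the display was asked to show
measured = (51.0, 1.5, -1.0)   # what a probe might read back from the screen

error = delta_e_76(target, measured)
print(f"delta E (CIE76): {error:.2f}")
```

With these made-up numbers the error comes out at a little over 2, which is the sort of "couple of delta E" difference under discussion.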

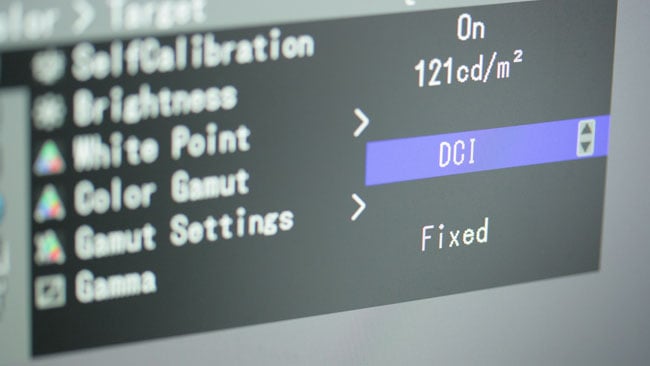

Some displays, such as this Eizo, have inbuilt calibration and are widely used for film and TV work, although they can be about as expensive as low-end dedicated video monitors.

Aim for the middle...

It's also possible to argue, quite validly, that a large part of the purpose of calibrating monitors is to ensure they're right in the middle of the range of inaccuracy that exists in domestic equipment, to minimise the chances of anything looking seriously odd on any TV in particular. Ideally, someone would have performed a survey of not just how good domestic TVs are, on average, but how they deviate from the norm. Subjective experience suggests that they tend to be over-contrasty and over-saturated, particularly in the reds, and tend, especially when old, to deviate towards the yellow. Therefore, a truly well-calibrated display might not actually sit in the middle of what's normal. Nonetheless, experience suggests that properly calibrated reference displays tend to produce pictures that, when displayed on most consumer gear, are acceptable to most consumers.

More costly displays are likely to include cinematography-oriented display options.

A question of priorities

The principal counterargument is whether it's really that creatively crucial that the image we see on a calibrated grading display is precisely what the audience ends up seeing. Realistically, given the motley assortment of tablets, cellphones and TVs that comprise the output devices of YouTube, that's almost never going to be the case anyway, except in the increasingly rarefied circumstance of theatrical distribution (and even then...).

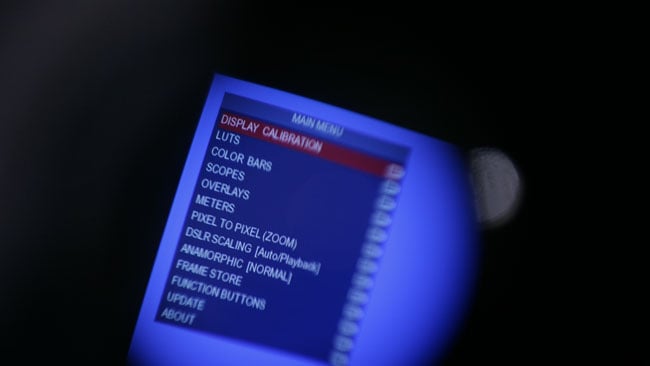

Even some viewfinders, such as this Zacuto Gratical HD, have calibration options.

As such, there are legitimate concerns over exactly how crucial any of this is in the first place. I say this in the knowledge that it will provoke a mailbag bulging with comments from irate colourists with five-figure investments in display technology, but there is a grey area in which a picture can look acceptable without looking identical to the way it did in the grading suite. In reality, practically all pictures seen in people's homes and on their personal electronics fall into this category.

Let's understand that this isn't about encouraging laziness; it's about spending money in the right areas to make the production look good. Lighting, grip and production design are extremely important to every production. Accurate monitoring is desirable, but given the choice between that and a decent location or a good-looking and well-fitted costume, the latter wins every time. To use a terrible bit of corporate management-speak, there's a lot of working in professional silos in film and TV and, on a high-end show, there's not often much question of trading off a high-end monitor for another hair or makeup artist. But on smaller stuff, on more agile productions which can act more flexibly, that sort of thinking is very much possible.

Coarse monitoring

So, to practical advice. On set, it's the wild west: if we're shooting log formats, the main purpose of monitoring is to indicate that the image we're recording can be, via grading, made to look the way we want it to look. Now, there are still plenty of reasons to want the displays to be accurate, especially if we're going to give the monitor LUTs to the colourist as a starting point so that what's seen on set and what's seen in post match as closely as they can. Even so, given the inaccuracy (or at least the impression of inaccuracy) caused by varying ambient light, the incredible time pressure and the fact that it's all going to be tweaked anyway, it may be difficult to justify carrying the most expensive monitoring options every single day of the shoot.

This degree of inaccuracy is common for basic computer monitors, but applications such as SpectraCal's CalMAN, plus a probe, can correct for it.

Given all the above, it's pretty clear that if there's a place where highly accurate monitoring is most essential, it's at the point of final grading. At that point, it's at least possible to tweak out any previously encountered problems. Happily, it's never been easier to get into at-least-reasonable monitoring, given really quite decent displays from companies such as BenQ and HP's widely admired DreamColor range. Quite affordable monitors are often now supplied with respectable factory calibration, and we can take advantage of this quite effectively.

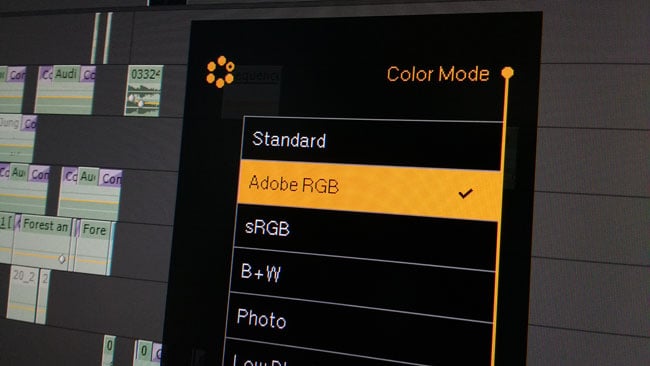

Many displays, such as this BenQ SW2700PT, lack video-oriented colorimetry options, but the modes they do support can provide a surprisingly accurate starting point.

We can't completely ignore the downsides of coarse monitoring. In the worst possible case, an on-set monitor could be so far out of true as to provoke, say, considerable underexposure, resulting in additional noise once the problem is fixed later. That's a pretty extreme circumstance, though, and it's much more likely that a mistake in menu setup or LUT selection would cause similar or worse problems. There can also be arguments between camera and postproduction departments over what the image was supposed to look like, although in most circumstances the inaccuracy of even quite a bad monitor would represent less of a deviation from ideal than the subjective, creative arguments that often arise.

This is not a manifesto for sloppiness or an excuse not to try. It's an attempt to be clear on when we can get away with cutting corners and when we can't – and how to get the best out of available equipment. It's easy to insist that everything must be perfect and that nothing less can be tolerated; that doesn't require any skill. What's difficult is getting reasonable results when things are less ideal, and if this article helps clear up any arguments over how that's best done, it's been successful.
