AI is becoming ever more important to solving difficult problems, but it has lacked the ability to understand context until now.

Context is everything. AI can solve many problems, and it is increasingly important to how modern computing works. But it's still a bit of a blunt instrument, and an important hurdle to overcome is its inability to understand the contextual relationship between things.

Imagine an object such as a bottle or glass sitting on a tabletop. Until now, AI might identify the bottle or the glass, but it wouldn't understand that those objects were physically on top of the table. Instead, in the world of the AI system, they are simply objects that are next to one another, and for all it knows the bottle or glass is floating above the table.

MIT has developed an AI system, called 3DP3, to understand these contextual relationships using probabilistic programming. For example, the system can cross-check detected objects against input data to see whether images recorded from a camera match any candidate scene.

The probabilistic programming technique effectively gives the system 'common sense', and allows it to account for any errors that might occur with a deep-learning approach.

For objects like those mentioned above, the system can infer how they relate to one another within a scene. For example, if a stick is leaning up against a wall, the system will be able to 'reason' that the stick is in the position it is because it is in contact with the wall.

This means that the system can more accurately place objects in 3D space and better understand the complex relationship between them.

Old concepts

The system was developed by drawing on an early AI concept that assumes computer vision is the 'inverse' of computer graphics. That is to say that computer graphics generates images from a representation of a scene, whereas computer vision works backwards from an image to the scene that produced it. The researchers took this concept and made the technique more learnable and scalable.
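
The 'inverse graphics' idea can be illustrated with a deliberately tiny sketch. This is not how 3DP3 is implemented; it is a toy under stated assumptions: the 'scene' is a single block in a one-dimensional image, and the names `render`, `log_likelihood` and `infer_position` are hypothetical. The graphics step draws an image from a candidate scene, and the vision step searches for the candidate whose drawing best explains what the camera saw.

```python
def render(position, width=10, block=3):
    """Toy 'graphics': draw a block of 1s starting at `position` in a 1D image."""
    return [1.0 if position <= i < position + block else 0.0 for i in range(width)]


def log_likelihood(observed, rendered, noise=0.5):
    """Gaussian pixel-noise score (up to a constant): higher means a closer match."""
    squared_error = sum((o - r) ** 2 for o, r in zip(observed, rendered))
    return -squared_error / (2 * noise ** 2)


def infer_position(observed, width=10, block=3):
    """Toy 'vision': return the candidate scene whose rendering best explains the image."""
    candidates = range(width - block + 1)
    return max(candidates, key=lambda p: log_likelihood(observed, render(p, width, block)))


# A noisy, made-up observation of a block that occupies pixels 4, 5 and 6.
observed = [0.1, 0.0, 0.2, 0.1, 0.9, 1.1, 0.8, 0.0, 0.1, 0.0]
print(infer_position(observed))
```

Exhaustively scoring every candidate only works because this toy has so few possible scenes; a real three-dimensional scene has far too many, which is where the learnability and scalability work described above comes in.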

"Probabilistic programming allows us to write down our knowledge about some aspects of the world in a way a computer can interpret, but at the same time, it allows us to express what we don't know, the uncertainty. So, the system is able to automatically learn from data and also automatically detect when the rules don't hold," Cusumano-Towner, one of the researchers, explains.

Amazingly, the system requires far fewer resources than deep-learning techniques. For example, whilst deep learning might require thousands of input training images, the 3DP3 approach can learn about an object using only five images taken from different angles. It then learns the object's shape and estimates the volume it occupies.

Lead researcher Nishad Gothoskar said, "If I show you an object from five different perspectives, you can build a pretty good representation of that object. You'd understand its colour, its shape, and you'd be able to recognise that object in many different scenes."

The researchers compared the 3DP3 model to several deep-learning systems and discovered that the new system outperformed them every time, using far fewer resources.

But they also discovered that the 3DP3 system could work in harmony with a deep-learning AI by correcting errors. For example, a deep-learning model might predict an object is floating above another. But 3DP3 can look at the scene and 'realise' through probabilistic reasoning that this is physically impossible and correct the object relationship.
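
As a rough illustration of this kind of correction, the sketch below weighs two explanations for a detected height: the object is resting on the table, or it really is floating. It is not the researchers' code. The function `correct_height`, the noise level, the prior of 0.95 and the one-metre floating range are all assumptions made up for the example.

```python
import math


def normal_cdf(x):
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def normal_pdf(x, mean, sigma):
    """Density of a normal distribution with the given mean and sigma."""
    return math.exp(-0.5 * ((x - mean) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))


def correct_height(z_detected, table_top, sigma=0.05, prior_contact=0.95, float_range=1.0):
    """Return (probability the object rests on the table, corrected height in metres)."""
    # Explanation A: the object sits on the table, so the gap is detector noise.
    like_contact = normal_pdf(z_detected, table_top, sigma)
    # Explanation B: the object floats at an unknown height, uniform over
    # float_range above the table; average the noise model over that range.
    upper = normal_cdf((table_top + float_range - z_detected) / sigma)
    lower = normal_cdf((table_top - z_detected) / sigma)
    like_float = (upper - lower) / float_range
    # Bayes' rule with a prior that strongly favours contact (assumed value).
    weighted_contact = prior_contact * like_contact
    posterior = weighted_contact / (weighted_contact + (1.0 - prior_contact) * like_float)
    corrected = table_top if posterior > 0.5 else z_detected
    return posterior, corrected


# A detector reports the bottle 5 cm above a table whose top is at 0.75 m.
p_near, z_near = correct_height(0.80, 0.75)
# A detector reports it 35 cm above the table.
p_far, z_far = correct_height(1.10, 0.75)
print(z_near, z_far)
```

With these assumed numbers, the small gap is attributed to noise and the object is snapped onto the table, while the large gap is too big for noise to explain, so the contact rule is judged not to hold and the detected height is kept.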

The researchers say that whilst there is a long way to go before this can be done in real time, their ultimate goal is to get the system to understand these relationships from a single image.

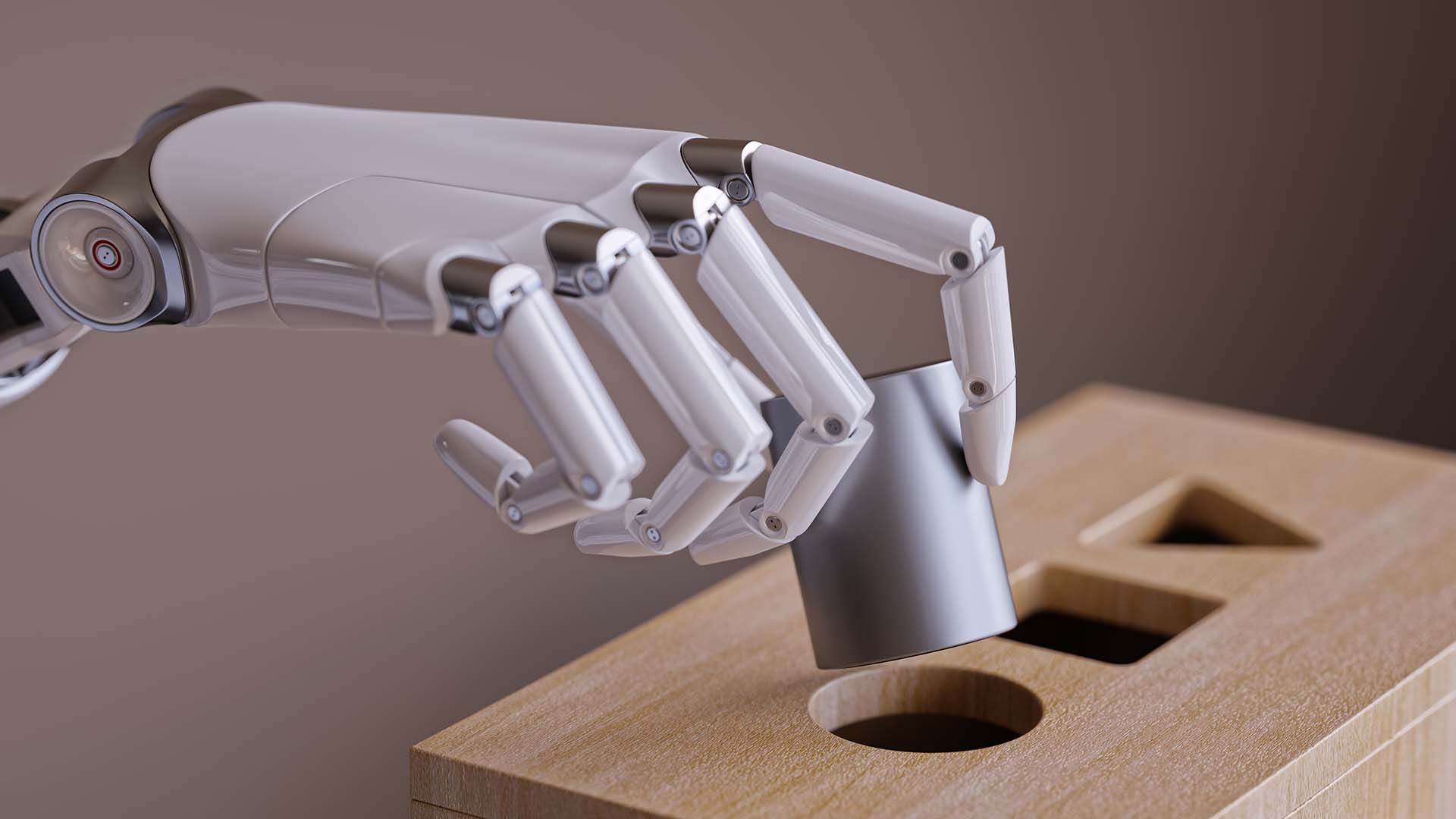

This could be an important development in the context of autonomous vehicle navigation or for robots that might have to manipulate objects, such as one designed for cleaning or organising within a complex environment.

Tags: Technology AI
