IBM’s new dedicated AI processor, NorthPole, makes some interesting trade-offs that could see truly powerful, local AI appearing on a device near you.

We have seen some incredible advances in AI performance this year, from nVidia's monster DGX GH200, a system the size of four African elephants (or something like that), to the truly incredible Cerebras wafer-sized AI processors. IBM's NorthPole purports to outperform them both while consuming far less energy.

NorthPole is based on a 12nm process, which by today's processor standards is pretty old; the Grace Hopper Superchips and the Wafer Scale Engine 2 beasts are made on the far more modern 4nm and 7nm processes, respectively. However, where nVidia and Cerebras take the approach of being simply massive (recall that the Cerebras WSE-2 has 850,000 cores on a single wafer), IBM's approach emphasizes latency and efficiency.

Most processors, including the nVidia and Cerebras ones, use memory outside of the core. To access that memory, the processor has to send a request to the memory controller, wait while the memory controller extracts the requested line from the correct memory cell, and then receive the result. The time involved is the latency: the delay between when the processor requests the data and when it receives it. That round trip also consumes energy, both for signal propagation and for the memory controller, on top of what the processor itself uses.

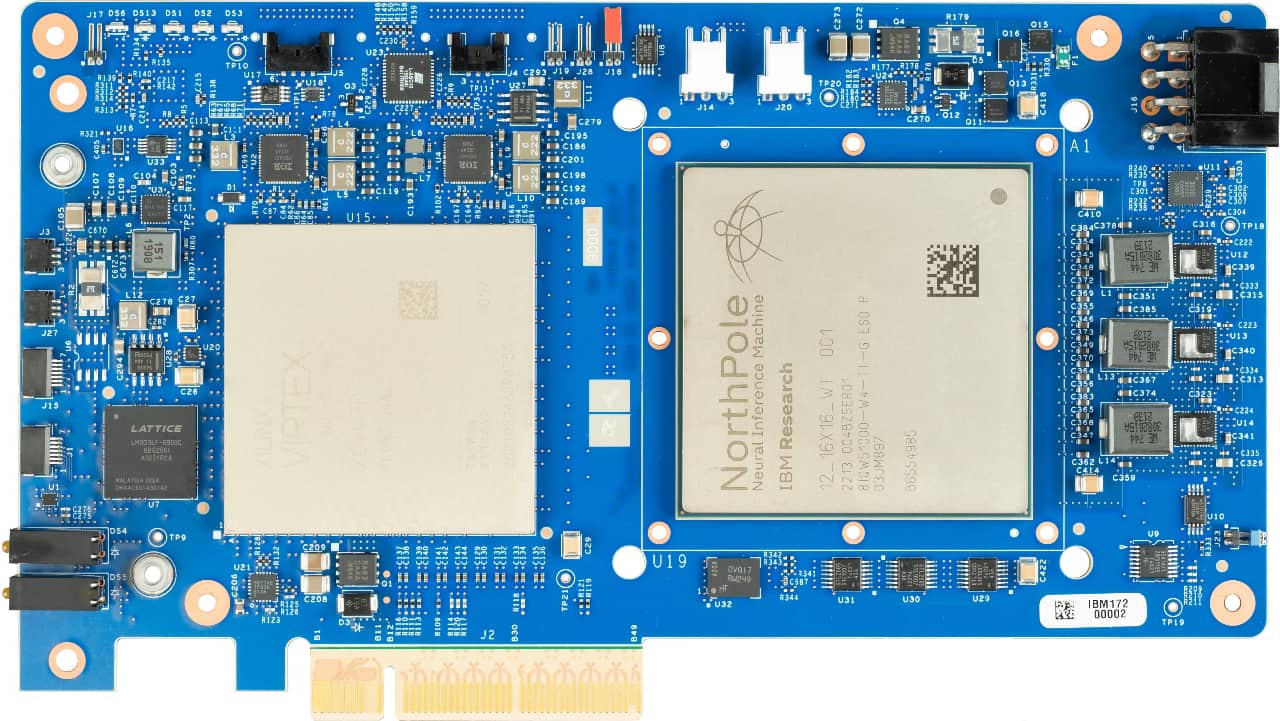

NorthPole is effectively an extension of TrueNorth, a ‘brain-inspired’ chip that started development a decade ago. It is a “neuromorphic” processor, which means that it has physical memory built into the chip. Basically, each core has its own local memory. For models that fit within the collective memory of the processor, that means it never needs to burn energy or time retrieving data from an external source. NorthPole has “only” 256 cores and 224 megabytes of memory on each 800 square mm chip; each core can perform 2048 operations per cycle at 8-bit precision.
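To put those figures in perspective, here is a minimal back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the helper names are made up for illustration, and it assumes 1 MB = 1,000,000 bytes and one byte per 8-bit weight. It also ignores the memory needed for activations, so the "fits" check is an optimistic upper bound rather than a real sizing tool.

```python
# Figures quoted in the article.
CORES = 256
OPS_PER_CORE_PER_CYCLE = 2048  # at 8-bit precision
ON_CHIP_MEMORY_MB = 224

# Assumption (not from the article): decimal megabytes.
BYTES_PER_MB = 1_000_000


def ops_per_cycle(cores=CORES, ops_per_core=OPS_PER_CORE_PER_CYCLE):
    """Peak 8-bit operations the whole chip can perform in one cycle."""
    return cores * ops_per_core


def fits_on_chip(num_weights, bits_per_weight=8, memory_mb=ON_CHIP_MEMORY_MB):
    """Rough check: do the weights alone fit in on-chip memory?

    Ignores activations and any other working storage, so a True
    result is necessary but not sufficient.
    """
    needed_bytes = num_weights * bits_per_weight / 8
    return needed_bytes <= memory_mb * BYTES_PER_MB


if __name__ == "__main__":
    print(ops_per_cycle())              # 524288
    print(fits_on_chip(25_000_000))     # True: a 25M-weight vision model
    print(fits_on_chip(7_000_000_000))  # False: a 7B-weight language model
```

By this rough count, one chip peaks at a little over half a million 8-bit operations per cycle, and its memory tops out somewhere below 224 million 8-bit weights.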

IBM Research fellow Dharmendra Modha says that from the core’s point of view, NorthPole looks like memory near compute, since each core has its own memory, while from the system perspective, NorthPole is “active memory”, or in other words, memory that can do its own computing. The result is a remarkably powerful processor that consumes a remarkably small amount of power. Since it draws comparatively little power, the thermal load is also small, so it only requires fans and heatsinks to keep it cool, unlike the 850,000-core WSE-2.

IBM says that NorthPole delivers 25x the performance per watt and 22x the inference performance of nVidia's 12nm-based V100 Tensor Core GPU, and is 1/5 the size. In spite of the efficiency gains nVidia made on the way to the 4nm-based H100 Tensor Core GPU, NorthPole still has 5x the power efficiency.

Partitioning networks

As mentioned earlier, there is a caveat: the model has to fit in the chip's memory. Modha's team has developed a method of partitioning neural networks too large for one NorthPole into subnetworks that each fit within one chip's memory, and then connecting the chips together. This “scale-out” method does allow NorthPole to scale beyond its fixed built-in memory, but even that can't match the memory capacity of nVidia's DGX GH200 with its giant 144 terabytes, and that is where IBM made the trade-off.
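The article does not describe how IBM's partitioning actually works, so the following is only an illustrative sketch of the general idea, not Modha's method. It assumes the network is a simple chain of layers with known memory footprints and greedily packs consecutive layers onto chips. The function name and the example layer sizes are hypothetical; the 224 MB default is the per-chip figure quoted earlier.

```python
from typing import List


def partition_layers(
    layer_sizes_mb: List[float],
    chip_capacity_mb: float = 224.0,
) -> List[List[int]]:
    """Greedily group consecutive layers so each group fits on one chip.

    Returns a list of partitions, each a list of layer indices.
    Hypothetical illustration only: a real partitioner would also have
    to account for activations and chip-to-chip communication.
    """
    partitions: List[List[int]] = []
    current: List[int] = []
    used = 0.0

    for index, size in enumerate(layer_sizes_mb):
        if size > chip_capacity_mb:
            raise ValueError(
                f"layer {index} ({size} MB) does not fit on a single chip"
            )
        # Start a new chip when the next layer would overflow this one.
        if current and used + size > chip_capacity_mb:
            partitions.append(current)
            current, used = [], 0.0
        current.append(index)
        used += size

    if current:
        partitions.append(current)
    return partitions


if __name__ == "__main__":
    # Made-up layer footprints in MB, purely for demonstration.
    layers = [100, 90, 60, 150, 40, 200]
    print(partition_layers(layers))  # [[0, 1], [2, 3], [4], [5]]
```

In this toy example, the 640 MB network ends up spread across four chips, with each chip passing its output to the next one in the chain.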

NorthPole has nowhere near enough memory to run something like ChatGPT, which relies on an enormous model, and it can only run neural networks that have already been trained. But while the Cerebras and nVidia monsters can run pretty much any AI task required, there is no chance of ever seeing one in something like a drone or a personal computer.

There are a number of applications already where neural nets are trained on large data center AI systems and then deployed to client devices like cars and drones. The inference engines need to be fast, but since they are running already trained neural networks, they can be relatively small.

This is where you are likely to find NorthPole in the future. IBM clearly has no intention of attempting to replace the giant AI supercomputers but rather to complement them by bringing very high inference performance to power-constrained edge computing.

Thanks to Anastasi in Tech for her introduction to NorthPole…
