This is how one North Wales-based company is leading the charge in virtual production.

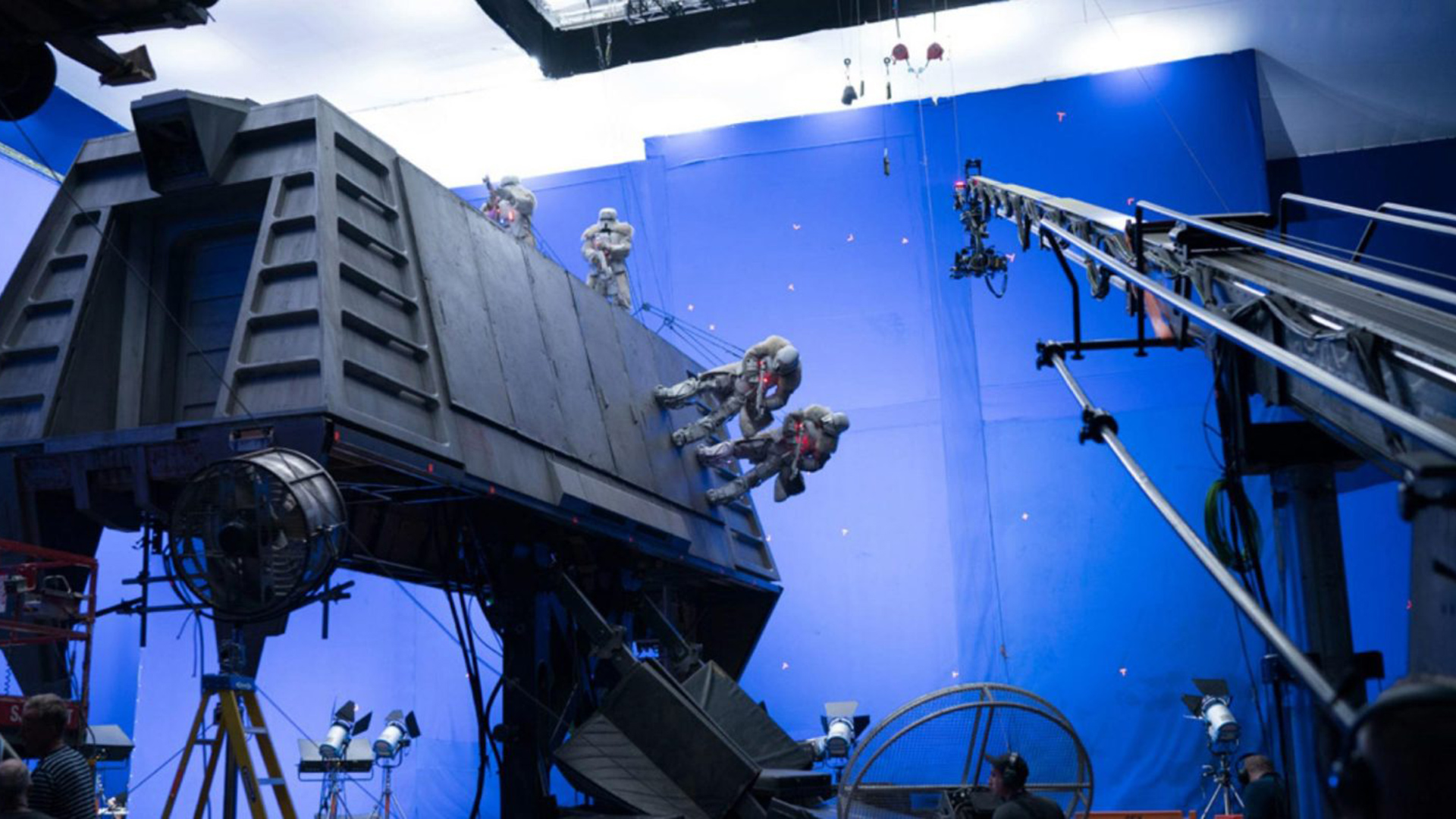

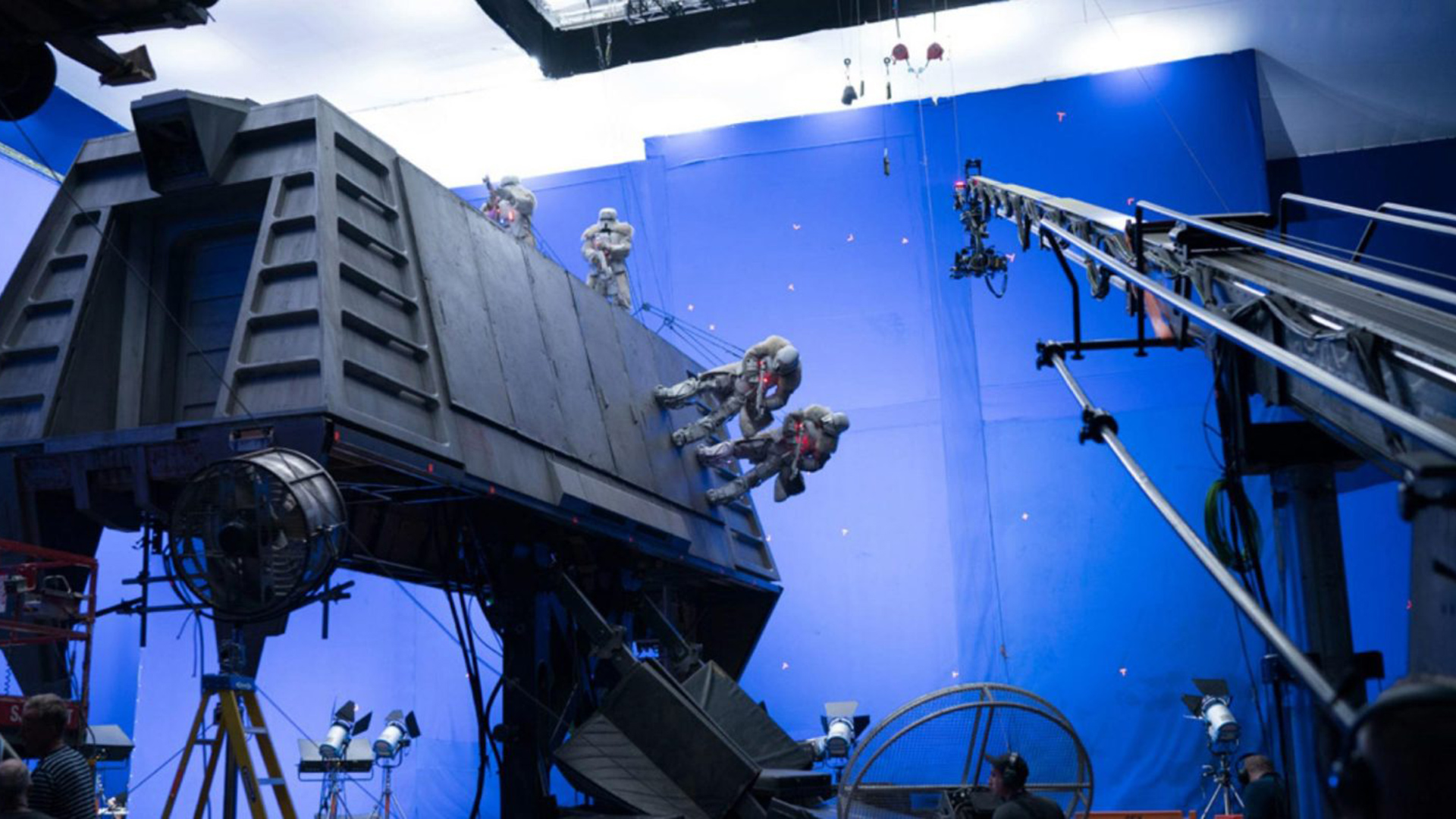

Image: Disney.

In 2009 Avatar pioneered virtual production, and by all accounts Avatar 2, due in 2021 (with its challenging use of actual water rather than CG fluids), is still state-of-the-art. But you don’t need a $250 million budget to merge the physical with the digital into one photoreal story.

Far from it. In the UK, it is On-Set Facilities (OSF) that is leading the charge.

Set up in 2016 in Corwen, North Wales, by Asa Bailey, a former digital creative director at ad agency Saatchi & Saatchi, OSF has grown into a fully managed virtual production studio covering in-camera VFX (LED), mixed reality (green screen), and fully virtual (in-engine) production.

It has sales and support offices in Madrid and LA, partnerships with the leading professional camera tracking firms Ncam and Mo-Sys, and has just signed with ARRI to become an ARRI certified virtual production partner.

Bailey, who is CEO and CTO, said of the alliance: “We can use our knowledge of virtual production systems to supply global clients with a lot more first-class on-set technology. We can now design and provide complete, turnkey, on-set VFX and virtual production studio solutions with total confidence and better meet the growing on-set technology needs of major studios, production companies, and broadcasters.”

ARRI says its “cross-disciplinary competence” in 4K/HDR camera systems, postproduction, and rental, combined with “a deep understanding of content production workflows and environments and expertise in state-of-the-art lighting, places the ARRI System Group ahead of the competition.”

Sony might disagree. James Cameron chose its Venice cameras over the competition to shoot the Avatar sequels.

Nonetheless, the pact could steer ARRI-led productions OSF’s way, and at the very least it means ARRI camera data will have a solid pipeline into OSF’s real-time production tools.

Virtual production systems

OSF’s virtual production systems include camera tracking systems, LED screens for digital backlots, and virtual camera modules, as well as plug-ins for third-party animation tools and software assets that work with Unreal Engine.

Those third-party tools include the Rokoko mo-cap suit, which can stream directly into Unreal Engine via a live link (OSF has demoed this), and 3D character creation tools from Reallusion.

OSF also uses the LiveFace iPhone app (available to download on any iPhone with a depth-sensing camera) and its own motion-capture helmets to capture facial animation.

The firm’s Chief Pipeline Officer, Jon Gress, is also Academic Director at The Digital Animation & Visual Effects School at Universal Studios in Orlando.

OSF even has its own virtual private network for virtual production, StormCloud, currently in beta and connected to the Microsoft Azure cloud. From a network perspective, StormCloud is designed to connect many thousands of remote VPN users working in Unreal Engine. Entry points currently set up in London and San Francisco are being tested by “a number of Hollywood Studios and VFX facilities,” according to the company.

“Filming in-engine and previewed in real-time and with pre-production and editorial teams also connected to StormCloud, virtual production is blurring the lines between what happens on-set physical sets and virtual sets hosted in the cloud,” says Bailey, OSF’s CEO and Virtual Production Director.

The rise and rise of virtual production

Most major films and TV series created today already use some form of virtual production. It might be previsualization, it might be techvis or postvis. Epic Games, the makers of Unreal Engine, believe the potential for VP to enhance filmmaking extends far beyond even these current uses.

Examined one way, VP is just another evolution of storytelling, on a continuum with the shift to colour or from film to digital. Looked at another way, it is more fundamental, since virtual production techniques ultimately collapse the traditional sequential method of making motion pictures.

The production line from development to post can be costly, partly because of the timescales and partly because of the inability to truly iterate at the point of creativity. A virtual production model breaks down the silos between these stages and brings colour correction, animation, and editorial closer to camera. When travel to far-flung locations proves difficult, whether because of Covid-19 or carbon-neutral policies, virtual production can bring photorealistic locations to the set.

Directors can direct their actors on the mocap stage because they can see them in their virtual forms composited live into the CG shot. They can even judge the final scene with lighting and set objects in detail.

What the director sees, either on a tablet or inside a VR headset, can be close to final render, which is light-years from where directors were before real-time technology became part of the shoot.

Productions with scenes made using games engines, camera tracking, and LED screens or monitors include Run, the series executive-produced by Phoebe Waller-Bridge, and Bond 25, No Time to Die, which Waller-Bridge co-scripted.

Coincidence? Yes, of course it is.
