VPilot uses AI to automate webcasting


At IBC, AI could spell job loss and a possible creative vacuum. Where will AI go now?

Let’s get this straight. Ninety-nine per cent of all the products in media and entertainment claiming to be AI are not AI. So says Yves Bergquist, a researcher in AI and neuroscience at the Entertainment Technology Center in Hollywood. He’s right, of course. Few, if any, of the applications touted as intelligent at IBC are cognitively aware.

Bergquist’s own definition of AI is a bit of a mouthful: “AI is the design of optimal behaviour of agents in known or unknown computable environments,” he says. “The ability to map the world and to act as an autonomous agent is key.”

By that definition, autonomous cars and game-playing computers are AI, but automated media tagging, signal diagnostics and video search systems are not. Yet these make up the bulk of the AI applications being introduced to media production.

Whether or not they are truly intelligent, tools are needed to automate and speed up the mundane parts of the process, so that more of the video already being captured can be streamed to online platforms and social networks. It’s the digital media equivalent of robots on the auto assembly line. And inevitably that means job losses, although everyone couches this in terms of cost efficiency or streamlining.

Global worth

AI could deliver an additional $13 trillion to the global economy by 2030, putting its contribution to growth on a par with the introduction of the steam engine, if you believe consultancy McKinsey. It expects about 70 per cent of companies, regardless of sector, to adopt at least one form of AI in the next decade.

The BBC will be one of them. Its R&D division is exploring AI to try to increase the scale of the Corporation’s live event coverage. Its research, which won the IBC Best Conference Paper Award, explains how it developed a system that produces an edited video package by selecting and sequencing crops from high-resolution cameras. It points out that the results are then “tweaked by a human operator”, for example by adjusting how often the system cuts between different crops.
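The BBC paper’s method is not reproduced here, but the general idea of selecting and sequencing crops can be illustrated with a minimal Python sketch. It assumes, hypothetically, that each candidate crop already has a per-frame interest score (how such scores are computed is out of scope), and the `min_shot_frames` parameter is an illustrative stand-in for the cut frequency a human operator might tweak.

```python
from typing import List, Optional, Sequence


def sequence_crops(scores: Sequence[Sequence[float]], min_shot_frames: int) -> List[int]:
    """Choose one crop per frame from per-frame interest scores.

    scores[t][c] is a hypothetical interest score for crop c at frame t.
    A cut to a new crop is only allowed once the current shot has lasted
    at least min_shot_frames frames, which caps how often the edit cuts.
    """
    if min_shot_frames < 1:
        raise ValueError("min_shot_frames must be at least 1")
    timeline: List[int] = []
    current: Optional[int] = None
    shot_length = 0
    for frame_scores in scores:
        # Most interesting crop in this frame.
        best = max(range(len(frame_scores)), key=lambda c: frame_scores[c])
        if current is None:
            current, shot_length = best, 1          # first shot of the package
        elif best != current and shot_length >= min_shot_frames:
            current, shot_length = best, 1          # cut to the more interesting crop
        else:
            shot_length += 1                        # hold the current shot
        timeline.append(current)
    return timeline


scores = [[0.9, 0.1], [0.2, 0.8], [0.1, 0.9], [0.1, 0.9], [0.8, 0.2]]
print(sequence_crops(scores, min_shot_frames=1))
print(sequence_crops(scores, min_shot_frames=3))
```

With the same scores, raising `min_shot_frames` from 1 to 3 turns the sequence `[0, 1, 1, 1, 0]` into `[0, 0, 0, 1, 1]`: fewer, longer shots, which is the kind of trade-off an operator would adjust.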

Many production tasks are fundamentally about recording, processing and transmitting information. Yet editing programmes is a deeply creative role.

“Sorting through assets to find good shots isn't the best use of the editor's time - or the fun, creative part of their job,” explains project lead Mike Evans. “We think that AI could help to automate this for them.”

He adds that this technology will be suitable not just for major production companies like the BBC, but also for smaller sports producers that need to increase their visibility, and even for vloggers who want to improve their online presence.

Automated live production

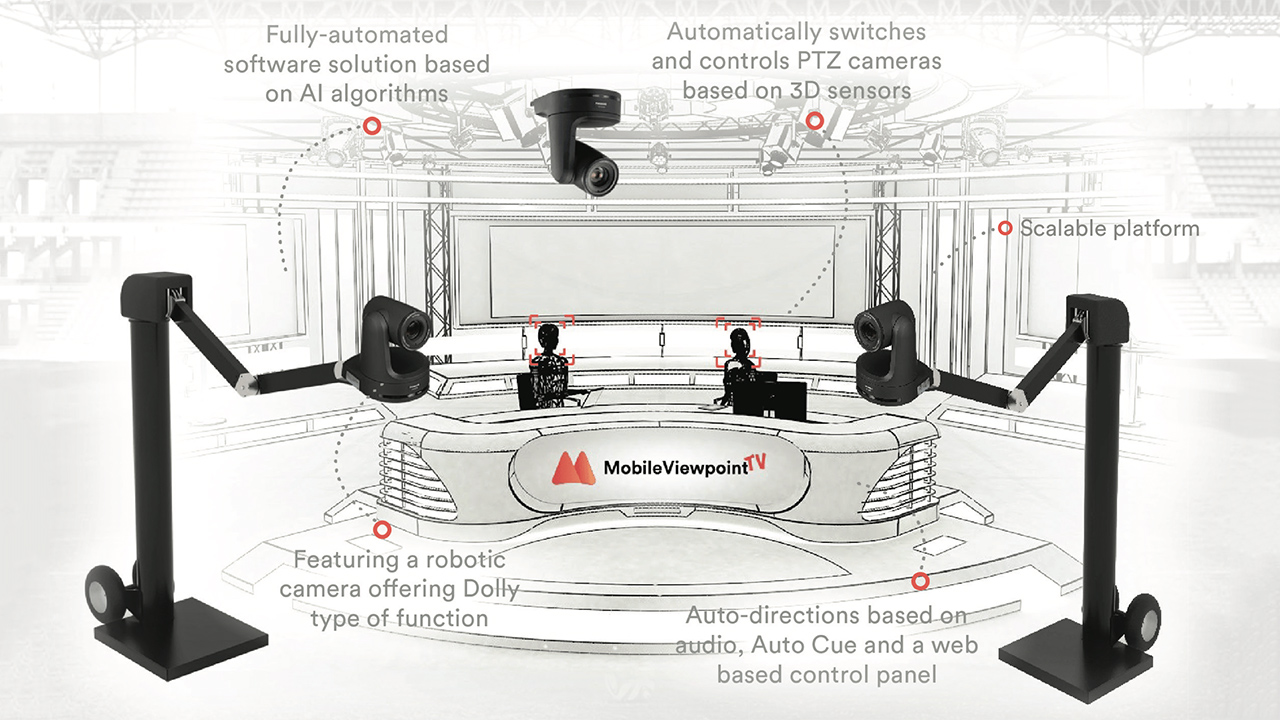

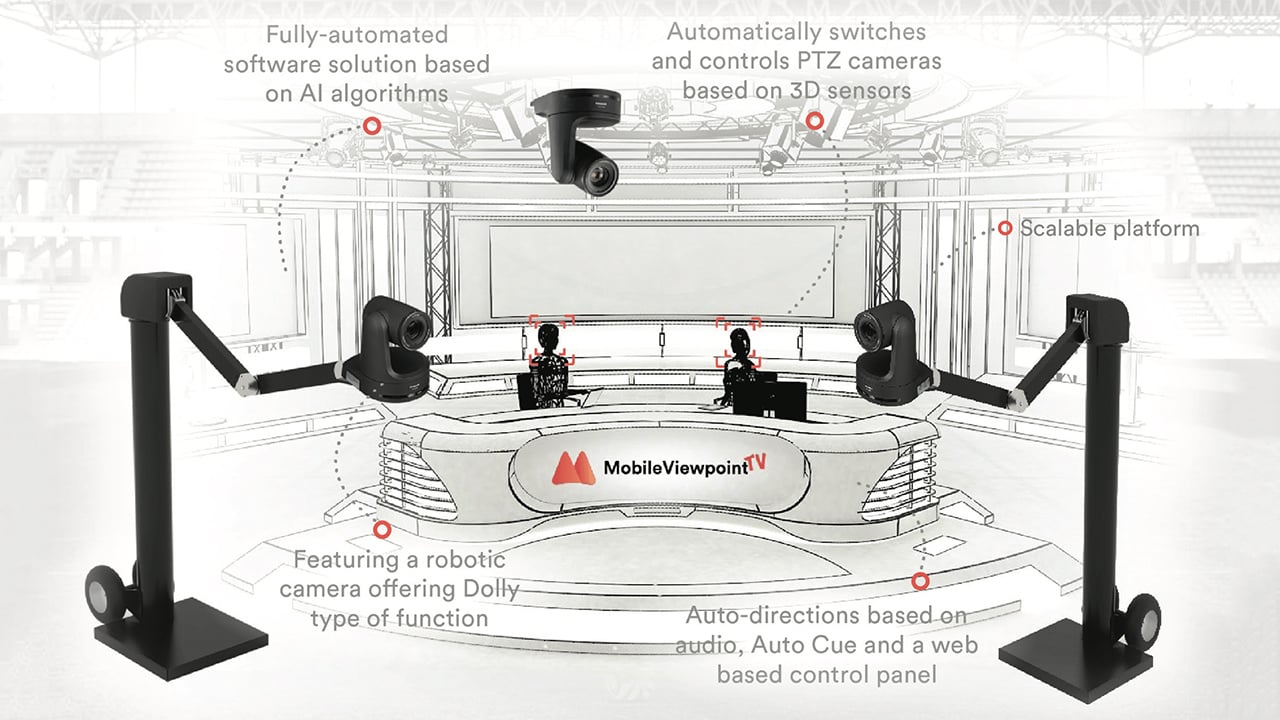

Mobile Viewpoint, for example, has launched VPilot, which uses AI to automate live-to-web production for broadcasters as well as journalists, radio shows and bloggers.

VPilot consists of three PTZ (pan-tilt-zoom) cameras and software that mimics a human director by analysing audio signals (such as microphone levels), video images and other sources, such as autocue and rundown scripts, to identify which camera to switch to and control. The software automatically points the cameras and frames shots to track and focus on TV presenters.
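VPilot’s actual decision logic isn’t described here, so the following is only a hedged Python sketch of how microphone levels alone could drive camera selection. The mic names, the mapping of mics to cameras, the dBFS threshold and the hysteresis margin are all assumptions; a real system would also weigh video analysis and the rundown script.

```python
from typing import Dict, List, Optional


def choose_cameras(
    mic_levels: List[Dict[str, float]],
    mic_to_camera: Dict[str, int],
    wide_camera: int,
    threshold_db: float = -40.0,
    margin_db: float = 6.0,
) -> List[int]:
    """Pick a camera for each audio window from microphone levels in dBFS.

    Hypothetical rules, not VPilot's algorithm:
    - if no mic is above threshold_db, fall back to the wide shot;
    - otherwise switch to the loudest presenter's camera, but only if that
      mic is at least margin_db louder than the mic currently on air.
    """
    output: List[int] = []
    live_mic: Optional[str] = None
    for levels in mic_levels:
        active = {mic: db for mic, db in levels.items() if db >= threshold_db}
        if not active:
            live_mic = None
            output.append(wide_camera)          # nobody talking: go wide
            continue
        loudest = max(active, key=active.get)
        if live_mic is None or live_mic not in active:
            live_mic = loudest                  # current speaker stopped
        elif loudest != live_mic and active[loudest] - active[live_mic] >= margin_db:
            live_mic = loudest                  # clearly louder speaker takes over
        output.append(mic_to_camera[live_mic])
    return output


windows = [
    {"host": -20.0, "guest": -50.0},
    {"host": -22.0, "guest": -19.0},
    {"host": -30.0, "guest": -15.0},
    {"host": -60.0, "guest": -55.0},
]
print(choose_cameras(windows, {"host": 1, "guest": 2}, wide_camera=0))
```

The margin is a simple form of hysteresis: without it, two presenters speaking at similar levels would trigger rapid back-and-forth cuts, which is exactly what a human director avoids.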

Adobe is making AI a central component of its Creative Cloud editing and finishing platform, with systems for facial and object recognition. Adobe Premiere users can now also use a plug-in from GrayMeta that provides metadata about people, landmarks, logos, objects and specific moments. GrayMeta’s John Motz claims this is “an extraordinary time-saver” compared with scrubbing through content manually.
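GrayMeta’s metadata format isn’t described in this article. As a hedged illustration of why time-coded metadata saves time over manual scrubbing, here is a Python sketch over a hypothetical tag schema: the `Tag` fields, category names and the one-second merge gap are all assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Tag:
    kind: str      # hypothetical category, e.g. "person", "logo", "landmark"
    label: str     # what the tagging engine recognised
    start: float   # seconds from the start of the clip
    end: float


def find_segments(tags: List[Tag], kind: str, label: str, gap: float = 1.0) -> List[Tuple[float, float]]:
    """Return merged (start, end) ranges where a given tag appears.

    Overlapping or nearly adjacent detections (no more than `gap` seconds
    apart) are merged, so an editor gets a short list of in/out points.
    """
    hits = sorted((t.start, t.end) for t in tags if t.kind == kind and t.label == label)
    merged: List[Tuple[float, float]] = []
    for start, end in hits:
        if merged and start - merged[-1][1] <= gap:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


tags = [
    Tag("person", "Presenter A", 10.0, 12.0),
    Tag("logo", "Brand X", 11.0, 13.0),
    Tag("person", "Presenter A", 12.5, 15.0),
    Tag("person", "Presenter A", 40.0, 42.0),
]
print(find_segments(tags, "person", "Presenter A"))
```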

There isn’t a media asset management company that hasn’t co-opted AI into its workflow; Prime Focus, Primestream and Tedial are among them. Avid, for example, has a partnership with Microsoft that lets users of Media Composer and other Avid software apply Microsoft’s AI services to content stored in the cloud, again to speed up production.

AI is currently expensive to implement because, to work properly, it needs to be trained on a large volume of data relevant to each organisation. Taking an AI algorithm off the shelf just won’t work. Dalet, for example, has taken a best-of-breed approach in which it will train multiple AI engines on a dataset of studio-based newscasters and sportscasters in order to filter and refine results. The end game is to produce more content, and more relevant content, with fewer people.
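How Dalet combines its engines isn’t detailed here. One generic way to use several engines to “filter and refine results” is agreement voting, sketched below in Python under stated assumptions: the engine names, the `{label: confidence}` output format and both thresholds are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Set


def consensus_labels(
    engine_outputs: Dict[str, Dict[str, float]],
    min_engines: int = 2,
    min_confidence: float = 0.5,
) -> Set[str]:
    """Keep only labels that enough engines agree on.

    engine_outputs maps a (hypothetical) engine name to {label: confidence}.
    A label survives if at least min_engines engines report it with
    confidence >= min_confidence; everything else is filtered out.
    """
    votes: Dict[str, int] = defaultdict(int)
    for labels in engine_outputs.values():
        for label, confidence in labels.items():
            if confidence >= min_confidence:
                votes[label] += 1
    return {label for label, count in votes.items() if count >= min_engines}


outputs = {
    "engine_a": {"presenter": 0.9, "studio": 0.7, "goal": 0.3},
    "engine_b": {"presenter": 0.8, "crowd": 0.6},
    "engine_c": {"studio": 0.55, "goal": 0.9},
}
print(sorted(consensus_labels(outputs)))
```

Requiring agreement trades recall for precision: a label one engine gets wrong is dropped, but so is a correct label only one engine spots.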

It is telling that IBC’s Hall 7, which used to be the place to find the latest creative content production techniques from the likes of Avid or Autodesk, now has its biggest booth taken over by IBM. IBM is flogging a number of technologies, but AI is high on its list, having demonstrated applications in the automated assembly of highlights for US PGA Golf and Wimbledon earlier this year.
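IBM’s highlights system isn’t described in detail here. As a hedged sketch of what automated highlight assembly can involve, the Python below assumes each clip already carries a hypothetical excitement score (for instance derived from crowd noise), greedily picks the highest-scoring clips that fit a time budget, and then plays them back in match order.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Clip:
    start: float        # seconds into the match
    duration: float     # seconds
    excitement: float   # hypothetical score, higher means more exciting


def assemble_highlights(clips: List[Clip], max_seconds: float) -> List[Clip]:
    """Greedily pick the most exciting clips that fit the time budget,
    then return them in chronological order for playback."""
    chosen: List[Clip] = []
    used = 0.0
    for clip in sorted(clips, key=lambda c: c.excitement, reverse=True):
        if used + clip.duration <= max_seconds:
            chosen.append(clip)
            used += clip.duration
    return sorted(chosen, key=lambda c: c.start)


clips = [
    Clip(100.0, 20.0, 0.9),
    Clip(300.0, 40.0, 0.95),
    Clip(500.0, 30.0, 0.4),
    Clip(700.0, 15.0, 0.8),
]
reel = assemble_highlights(clips, max_seconds=80.0)
print([c.start for c in reel])
```

Greedy selection is simple but not optimal; filling the budget exactly is a knapsack problem, and a production system would also enforce editorial rules such as always including the winning point.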

Discovery’s Eurosport is also working to introduce more machine learning to pump out more personalised golf, tennis and Olympics content, but says it wants to “balance the algorithm with editorial curation”. The risk, from its point of view, is that if viewers are only served what they think they want, they will end up in a bubble and miss out on all the other content that Discovery wants subscribers to consume.
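Eurosport’s actual approach isn’t described here. A minimal Python sketch of one way to “balance the algorithm with editorial curation” is to reserve a fixed share of feed slots for editorial picks; the `every` and `length` parameters and the item IDs are illustrative assumptions.

```python
from typing import List


def blend_feed(algorithmic: List[str], editorial: List[str], every: int = 3, length: int = 10) -> List[str]:
    """Interleave personalised picks with editorial ones.

    Every `every`-th slot is reserved for an editorial pick, so viewers still
    see content outside their predicted tastes. If one list runs out, the
    other fills the slot; duplicates are skipped.
    """
    if every < 1:
        raise ValueError("every must be at least 1")
    feed: List[str] = []
    seen = set()
    algo, edit = iter(algorithmic), iter(editorial)
    while len(feed) < length:
        editorial_slot = (len(feed) + 1) % every == 0
        source, fallback = (edit, algo) if editorial_slot else (algo, edit)
        item = next((x for x in source if x not in seen), None)
        if item is None:
            item = next((x for x in fallback if x not in seen), None)
        if item is None:
            break  # both lists exhausted
        feed.append(item)
        seen.add(item)
    return feed


print(blend_feed(["a1", "a2", "a3", "a4"], ["e1", "e2"], every=3, length=6))
```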

Netflix is perhaps the most advanced in the industry at attempting to hack the cognitive relationship between stories and audiences in order to fine tune its originals production and its recommendations.

AI startup Corto is attempting to go beyond Netflix by automatically extracting every aspect of a piece of content, including values such as white balance and frame composition, and then mapping it to the emotional reaction a viewer has on watching it. It plans to do the latter by using MRI scans to, quite literally, hack the brain.
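Corto’s extraction pipeline isn’t public in this article. To show what extracting a value such as white balance can mean in practice, here is a hedged Python sketch of the classic gray-world estimate, which assumes a scene should average out to neutral grey; representing a frame as a list of RGB tuples is a simplification for illustration.

```python
from typing import Iterable, Tuple

Pixel = Tuple[float, float, float]


def gray_world_cast(pixels: Iterable[Pixel]) -> Tuple[float, float]:
    """Estimate a frame's colour cast under the gray-world assumption.

    Returns (mean_red / mean_green, mean_blue / mean_green). A red ratio
    above 1 suggests a warm cast; a blue ratio above 1 suggests a cool one.
    """
    total_r = total_g = total_b = 0.0
    count = 0
    for r, g, b in pixels:
        total_r += r
        total_g += g
        total_b += b
        count += 1
    if count == 0 or total_g == 0:
        raise ValueError("need at least one pixel with non-zero green")
    # The pixel count cancels, so ratios of totals equal ratios of means.
    return total_r / total_g, total_b / total_g


print(gray_world_cast([(200, 100, 50), (100, 100, 150)]))
```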

“We will use MRI scans to measure brain activity to infer what emotional response a character or narrative has,” explained Bergquist, who is also Corto’s CEO. “That really is the ultimate - there is no greater level of granularity beyond this.”
