Replay: Vestri is a robot that can predict the consequences of its own actions, and the repercussions over time could be huge.

Vestri the robot can visualise the possible future consequences of its own actions and act upon them.

If you have ever struggled to learn something new, such as a language, there is a school of thought that says the route could be made smoother if you learnt like a baby. An example of this was when my sister, a fluent speaker of at least three languages, decided to teach me some Italian. Unlike the usual way of teaching, which often involves cross-referencing with the English equivalents of words, she only spoke Italian to me. I literally had to work out what she was on about!

This worked for about five minutes until I got bored. However, it did drill the words into me at the time, and I suppose it made me associate the sounds with actions rather than performing an internal translation into English. There are, of course, many facets to this, and human learning is a highly complex subject.

Enter Vestri

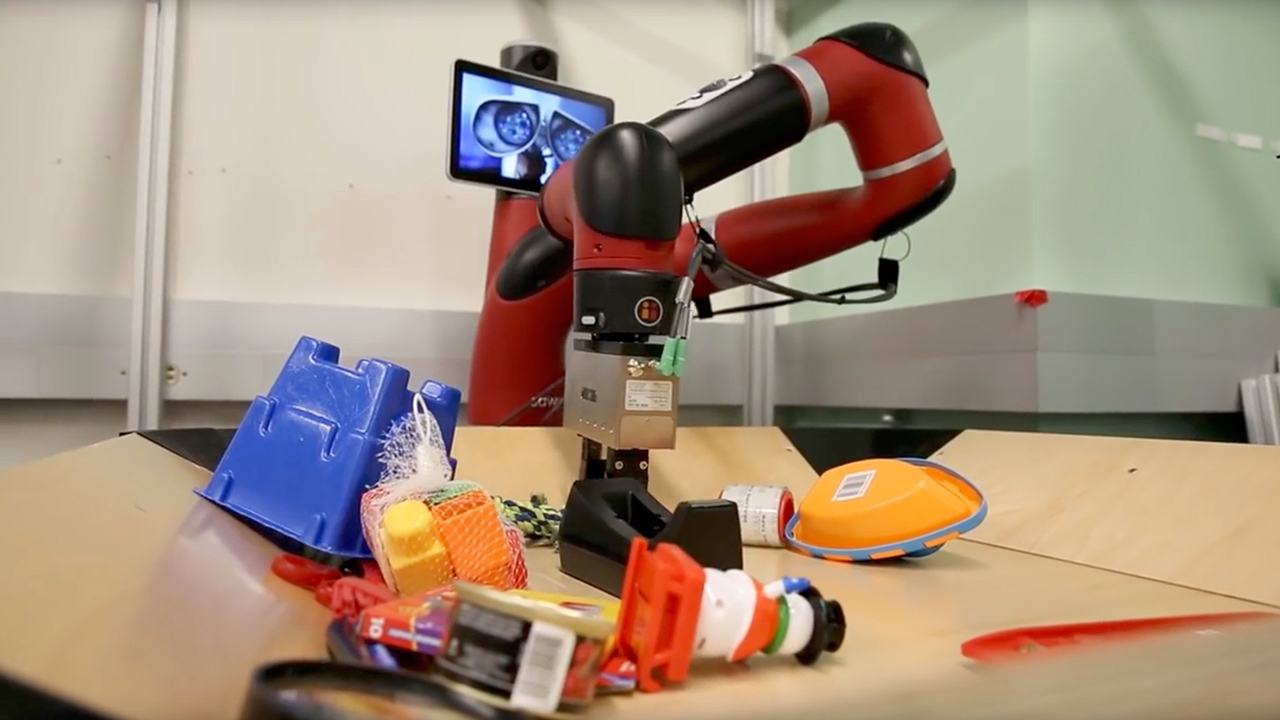

All of which has absolutely nothing to do with the focus of this story, other than the fact that researchers at the University of California, Berkeley have designed a robot called Vestri that learns like a human baby. We are used to seeing AI systems learn from examples that are given to them. The difference with this robot is that it has been designed to use visual foresight. In other words, it can visualise and effectively conceptualise the effects of its actions.

Using its camera whilst it manipulates objects, it can ‘imagine’, if that is the right word, or rather predict, what will happen if it moves objects in a certain order. It achieves this by trial and error, which clearly negates the need for a long-haired man in a coffee-stained T-shirt to spend sleepless nights programming in every possible scenario.
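For the curious, the general idea of "try things, learn what they do, then imagine before acting" can be sketched in a few lines of Python. This is only a toy under my own assumptions, not how the Berkeley team built Vestri: the object lives on a single line rather than in camera images, and every name here (`real_world`, `learn_by_trial_and_error`, `imagine`, `plan`) is made up for illustration.

```python
import random

def real_world(position, push):
    """Hidden physics (assumed for this toy): a push moves the object 0.8x as far."""
    return position + 0.8 * push

def learn_by_trial_and_error(trials=200, seed=0):
    """Try random pushes, watch the result, and estimate the effect of a push."""
    rng = random.Random(seed)
    num = den = 0.0
    for _ in range(trials):
        position = rng.uniform(-1.0, 1.0)
        push = rng.uniform(-1.0, 1.0)
        moved = real_world(position, push) - position
        num += push * moved
        den += push * push
    return num / den  # least-squares estimate of how far a unit push moves the object

def imagine(position, pushes, effect):
    """Predict where the object ends up after a sequence of pushes, without touching it."""
    for push in pushes:
        position += effect * push
    return position

def plan(position, goal, effect, candidates=500, steps=3, seed=1):
    """Imagine many random push sequences; keep the one predicted to land nearest the goal."""
    rng = random.Random(seed)
    best, best_error = None, float("inf")
    for _ in range(candidates):
        pushes = [rng.uniform(-1.0, 1.0) for _ in range(steps)]
        error = abs(imagine(position, pushes, effect) - goal)
        if error < best_error:
            best, best_error = pushes, error
    return best

# Learn first, then plan in imagination, then act for real.
effect = learn_by_trial_and_error()
pushes = plan(position=0.0, goal=1.5, effect=effect)
position = 0.0
for push in pushes:
    position = real_world(position, push)
print(round(effect, 2), round(position, 2))
```

Nobody told the program that a push moves the object 0.8 times as far; it worked that out from its own attempts and then used the estimate to pick a plan before moving anything. The real robot has the far harder job of predicting what its camera will see.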

It is early days, but the potential of such a system is clear to see. Driverless cars are the first area that would benefit greatly from such a system in advanced form. A car could learn roads and the behaviours on them: for example, when schools are letting out and more care might be needed in case someone runs into the road, or when certain roads are busy. It might even achieve the impossible by predicting what a BMW or Audi driver might do next. The possibilities are endless.

There’s more. More recent developments allow Vestri to learn to handle different types of object. For instance, it can learn to be careful with fragile objects such as glass, or with flexible objects such as rope. This might well make factory robots much more capable, but such dexterity could also assist with motorised prosthetic limbs and wheelchairs. Instead of every minor movement being controlled, the device might only need one impulse, and through learned behaviour could carry out a task based upon what it sees and feels.

I’m no expert in the field of AI or even programming, but such developments offer a glimpse of how some of AI’s most interesting challenges could be met. AI that has an idea of the consequences of its actions before it performs them is a pretty big leap forward.

Watch Vestri in action below, and then go to the Berkeley website to read some more about how it achieves this.

Tags: Technology
